Cowork Just One-Shotted a Flight. Anthropic's Shell Play.

Cowork on Opus 4.7 booked 8 flights end-to-end. Anthropic's racing the open stack for the agent shell layer — and the 2014 container playbook is back.

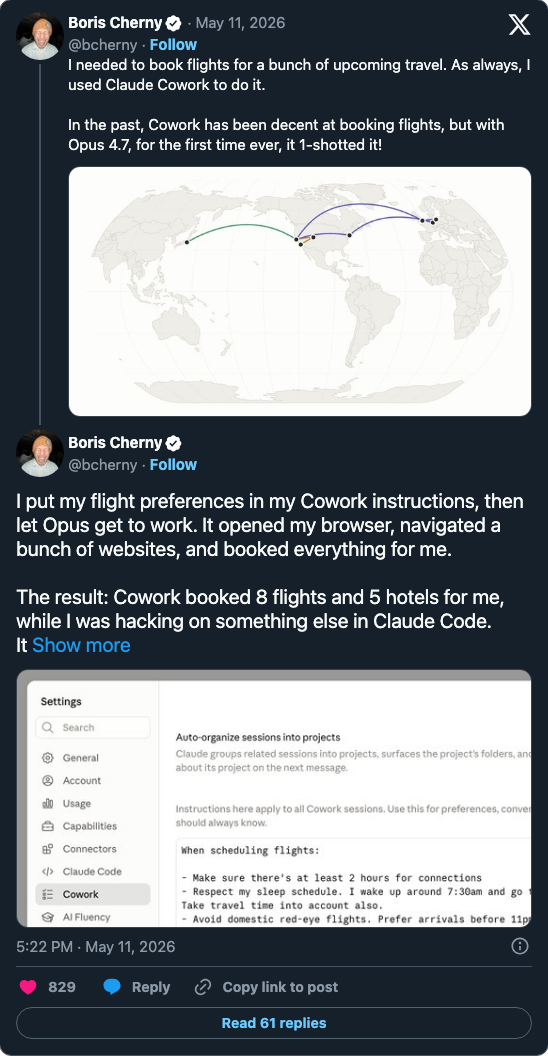

[Boris Cherny](https://x.com/bcherny/status/2053994083497238712), Anthropic's Claude Code lead, posted a tweet on May 12 that's worth reading carefully because it's the inflection nobody quite expected to land this week:

“I needed to book flights for a bunch of upcoming travel. As always, I used Claude Cowork to do it. In the past, Cowork has been decent at booking flights, but with Opus 4.7, for the first time ever, it 1-shotted it!”

The follow-up tweet has the operational detail: Cherny put his preferences in his Cowork instructions, walked away, and Cowork “opened my browser, navigated a bunch of websites, and booked everything.” Eight flights and five hotels, end-to-end, while he was “hacking on something else in Claude Code.” That’s the demo Anthropic has been promising since Cowork launched as a research preview in January 2026 — and it’s the demo that has fallen over mid-task, in front of audiences, more times than anyone at Anthropic would care to count.

This post is not really about a flight booking. It’s about what happens when the Cowork demo finally works the same week Anthropic ships Claude Code Agent View as a research preview. Read the two as a pair and the strategic shape becomes obvious: Anthropic is no longer trying to win only the engine layer of agentic coding — it’s racing to claim the shell layer too, before the open community productizes it first.

That’s the 2014 container-infra playbook running again, and the open stack — memory, observability, linters, multiplexers, skill marketplaces — is racing to fill the same surface.

What the shell layer actually is

When agent runtimes get talked about loosely, “agent” usually means the engine — the Claude Code or Codex or OpenClaw instance that takes a prompt, picks tools, and writes code. But shipping that engine to a developer is not the same as shipping a workflow. In practice, the workflow needs five surrounding things:

- A multiplexer: a way to run more than one agent session at once and see them in one place. This is what Agent View addresses for Claude Code: a unified screen that surfaces every session (running, blocked on you, or done) with `claude agents`. Before Agent View, you opened terminal tabs and tried to remember which one was the bug-fix and which was the PR review.

- Persistent memory: context that survives across sessions, not just within one. Without it, every “long-running” agent is fictional; what’s actually happening is that the human re-pastes the relevant background each morning.

- Observability: when something goes wrong (and it does, frequently), where do you go to find out why? Logs aren’t enough; you need structured analytics over agent behavior.

- Output validators: the agent emits work product (code, documents, plans). Something has to verify the quality before that work product hits production. This is a brand-new layer that didn’t exist 90 days ago.

- A package/distribution surface: the skills, MCP servers, and prompts the agent uses. This is the closest analogue to the npm/Docker Hub layer of the previous infrastructure cycle.

Cowork bundles all five of these, and the engine, and the browser sandbox, into one product. That’s the bet. Until now the bet has looked premature because the engine wasn’t reliable enough to make the shell visible — when Cowork crashed mid-flight-booking, you didn’t experience the multiplexer or the memory or the observability, you experienced the failure. Opus 4.7 changes that. The engine got reliable enough to reveal the shell.
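One way to make the five-layer framing concrete is to treat it as an inventory and ask which slots are empty. The Python sketch below is only an illustration: the `ShellStack` class, its field names, and the placeholder values are assumptions made here, not anything Anthropic or the open-stack projects ship.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class ShellStack:
    """Hypothetical inventory: one engine plus the five shell layers."""
    engine: str
    multiplexer: Optional[str] = None
    memory: Optional[str] = None
    observability: Optional[str] = None
    output_validator: Optional[str] = None
    distribution: Optional[str] = None

    def missing_layers(self) -> List[str]:
        """Names of the shell layers that nothing fills yet."""
        return [
            f.name
            for f in fields(self)
            if f.name != "engine" and getattr(self, f.name) is None
        ]


# A hand-assembled stack where nobody has picked memory or a validator yet.
# All values are placeholders, not product recommendations.
diy = ShellStack(
    engine="any agent CLI",
    multiplexer="terminal tabs",
    observability="some tracing tool",
    distribution="shared prompt folder",
)
print(diy.missing_layers())
```

Run against a real team’s setup, the empty slots are the places where a bundled product or an open-stack tool would have to earn its keep.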

The two-layer push, both shipping the same week

The week of May 12 is a coordinated product release in two parts.

Part one: Cowork productionization. Cherny’s flight-booking post is the consumer-facing tell. Anthropic’s Cowork product page has been live since January, but until this week the canonical demo was somebody at a conference asking Cowork to do something modestly hard and watching it stall. The 1-shot booking is the moment the canonical demo becomes shareable. Felix Rieseberg, who leads the Cowork and Claude Code Desktop work at Anthropic, has been arguing for over a year that “AI should have its own computer”: a sandboxed VM that the agent fully controls and that doesn’t share state with your laptop. The Cowork architecture is that argument shipped as a product, and this week’s flight-booking 1-shot is the proof that the architectural bet pays off.

Part two: Agent View as the multiplexer. Anthropic shipped Agent View on May 11 inside Claude Code 2.1.139 as a research preview. It’s a single-screen dashboard for every Claude Code session you have running — surfacing session ID, whether it’s waiting on you, the last assistant response, and the timestamp of the last turn. You can move an existing session into the background with /bg, kick off a new background job with claude --bg "<task>", peek at the latest turn with the spacebar, or attach to the full transcript with Enter. Adam Brown at Anthropic announced the research preview the same day, and Simon Willison’s live blog of Code w/ Claude earlier in the month already framed the surface stack — CLI → IDE → Desktop → Cowork — as the official roadmap.
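For readers who think in data structures, the dashboard described above reduces to one row per session plus an ordering rule. The Python sketch below models that row with the four fields mentioned (session ID, status, last assistant response, last-turn timestamp). The class names, the three status values, and the "waiting on you first" sort are assumptions for illustration; this is not Anthropic’s schema or Agent View’s actual behavior.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum
from typing import Iterable, List


class Status(Enum):
    # Lower value sorts first: sessions that need a human come to the top.
    WAITING_ON_USER = 0
    RUNNING = 1
    DONE = 2


@dataclass(frozen=True)
class SessionRow:
    """Hypothetical dashboard row for one agent session."""
    session_id: str
    status: Status
    last_response: str
    last_turn_at: datetime


def triage(rows: Iterable[SessionRow]) -> List[SessionRow]:
    """Order rows by status, then most recent turn first within a status."""
    return sorted(
        rows, key=lambda r: (r.status.value, -r.last_turn_at.timestamp())
    )


def at(hour: int, minute: int = 0) -> datetime:
    """Helper for the example: a fixed UTC timestamp on an arbitrary day."""
    return datetime(2026, 5, 12, hour, minute, tzinfo=timezone.utc)


rows = [
    SessionRow("bug-fix", Status.RUNNING, "Running the test suite...", at(10)),
    SessionRow("pr-review", Status.WAITING_ON_USER, "Approve this change?", at(9)),
    SessionRow("docs", Status.DONE, "Docs updated.", at(11)),
    SessionRow("migration", Status.WAITING_ON_USER, "Which database?", at(9, 30)),
]
ordered_ids = [r.session_id for r in triage(rows)]
print(ordered_ids)
```

The point of the ordering is the one the terminal-tab workflow could not give you: the sessions blocked on a human are visible first, without anyone having to remember which tab they live in.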

The two ship together because they solve adjacent problems. Agent View is the shell layer for coding sessions. Cowork is the shell layer for workflow sessions (browse, click, fill forms, transact). Same architectural insight, two execution surfaces. Anthropic is not picking one — it’s picking both, and assuming the same harness will swallow both surfaces over the next 12–18 months.

The open stack is decomposing into fundable layers

Now look at what GitHub trending and Hacker News did the same week, because the open community is making exactly the same architectural read — except in pieces, because no single open team can ship the whole vertical at Anthropic’s pace.

The convergence of evidence is striking. Six surfaces independently shipped or trended on the same five layers in a single week:

| Layer | Open-stack instance | Anthropic-shell equivalent |

|---|---|---|

| Multiplexer (CLI) | farion1231/cc-switch — +1,340 stars/24h | Agent View |

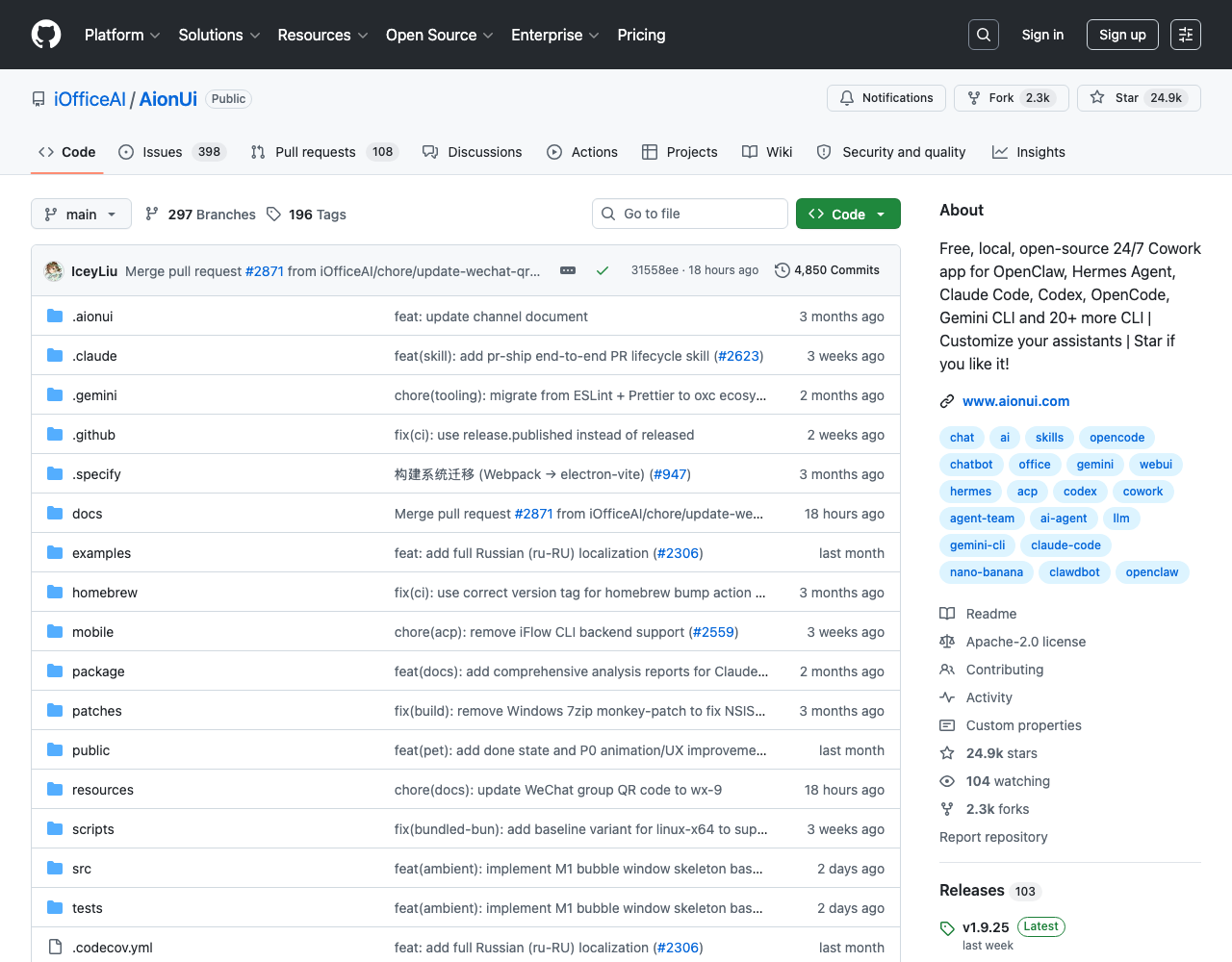

| Multiplexer (Desktop) | iOfficeAI/AionUi — +347 stars/24h | Cowork desktop client |

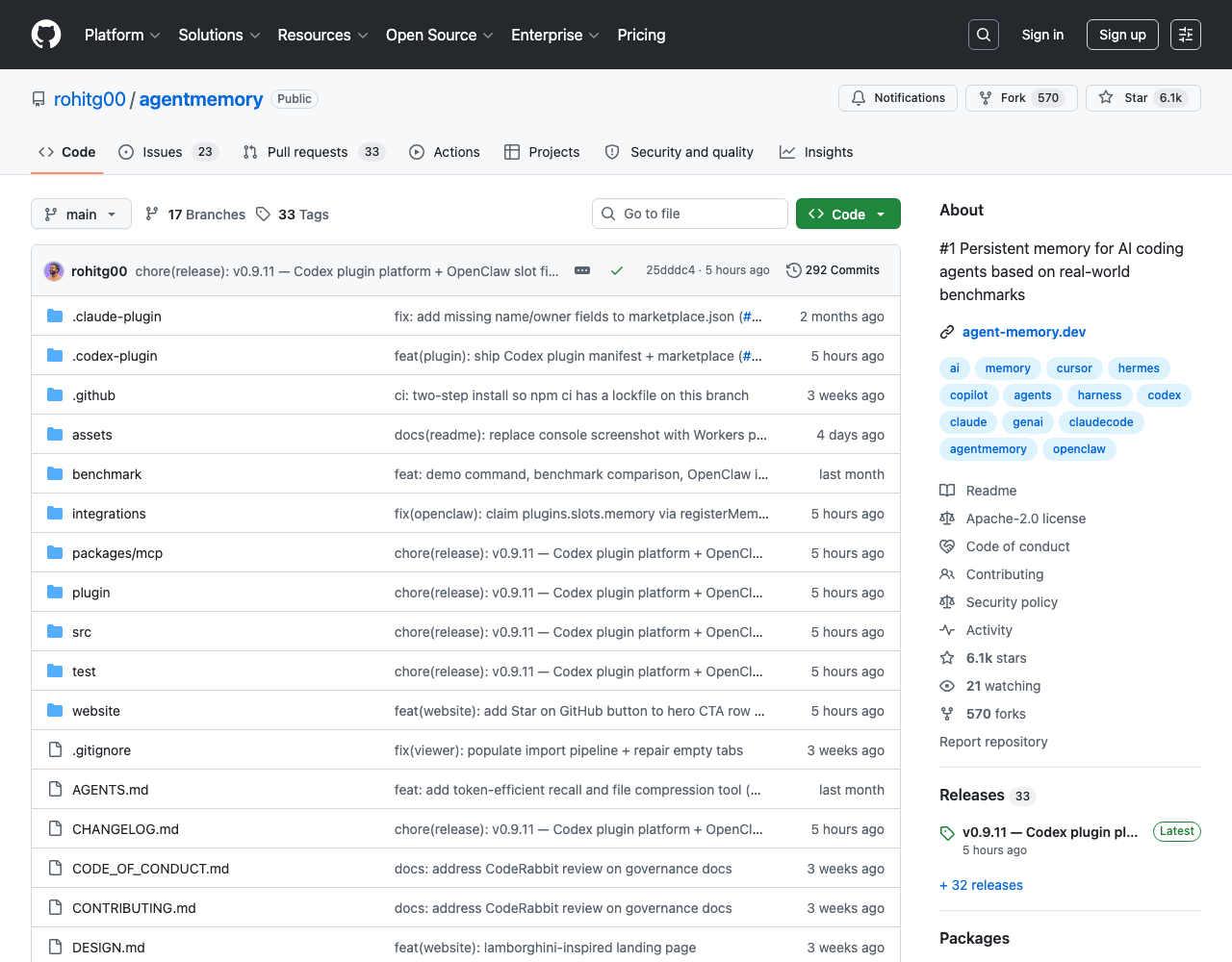

| Persistent memory | rohitg00/agentmemory — +1,067 stars/24h, ICLR-benchmark-backed | Cowork’s session continuity |

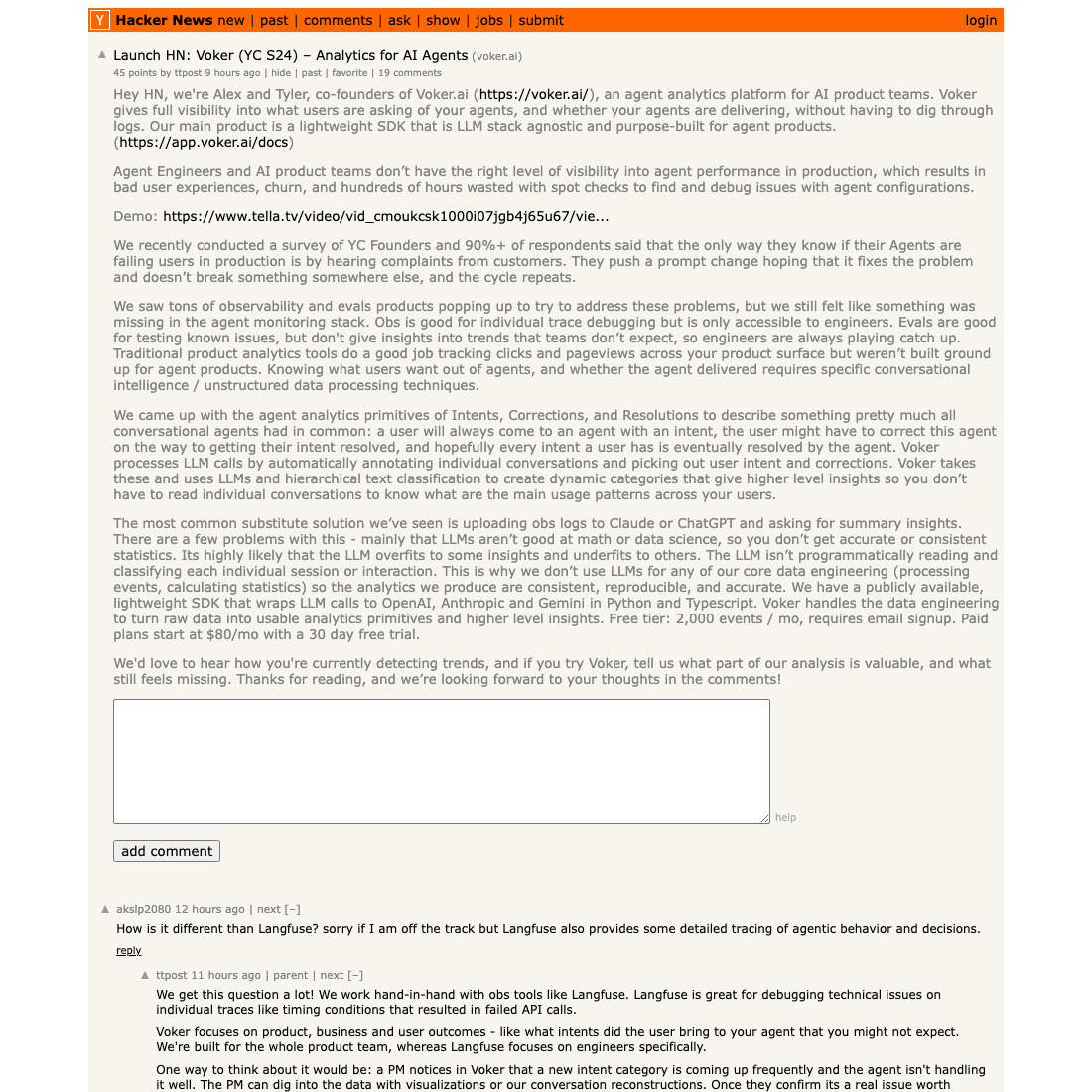

| Observability | Voker (YC S24) — Launch HN this week | Cowork’s internal telemetry |

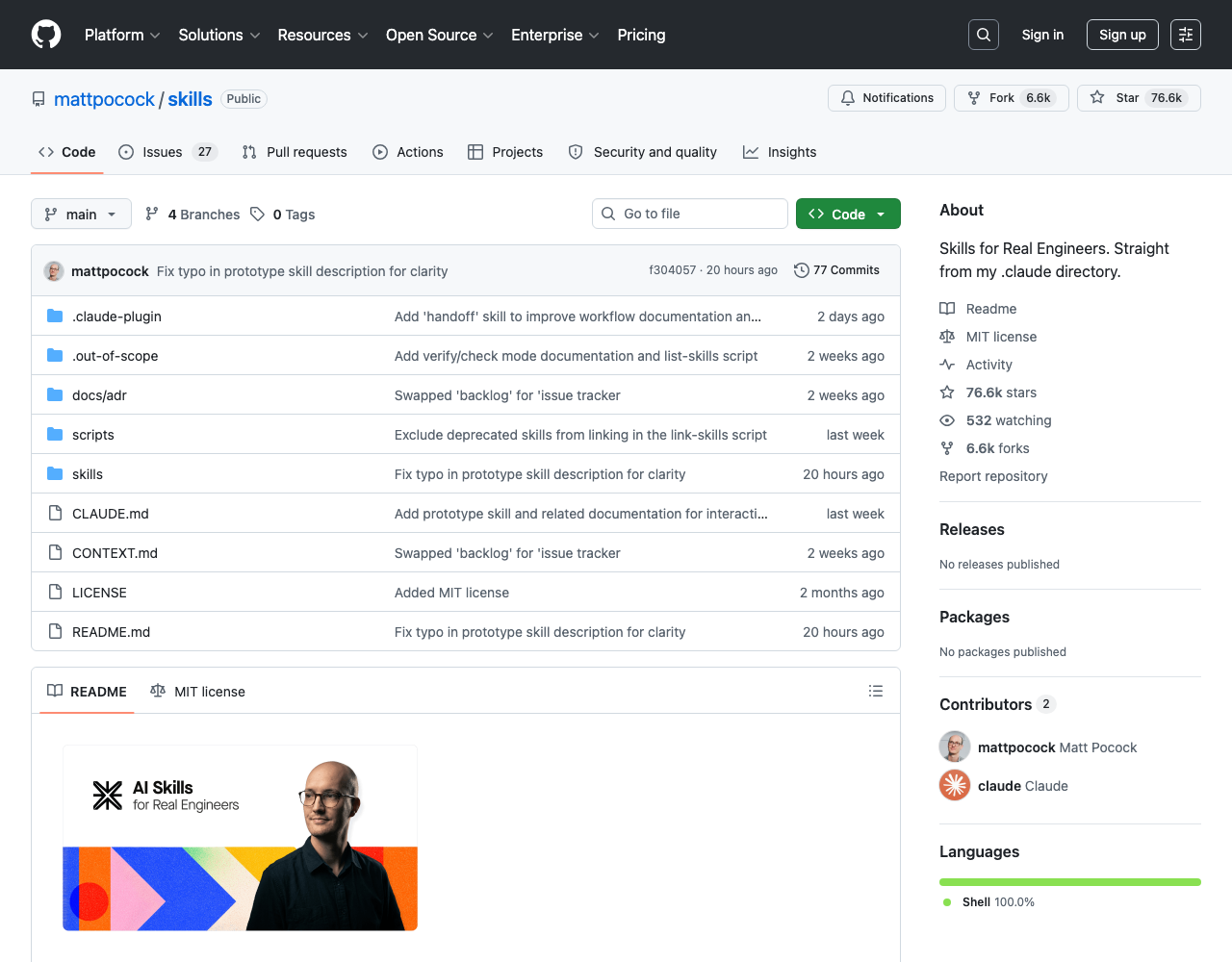

| Skill marketplace | mattpocock/skills — GitHub trending #1, +3,886 stars/24h | Anthropic’s skills surface |

| Engine peer | NousResearch/hermes-agent — +2,439 stars/24h | Claude Code |

| Output validator | millionco/react-doctor | (Anthropic has nothing here yet) |

Two things jump out from this table.

The first is that the open stack already has every layer Cowork is bundling, plus one Cowork doesn’t have yet (output validators — react-doctor and its growing peer set). The community is not behind. It’s ahead in some places (validators), at parity in others (memory, multiplexers), and trailing in exactly one place: the integrated experience of having all five layers ship as a single product with one billing relationship.

The second is the structurally telling detail in cc-switch’s positioning. The cc-switch README explicitly lists OpenClaw as one of the assemblable CLIs — alongside Claude Code, Codex, OpenCode, Gemini CLI, and Hermes Agent. It does not treat OpenClaw as the aggregator. That is the strategic question OpenClaw and every other engine-layer player has to answer this quarter: defend the engine-layer position (be the default substrate that the shell wraps), or contest the shell layer (build the aggregator yourself and absorb the engine-as-substrate framing). Anthropic has visibly chosen “both,” and the rest of the engine-layer field hasn’t yet picked.

The 2014 container-infra playbook, running again

The strongest signal that this is not just a feature week is how cleanly it maps onto the 2014–2017 container-infrastructure cycle. The pattern then went like this:

- A novel runtime primitive ships — Docker (2013–14). The community generates dozens of complementary tools — Compose, Swarm, Mesos, Marathon, Rancher, Tutum, CoreOS Fleet, all the schedulers and registries.

- Within 24 months, the platform-of-platforms wins — Kubernetes (2014, productized 2015–17). The engine-layer becomes commodity (any container runtime works, from Docker to containerd to CRI-O). The shell-layer (k8s) eats the value.

- The independent tools don’t disappear; they get acquired, absorbed, or sidelined (Tutum became Docker Cloud, Fleet was deprecated, Mesos stayed an Apache project while the market moved to Kubernetes, and Rancher persisted by becoming a management plane on top of k8s).

The current cycle is the same shape, played at AI speed. The novel primitive is the agent runtime (Claude Code, Codex, OpenClaw, Hermes Agent — the equivalent of “container engine”). The complementary tools are the layers in the table above (memory, observability, validators, multiplexers, marketplaces — the equivalent of Compose/Mesos/etc.). The platform-of-platforms competition is happening right now, in May 2026, and Cowork + Agent View are Anthropic’s bid to be Kubernetes.

The AI Engineer Europe talk on Pi/OpenClaw is the inverse posture — Matthias Luebken’s framing of “embedding the OpenClaw coding agent in your product” assumes the engine is the ingredient, not the meal. That’s the engine-as-commodity bet, the structural counter to what Cowork is doing. Both bets cannot be right. The next 12 months will resolve which one is.

The historical detail worth pulling forward: in 2014 the correct call was to assume k8s would win and to build on top of it. The teams that bet on the open shell winning (Mesos, Swarm, Nomad) had defensible technical positions but lost the platform fight on distribution. The same is plausible here for any team trying to build a Cowork-equivalent without Anthropic’s billing relationship and model-quality moat. We’ve covered some of these dynamics directly — see our deep dive on the cc-switch CLI multiplexer race, the skills directory race with mattpocock and Composio, and the broader skills-directory comparison with codex/pi-mono for how the marketplace layer is fragmenting.

Decision matrix: when to bet on Anthropic’s shell vs assemble your own

Here’s the practical question. If you are building agent-driven product or workflow infrastructure right now — not playing with Claude Code on a side project, but staking a roadmap — which side of this bet do you take?

There is no universal answer, but there is a per-substrate-layer answer.

| Layer | Bet on Anthropic’s shell when… | Bet on the open stack when… |
|---|---|---|
| Multiplexer | Your team uses Claude Code primarily, you want zero integration cost, and you trust Anthropic’s product velocity | You run multiple engines (Codex, OpenClaw, Hermes Agent, Pi) and need a vendor-neutral cockpit: pick cc-switch or AionUi |
| Memory | You want the integrated “session continuity” UX with zero config | You need benchmark-backed retrieval, multi-engine support, or audit-grade memory provenance; agentmemory reports 95.2% on LongMemEval-S and ships /integrations/openclaw |
| Observability | The session-level traces Anthropic exposes in your billing dashboard are enough for you (they are still sparse) | You need real-time alerting, custom metrics, or PM/analyst-readable behavior dashboards; Voker (YC S24) is the cleanest pitch |
| Output validators | Wait: Anthropic does not ship one yet | Ship today: react-doctor for React, with equivalents emerging for svelte/vue/django within 60 days |
| Skill marketplace | You’re inside Claude Code’s first-party path | You want curator-trust as your filter: mattpocock/skills, addyosmani/agent-skills, or VoltAgent/awesome-agent-skills (1,000+ skills, cross-harness) |
| Engine | Pure Anthropic shop, latency-tolerant, premium-tier billing | You need cost-arbitrage (DeepSeek V4), open-weights (Hermes Agent), or air-gapped deployment |

The honest read: most engineering teams should run a hybrid stack for the next 12 months. Use Claude Code + Cowork as the default workflow for human-in-the-loop coding tasks (because the engine quality is real and the integrated UX is polished), but instrument every production agent surface with an open-stack observability tool (Voker, Langfuse, or a custom OpenTelemetry exporter), and pin output validators on every agent-emitted artifact (react-doctor or whatever lands for your stack). The reason: vendor lock-in on the shell layer is the same vendor lock-in you spent 2018–2020 escaping in the cloud-infra cycle, and you do not want to learn the lesson twice.
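The “instrument everything, validate every artifact” advice can be sketched in a few lines of Python. This is a minimal illustration using only the standard library: `python_syntax_validator` is a stand-in validator (it only checks that Python source parses, which is far less than what react-doctor does for React), and the `gate` function, the event name, and the event fields are hypothetical. In a real deployment the `emit` callback would forward to whichever observability tool you chose.

```python
import ast
import json
import time
from typing import Callable, List


def python_syntax_validator(artifact: str) -> List[str]:
    """Stand-in validator: return a list of problems (empty means pass)."""
    try:
        ast.parse(artifact)
    except SyntaxError as exc:
        return [f"syntax error at line {exc.lineno}: {exc.msg}"]
    return []


def gate(
    artifact: str,
    validator: Callable[[str], List[str]],
    emit: Callable[[str], None],
) -> bool:
    """Validate one agent-emitted artifact and emit a structured event.

    Returns True only if the validator found no problems.
    """
    problems = validator(artifact)
    event = {
        "ts": time.time(),
        "event": "artifact_validated",  # hypothetical event name
        "passed": not problems,
        "problems": problems,
    }
    emit(json.dumps(event))
    return not problems


# Example: collect events in memory; swap events.append for a real exporter.
events: List[str] = []
accepted = gate(
    "def add(a, b):\n    return a + b\n", python_syntax_validator, events.append
)
rejected = gate(
    "def add(a, b:\n    return a + b\n", python_syntax_validator, events.append
)
print(accepted, rejected, len(events))
```

Keeping the validator and the event sink as plain callables is the design choice that matters here: either one can be swapped for an open-stack tool or a vendor’s equivalent without touching the other, which is what keeps the hybrid stack from hardening into lock-in.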

For more on the validator wave specifically — including agentmemory’s benchmark-backed pitch and react-doctor’s “your agent writes bad React” framing — see our skill spam validators deep dive. For the memory layer specifically, our agent memory and dream context-files explainer has the detailed comparison. And for the broader question of which substrate layer to bet on, the Cursor SDK vs Browserbase vs OpenAI Apps SDK harness substrates piece is the canonical comparison.

The community signal: where the consensus is forming

The cleanest community framing this week was Boris Cherny’s. The reason his flight-booking tweet matters more than any of Anthropic’s marketing is that it’s dogfood evidence from the team that builds the product. When the person who ships Claude Code is using Cowork to book his own travel and is surprised it worked, the surprise is the data. The same week, Adam Brown’s Agent View announcement and Simon Willison’s Code w/ Claude live-blog carry the secondary signal: insiders and influential observers are converging on the same surface-stack framing. There is no obvious dissent yet from the open-stack camp — cc-switch and AionUi and mattpocock/skills are racing to ship, not pushing back on the architecture. They’ve accepted Anthropic’s read of the stack and are competing on execution.

The first counter-narrative to watch is what Nous Research does with Hermes Agent. With +2,439 stars in 24 hours and a v0.13.0 release shipped May 7, Hermes Agent is the strongest open-weights engine peer to Claude Code right now — and its stated thesis (“the agent that grows with you”) is fundamentally about the engine doing more, not about the shell wrapping more. If Hermes Agent’s user base actually compounds — and the growth curve says it might — the structural alternative is “smart engine + thin shell,” not “thin engine + smart shell.” That’s the bet that makes Cowork irrelevant if it lands.

What to watch over the next 30 days

Three signals will resolve the direction:

- Anthropic’s next pricing move. If Cowork stays in research preview at no incremental cost through June, that’s a sign Anthropic plans to swallow the shell into the existing Pro/Max/Team plan and use it as a wedge against Codex. If a separate Cowork SKU appears, that’s a sign Anthropic is monetizing the shell layer directly, which means the open stack has real economic room to compete.

- OpenClaw’s positioning response. As of this week, OpenClaw is being treated as one of the assemblable CLIs, not as the aggregator. The OpenClaw team has a 30–60 day window to decide whether to defend the engine-layer position or contest the shell. If they ship a Cowork-equivalent, the shell-layer race becomes three-way (Anthropic + OpenClaw + open stack). If they don’t, they bet on commodity engine + dominant developer mindshare: the Linux play, structurally.

- First validator-wave consolidation. react-doctor is alone today. Within 60 days there will be doctor-equivalents for svelte, vue, django, and the language-equivalent test will land for Python, Go, and Rust. Watch for whether one of them is acquired by an observability vendor (Voker, Langfuse, Datadog). That consolidation is the structural moment when the open stack gets its own integrated shell, parallel to Cowork. It’s the equivalent of when Datadog acquired everything in the APM space in 2017–2019 and the open-source telemetry stack collapsed into “send to Datadog.”

The 2014 cycle took three years to resolve. AI cycles run roughly 4× faster, so call it nine months. By February 2027 we’ll know whether Cowork won, whether the open stack consolidated into a credible alternative, or whether the surface fragmented and the market split between Anthropic-shop teams and everyone-else teams. The bet you make this quarter on which side of that split you’re on matters more than the engine you pick.

The flight-booking 1-shot was the easy part. The shell-layer race is the hard part, and it’s already started.

Source receipts: bcherny on X, Anthropic Cowork, Claude Code Agent View coverage, Felix Rieseberg on Latent Space, Simon Willison’s Code w/ Claude live blog, mattpocock/skills, rohitg00/agentmemory, farion1231/cc-switch, iOfficeAI/AionUi, NousResearch/hermes-agent, Voker Launch HN, millionco/react-doctor, AI Engineer Europe Pi talk.