Skill Spam Is a Genre — And the Validators Are Trending

Two-day-old skill ecosystem already spawned validators: react-doctor, agentmemory's LongMemEval benchmarks, and Osmani's curation outpacing first-party.

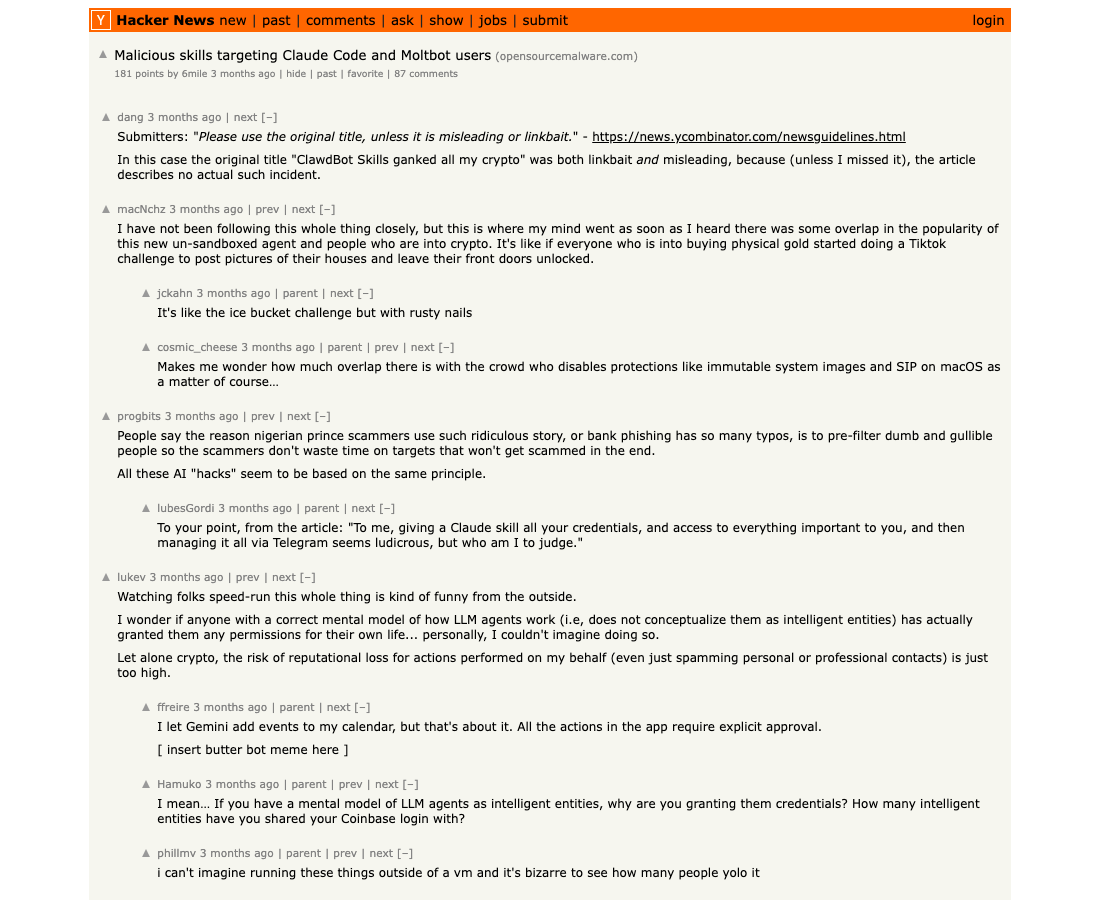

Somebody on Hacker News this week did the AI ecosystem a favor: they named the genre. The thread was about an “Academic Research Skills for Claude Code” pack on GitHub, and the highest-voted comment — exasperated, exact — called it “skill spam.” The phrase stuck inside 24 hours. By Saturday morning, three independent surfaces (HN, GitHub-trending velocity, validator-tool emergence) had aligned on the same complaint: there are now so many skill packs that the next product category is the thing that decides which of them are real.

The relevant detail — the thing that puts you on notice if you’re shipping a skill pack — is the timeline. Anthropic introduced Skills as a Claude Code surface less than three months ago. Within that window, the genre has matured from “skill packs” → “skill marketplaces” → “skill validators” → “benchmark-backed skill primitives” → “skill spam critique on HN front page.” Five generations in a quarter. Two-day-old genres do not usually spawn fix-up tools, but this one did, and the fix-up tools are themselves trending on GitHub.

This is the validator wave. Three buckets are forming. We’ll walk each.

1. Output validators — react-doctor and the agent-writes-bad-code thesis

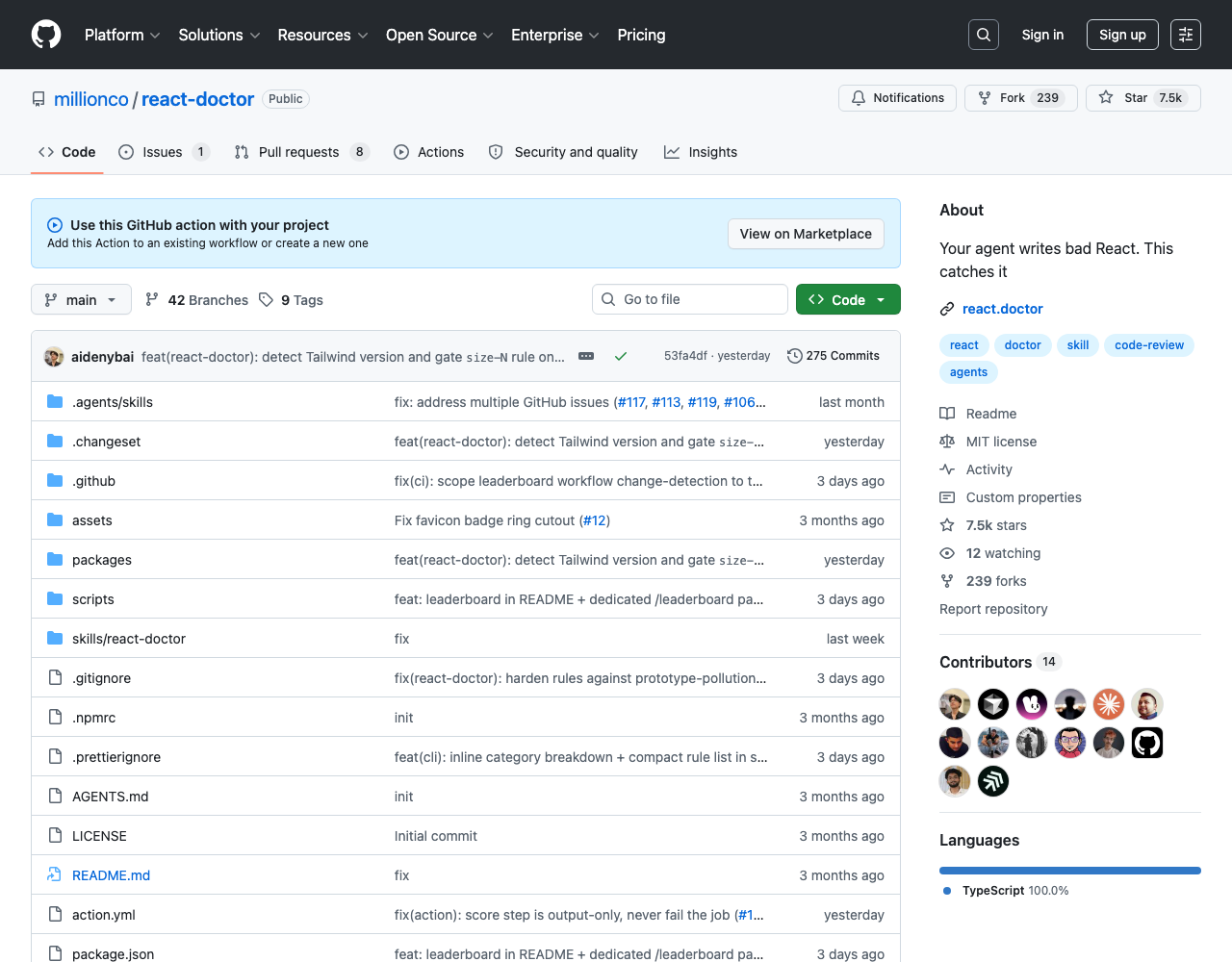

The most pointed entrant is millionco/react-doctor, whose README tagline is “Your agent writes bad React. This catches it.” Note the sentence structure — it’s not “your code has bugs” or “you write bad React.” It’s your agent. The whole framing assumes the code under review was authored by a Claude Code or Cursor session, and the human’s job is to triage AI output.

The tool ships as a composite GitHub Action you drop into .github/workflows/. It outputs a 0–100 quality score and supports fail-on thresholds, offline mode, and PR-comment integration via github-token. Critically, react-doctor works across Claude Code, Cursor, Codex, OpenCode, and 50+ other agents — the validator doesn’t care which harness emitted the bad React. That’s the architectural tell: validators are leveling out across harnesses while skills are still being authored per harness. The validator layer is going horizontal.

The same team’s millionco/claude-doctor — sibling project, same naming convention — diagnoses Claude Code sessions themselves. Not the output, the session. Different validation target, same insight: the output of an agent is now a thing that needs medical attention.

What this means practically: if you’re shipping a skill pack, the new bar is that you can demonstrate the output your skill produces survives a third-party validator. The skill itself isn’t the deliverable anymore. The output trace is. That’s a structural change in how skill quality gets adjudicated — and it’s also why the security framing matters. The Malicious Skills Targeting Claude Code HN thread from earlier in the year was about installation-time threats; react-doctor’s framing is about runtime threats. Both layers need validators that are independent of the skill author.

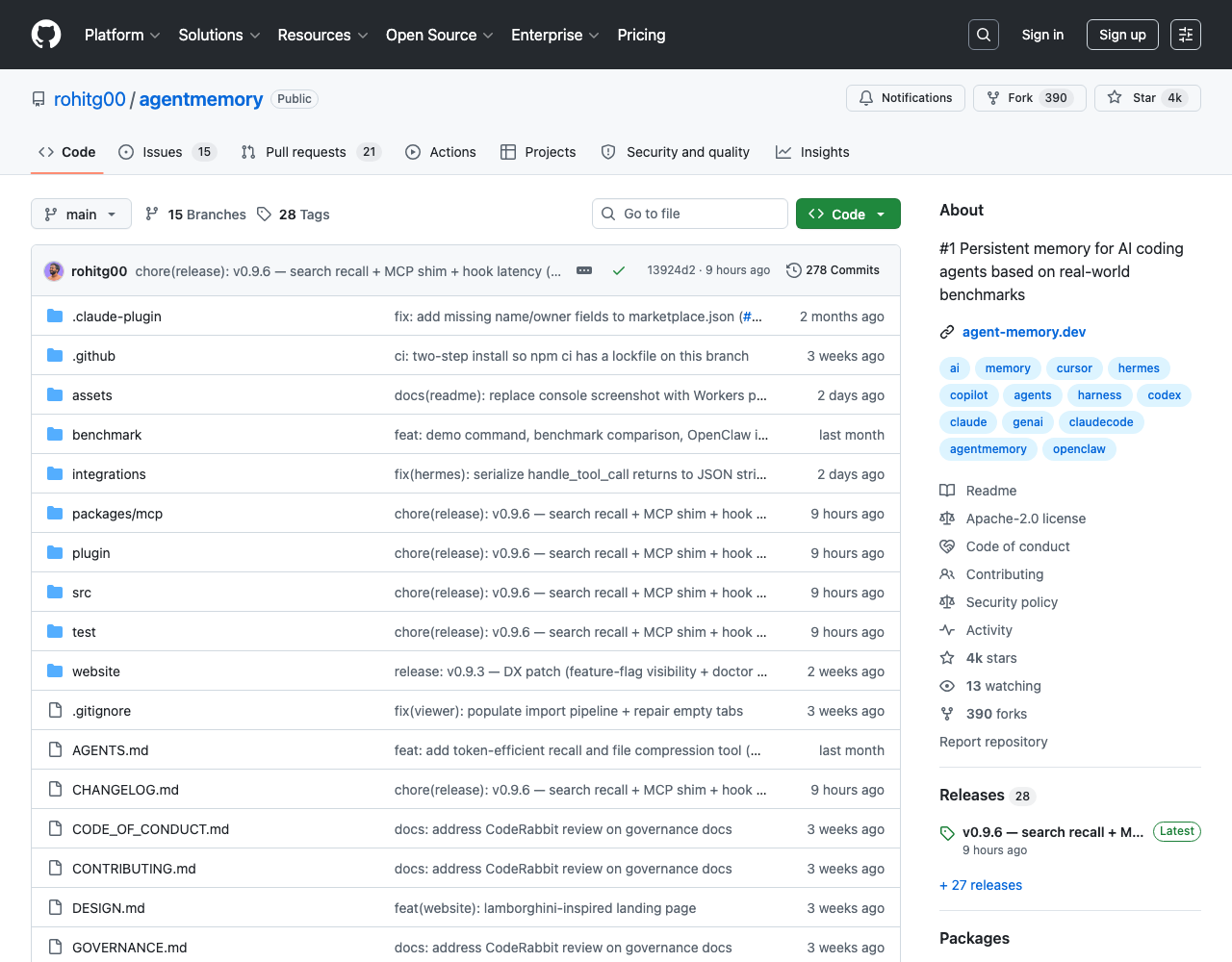

2. Benchmark-backed primitives — agentmemory’s LongMemEval claim

The second bucket is more interesting because it solves the prior problem: how do you know a skill actually works before you install it? rohitg00/agentmemory was the cleanest pitch in this week’s GitHub-trending crop. Tag-line: “#1 Persistent memory for AI coding agents based on real-world benchmarks.” The “based on real-world benchmarks” clause is doing most of the work. Most skill READMEs say “it’s good” — agentmemory ships the LongMemEval (ICLR 2025) result table in-repo:

| Retrieval strategy | LongMemEval-S accuracy | Notes |

|---|---|---|

| BM25 alone | 86.2% | Lexical baseline |

| BM25 + Vector hybrid | 95.2% | Production default |

| Pure vector | 96.6% | +1.4pp gap; higher token cost |

The pitch isn’t “we have memory” — it’s we have memory that scored 95.2% on an academic benchmark. Per the README, this comes with a claim of 92% fewer tokens per session versus full-context pasting and 12 auto-capture hooks (zero memory.add() calls). Whatever you think of the specific numbers, the framing — claim + benchmark + integration — is the new contract. Compare it to the skill packs that ship with neither numbers nor evals: those are spam, definitionally, under this contract.

The wider implication: ICLR-tier academic benchmarks (LongMemEval is from the ICLR 2025 long-term-memory track) are now production-quality moats for skill primitives. The closest analogue is what happened to vector databases circa 2024 — the moment ANN-Benchmark scores became table-stakes, “vector DB but trust me” stopped being a viable pitch. Skills are crossing that threshold this quarter.

The agentmemory repo also ships a dedicated /integrations/openclaw folder — i.e., it’s not Claude-only. That matters because validator/benchmark primitives are explicitly cross-harness; skill packs increasingly are not. A skill pack that ships only an Anthropic version is now a category narrower than the primitive it depends on.

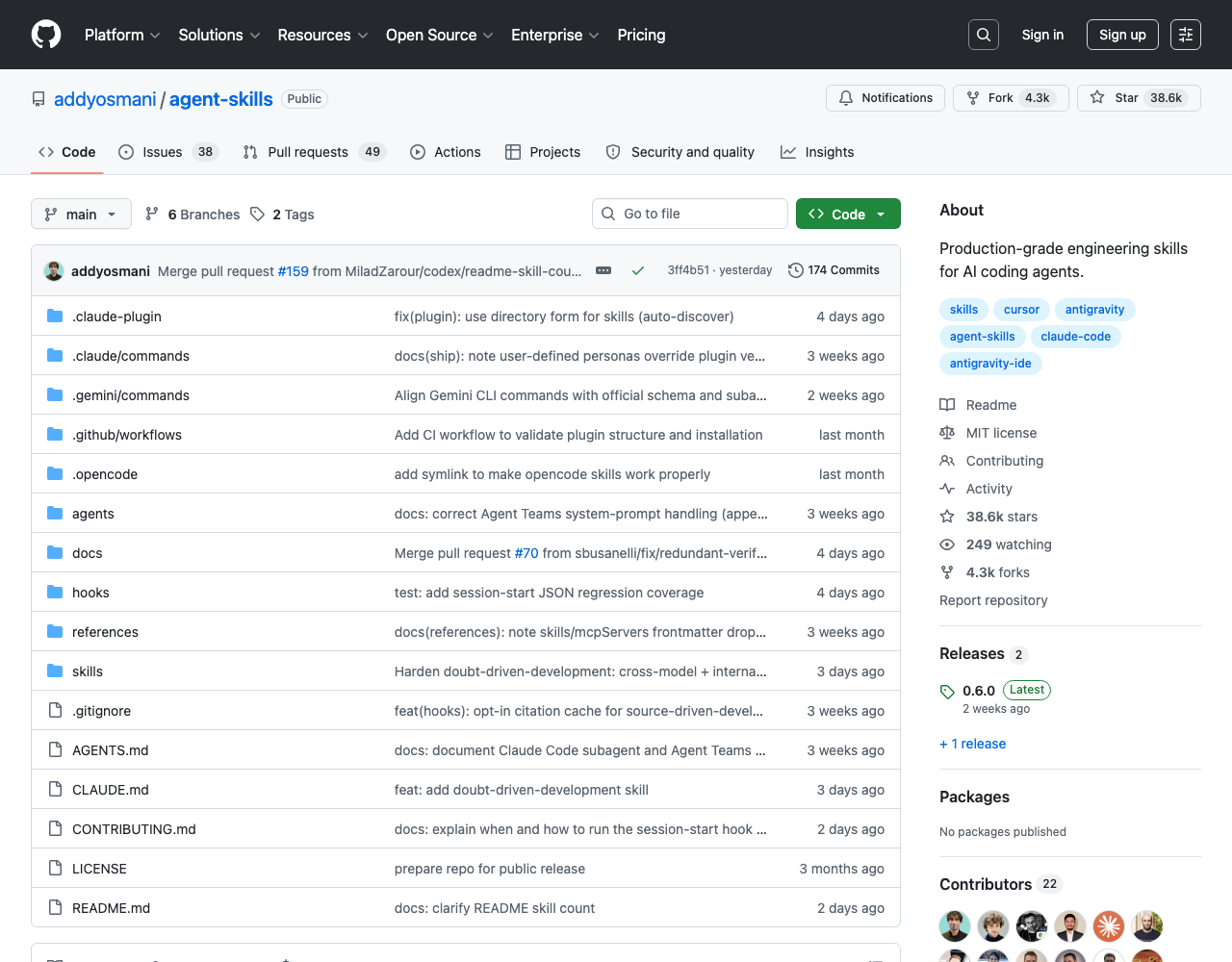

3. Curation-as-validation — Osmani’s pack outpacing the first-party

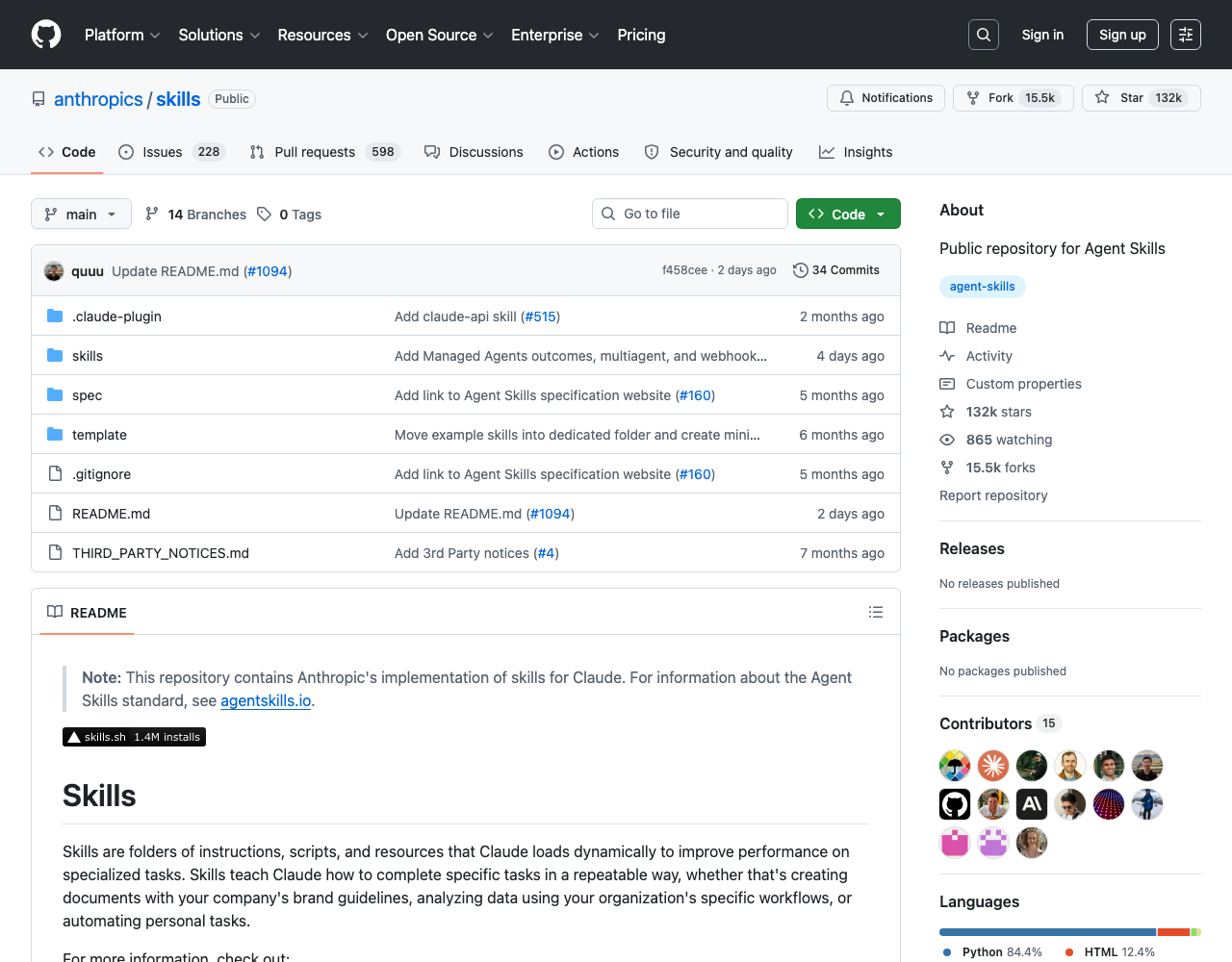

The third bucket is the subtlest. addyosmani/agent-skills — 22 skills total, 21 lifecycle + 1 meta-skill — sits at 37.1k stars and is gaining at +1,092/day per Trendshift, which puts it in the top 5 trending repos worldwide this week. Anthropic’s own anthropics/skills is at 131k stars but trending at “only” +502/day, despite being the canonical first-party repo for the surface.

Osmani’s pitch on his own blog is the production-grade thesis — encoding professional workflows, quality gates, and industry best practices directly into the operational logic of AI agents. The content is good, but the velocity story is what matters: community curation by a known-good editor is outpacing first-party docs as the trust signal. That’s curation-as-validation. The skill pack you install is the one whose curator you already trusted before skills existed.

The deeper read: when the trust signal is who curated this set, the skill ecosystem stops looking like NPM and starts looking like awesome-* lists circa 2015 — except awesome-* lists were never the production layer, and this one is. VoltAgent’s awesome-agent-skills collection (1,000+ skills, cross-harness — Claude Code, Codex, Gemini CLI, Cursor) is the meta-curation layer above Osmani’s. Two tiers of curation. The reader’s job is now to pick a curator, not a skill. That’s the same shift the JavaScript ecosystem went through in 2017–2019 when “which framework” became “which framework’s community do you trust.”

We’ve covered the curator-race directly on AgentConn before — see our mattpocock vs Composio skills directory race and the broader skills-directory race with codex/pi-mono comparisons. What this week adds is the second derivative: validators of the curators. react-doctor is, in this framing, a validator on Osmani — not on a specific React skill, but on the assumption that any of these skills produces good React. That’s a meta-layer most skill ecosystems don’t reach for years.

4. The HN signal — “skill spam” names the genre

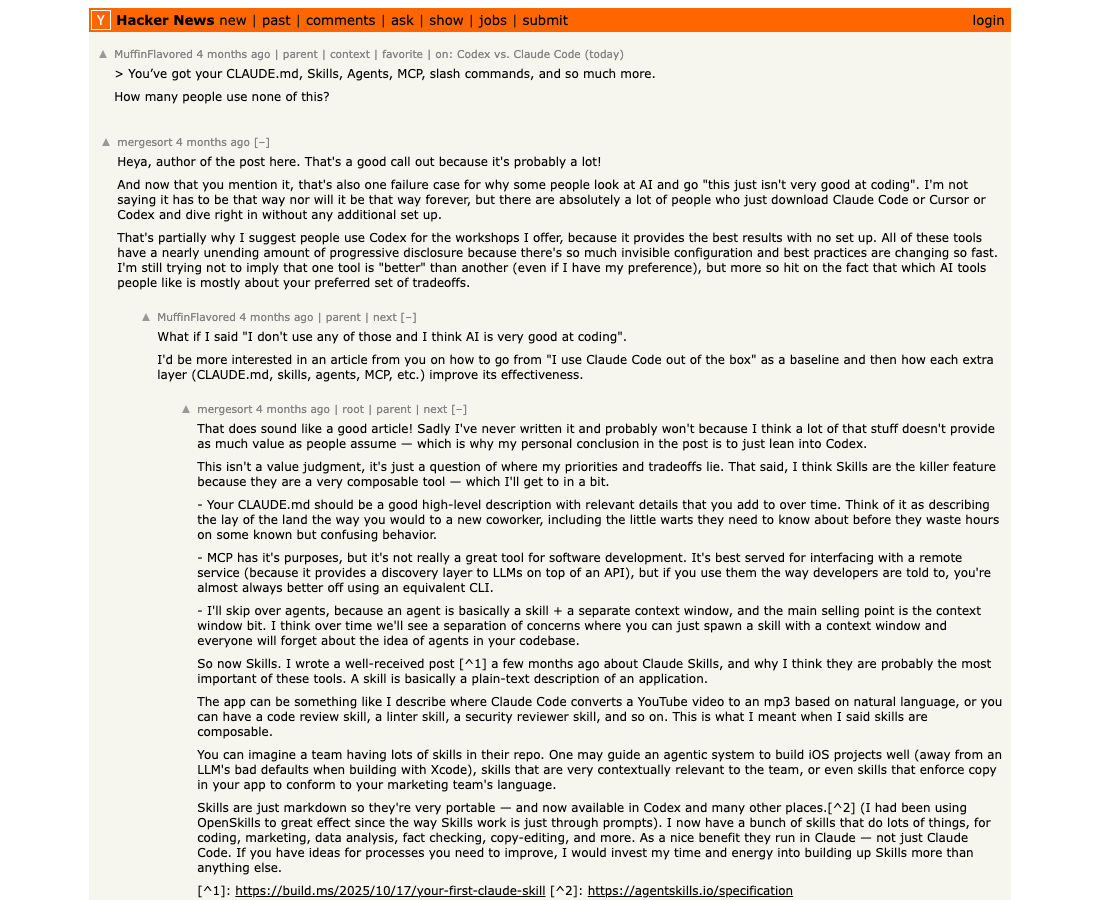

The most useful thing the HN thread did was name what people were complaining about. Once you have the word skill spam, you can talk about it. The earlier HN discussion — “You’ve got your CLAUDE.md, Skills, Agents, MCP, slash commands, and so much more…” — captured the same anxiety from a different angle: the count of abstractions is rising faster than the docs.

What’s notable is that the genre name landed before the validator wave was fully in place. That’s the normal shape — naming the failure mode (spam) creates demand for the fix (validators), and the market catches up. Right now we’re somewhere between week one and week two of that arc. By next month, “skill spam” will be a category label on directory sites, not a complaint.

How to ship a skill that survives the validator wave

Working backwards from the three buckets, here’s the practical takeaway for anyone shipping a skill pack right now:

- Ship a benchmark, not a claim. The agentmemory bar — paste the LongMemEval table into your README — is the new floor. If your skill claims it “improves code review” or “produces better test coverage,” the next-most-rational reader will ask: vs what, on what dataset, at what cost? If you can’t answer in numbers, you are spam by the agentmemory definition.

- Make output survivable by an external validator. Run your skill’s output through react-doctor (or the language-equivalent that will exist within 30 days). If your skill produces React, get a score. Add it to the README. The score doesn’t need to be perfect — it needs to be measured.

- Pick your curator early. If a known curator (Osmani, mattpocock, VoltAgent, etc.) won’t include your skill in their pack, no marketplace surface will save you. The curator layer is the new gating function. Submit a PR to one of the curation repos before you launch standalone.

- Cross-harness or die. Single-harness skills are now structurally narrower than the validator layer they depend on. agentmemory’s

/integrations/openclawdirectory is the model — ship for one harness, but build the abstraction so the second is a folder away. - Treat security as a skill primitive. The malicious-skills HN thread means every skill needs a security posture: signed manifests, sandboxed I/O, reviewable side-effects. The skills that survive will be the ones that ship a

THREAT-MODEL.mdalongside the README.

The biggest mistake right now is shipping a skill that’s just a clever prompt with no validator, no benchmark, and no integration story. There is a window — measured in weeks, not months — where that posture still gets stars. After that window closes, the directory sites will start applying the same filters by default, and your repo will be in the “skill spam” bucket regardless of what the prompt actually says.

The validator wave is the friendly reading of all this. The unfriendly reading is that most of what’s been shipped in the last 60 days won’t survive the filter. That’s fine — that’s how every infrastructure wave works. NPM had spam too. The interesting question isn’t whether the cleanup is coming; it’s which validator wins. Right now, react-doctor + agentmemory + Osmani’s pack are the three credible bets. Watch their star velocities. The ratio of validator-star-growth to skill-pack-star-growth is the real signal for where the ecosystem is heading next.

For broader context on how this ecosystem is monetizing, our prior coverage on Cursor’s skills-as-runtime — 12k LOC to 200 and Dexter vs Anthropic’s finance skills as an open-source buyer’s guide are the natural follow-ups. The shape they share — buyer’s-guide framing, benchmark-or-no-benchmark filter, curator-trust layer — is the shape the entire skill ecosystem is converging on.

Validators won. The only question is which.