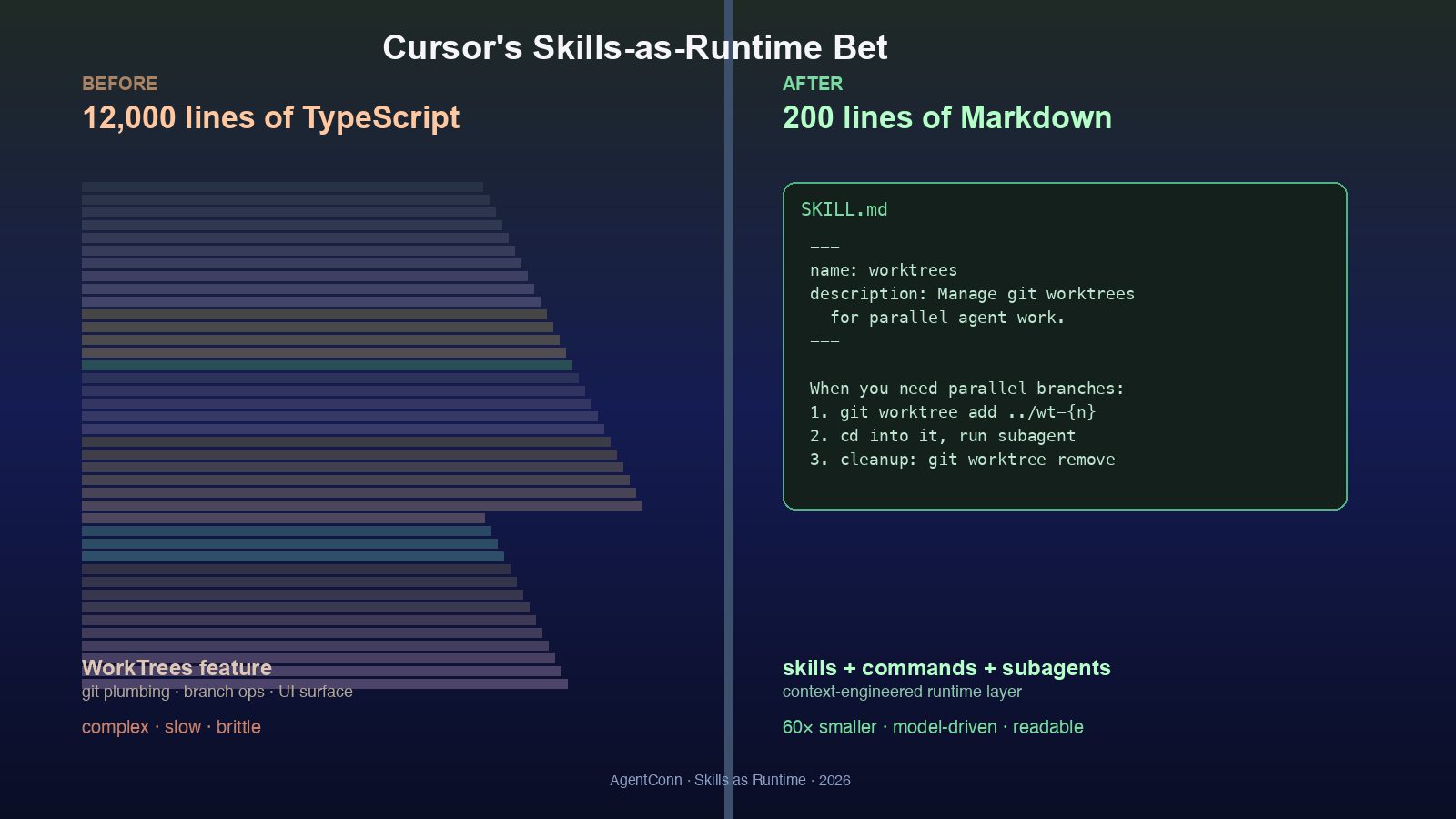

Cursor: 12,000 Lines of TypeScript → 200 Lines of Skill

David Gomes' AI Engineer talk turned Cursor's WorkTrees rewrite into the first production case study for Skills-as-Runtime. Here is what 200 lines actually replaced.

Yesterday at the AI Engineer summit, David Gomes from Cursor gave a 24-minute talk titled, with the kind of bluntness that only ships when the rewrite actually worked, “Replacing 12K LoC with a 200 LoC Skill.” The full session is on the AI Engineer YouTube channel. It is, as far as we can tell, the first canonical production case study for the thesis that has been animating GitHub trending for the last week: agent skills are not a novelty pattern. They are a production runtime layer, and they are starting to absorb code that used to live in the binary.

The talk is about Cursor’s WorkTrees feature — the parallel-branch UI introduced in Cursor 3 that lets a single agent spin up several branches at once and review the results side-by-side. The original WorkTrees implementation was, per Gomes, roughly twelve thousand lines of TypeScript: branch-management plumbing, a UI surface for switching between trees, lifecycle hooks for cleanup, scheduling logic for parallel agent runs, conflict detection, the whole thing. The replacement is a single SKILL.md of about two hundred lines, plus a handful of slash commands and a couple of subagents that the model invokes when it decides parallel work makes sense.

That’s a 60× reduction in code surface area for the same feature. And the more interesting claim — the one that makes this a category event rather than a Cursor-specific anecdote — is why the rewrite is possible at all. The talk argues that as agent harnesses mature (Claude Code’s skills runtime, Cursor’s own commands+subagents primitives, OpenClaw’s tool-orchestration layer), large amounts of feature logic that used to require imperative TypeScript can now be specified declaratively in markdown that the model itself executes. The runtime is no longer the binary. The runtime is the model + the harness + the skills directory.

This piece is the production-case-study read: what skills-as-runtime actually means at engineering scale, what the Cursor WorkTrees rewrite tells us about which features can be ported and which cannot, and how the current crop of Skills runtimes — mattpocock/skills, obra/superpowers, ComposioHQ/awesome-codex-skills, karpathy-skills — compare in light of what Cursor just demonstrated.

What “Skills as Runtime” Actually Means

The terminology has been shifting fast. Six months ago, most people writing about “skills” meant something like “a markdown file that tells the model how to do a task.” That definition is no longer load-bearing. The current production definition is closer to this:

A skill is a directory containing a SKILL.md file with YAML frontmatter (name, description), plus optional reference files and executable scripts. The harness loads skill metadata into the system prompt at startup. When a task matches the skill’s description, the harness loads the full SKILL.md body into context. When the body references additional files or scripts, the harness loads or executes those on demand.

That’s the production definition Anthropic published in October and updated when the standard went open in December. The three-tier disclosure model — metadata → body → references — is what makes “skills as runtime” actually work at scale: you can have hundreds of skills installed without filling the context window, because the skills only get loaded when the model reaches for them.
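The three tiers can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any harness's actual loader: the names `SkillMetadata`, `split_frontmatter`, `load_metadata`, `load_body`, and `load_reference` are invented for the example, and the frontmatter parser only understands flat `key: value` lines where a real harness would presumably use a full YAML parser.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass
class SkillMetadata:
    name: str
    description: str
    path: Path  # directory that holds SKILL.md


def split_frontmatter(text: str) -> tuple[dict[str, str], str]:
    """Split a SKILL.md into (frontmatter fields, body).

    Handles only flat `key: value` lines, which covers name/description.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text
    fields: dict[str, str] = {}
    for i, line in enumerate(lines[1:], start=1):
        if line.strip() == "---":
            return fields, "\n".join(lines[i + 1:]).strip()
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return {}, text  # no closing delimiter: treat the whole file as body


def load_metadata(skills_root: Path) -> list[SkillMetadata]:
    """Tier 1: read only name and description for every installed skill."""
    found = []
    for skill_file in sorted(skills_root.glob("*/SKILL.md")):
        fields, _ = split_frontmatter(skill_file.read_text(encoding="utf-8"))
        if "name" in fields and "description" in fields:
            found.append(
                SkillMetadata(fields["name"], fields["description"], skill_file.parent)
            )
    return found


def load_body(skill: SkillMetadata) -> str:
    """Tier 2: pull the full body into context once the skill is selected."""
    text = (skill.path / "SKILL.md").read_text(encoding="utf-8")
    _, body = split_frontmatter(text)
    return body


def load_reference(skill: SkillMetadata, relative_name: str) -> str:
    """Tier 3: read a reference file only when the body points at it."""
    return (skill.path / relative_name).read_text(encoding="utf-8")
```

The point of the split is that `load_metadata` touches every skill but returns only two short strings per skill, while `load_body` and `load_reference` run for the one skill the model actually selects.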

The shift the Cursor talk is naming is what happens after this primitive stabilizes. Once skills are a reliable production runtime — meaning the harness reads them, the model executes them, and the user can audit them as plain markdown — the scope of what they can replace expands rapidly. WorkTrees is the case study because it’s a real, shipped, complex feature, not a toy. And it cleanly answers two questions:

- What kinds of features port well to skills? Workflow-shaped features. Multi-step procedures with branching logic, where the steps are themselves model decisions. WorkTrees is “decide whether to parallelize → spawn N branches → run agents → collect results → reconcile.” Each step is a model judgment, not a fixed transform. That’s the ideal skill-shaped problem.

- What kinds don’t? Anything where the binary needs to do something the model can’t observe — kernel-level operations, latency-critical UI rendering, deterministic security boundaries. The 200-line WorkTrees skill still calls into Cursor’s git plumbing as scripts; it just doesn’t re-implement the orchestration in TypeScript any more.
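To make the "calls into git plumbing as scripts" half concrete, here is a hedged sketch of the kind of deterministic helper a skill might shell out to. It is not Cursor's script: the function names and the branch-name check are assumptions for illustration. The only real interfaces it relies on are the standard `git -C <dir>`, `git worktree add -b <branch> <path>`, and `git worktree remove <path>` commands.

```python
import re

# Conservative branch-name pattern (an assumption for this sketch): must start
# with an alphanumeric character, so a value like "--force" cannot be passed
# through as if it were a git option.
_BRANCH_RE = re.compile(r"^[A-Za-z0-9][A-Za-z0-9._/-]*$")


def worktree_add_command(repo_dir: str, worktree_dir: str, branch: str) -> list[str]:
    """Build the argv for creating a new worktree on a new branch."""
    if not _BRANCH_RE.match(branch) or ".." in branch:
        raise ValueError(f"refusing suspicious branch name: {branch!r}")
    return ["git", "-C", repo_dir, "worktree", "add", "-b", branch, worktree_dir]


def worktree_remove_command(repo_dir: str, worktree_dir: str) -> list[str]:
    """Build the argv for removing a worktree once its branch is done."""
    return ["git", "-C", repo_dir, "worktree", "remove", worktree_dir]
```

Each returned list can be handed to `subprocess.run(cmd, check=True)`. The division of labor is the one the bullets describe: the model decides whether and how many worktrees to create, and the deterministic part stays a small, auditable script.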

The 60× Compression Is Not Magic

It’s tempting to read “12K → 200 LoC” as a productivity headline. The more useful read is that 60× compression is what you get when you delete the work the runtime can do for you.

What was in the 12,000 lines? Inferring from public Cursor commits and the talk’s high-level walkthrough:

- State machines. Branch lifecycle: created → cloned → assigned to agent → working → ready-for-review → merged → cleaned up. In TypeScript, every state transition is explicit code with error handling. In a skill, the state machine is described, and the model handles the transitions inline by deciding the next step.

- Decision logic. “Should this task get parallelized?” The TypeScript version had heuristics — code complexity scores, file-touch-count thresholds, agent-load balancing. The skill version is one paragraph: if the task naturally splits into independent units, parallelize; otherwise don’t. The model is the heuristic.

- UI orchestration glue. Switching between worktrees in the side panel, reconciling results, threading user input back into the active branch. A lot of this becomes “the agent surfaces choices in chat and the user replies”; no UI code needed.

- Defensive error paths. Worktree cleanup on crash, partial-branch recovery, conflict-detection-then-rollback. Many of these become “the model notices something went wrong and asks.” That removes lines of code at the cost of accepting that some recoveries will be slower or less precise.
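For a sense of what "every state transition is explicit code" means, here is a small sketch of the branch lifecycle as an imperative state machine. It is written in Python for brevity rather than TypeScript, and it is not Cursor's code: the state names follow the lifecycle listed above, while the `ALLOWED` table, the `advance` helper, and the rule that any pre-merge state may abort straight to cleanup are assumptions made for the example.

```python
from enum import Enum


class BranchState(Enum):
    CREATED = "created"
    CLONED = "cloned"
    ASSIGNED = "assigned to agent"
    WORKING = "working"
    READY_FOR_REVIEW = "ready-for-review"
    MERGED = "merged"
    CLEANED_UP = "cleaned up"


class InvalidTransition(Exception):
    pass


HAPPY_PATH = [
    BranchState.CREATED,
    BranchState.CLONED,
    BranchState.ASSIGNED,
    BranchState.WORKING,
    BranchState.READY_FOR_REVIEW,
    BranchState.MERGED,
    BranchState.CLEANED_UP,
]

# Each state may advance to its successor on the happy path.
ALLOWED = {a: {b} for a, b in zip(HAPPY_PATH, HAPPY_PATH[1:])}
ALLOWED[BranchState.CLEANED_UP] = set()  # terminal state

# Assumption for this sketch: any state before MERGED may abort to cleanup.
for state in HAPPY_PATH[:5]:
    ALLOWED[state].add(BranchState.CLEANED_UP)


def advance(current: BranchState, target: BranchState) -> BranchState:
    """Return the new state, or raise if the transition is not permitted."""
    if target not in ALLOWED[current]:
        raise InvalidTransition(f"{current.value} -> {target.value}")
    return target
```

Even this toy version needs a transition table, an error type, and a guard function before any real work happens. In the skill version, the same lifecycle is a described sequence and the model picks the next step, which is where both the line savings and the loss of a hard guarantee come from.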

The trade — and Gomes is explicit about it — is predictability for compression. You give up the certainty that branch lifecycle X always invokes function Y. You gain the ability to ship a workflow that an engineer can read in five minutes and an end user can modify by editing markdown.

Why This Is the Cycle’s Most-Watched GitHub Lane

The Cursor talk dropped into a GitHub trending board where this shift was already visible. As of today’s GitHub digest, seven of the top fifteen trending repos are agent-skills/agent-harness projects, totaling +18,966 stars in 24 hours:

- warpdotdev/warp — +8,262 stars (day 2 of a sustained surge). The agentic-terminal thesis is now compounding.

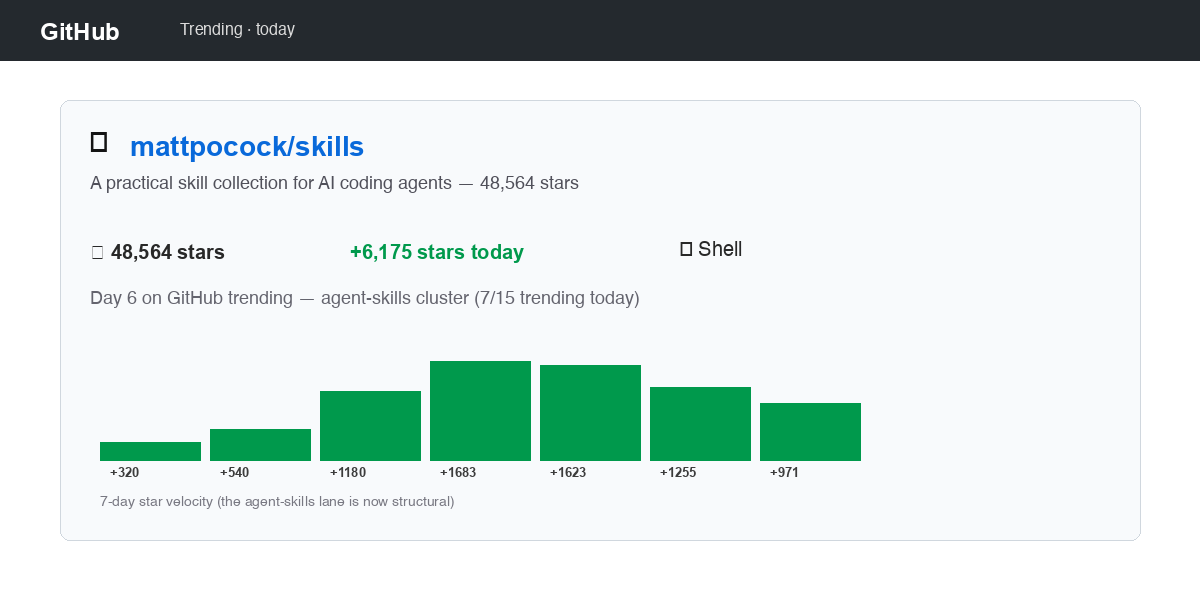

- mattpocock/skills — +6,175 stars (48,564 total). The personal-.claude/-as-public-asset pattern, Day 6 of trending.

- obra/superpowers — +1,623 stars. “Agentic skills framework & software development methodology that works.” 174K total base — the highest-base agent-skills repo on the board.

- farion1231/cc-switch — +971 stars. Cross-platform multi-runtime switcher — the operational layer that makes skills portable across Claude Code, Codex, OpenCode, OpenClaw, and Gemini CLI.

- jpicklyk/jcode — +670 stars. Rust-native coding agent.

- anomalyco/opencode — +654 stars. OSS coding agent (152K total).

- ComposioHQ/awesome-codex-skills — +611 stars. Day-5 trending. The Codex-side directory that pairs with mattpocock/skills on the Claude side.

This is no longer a viral cluster. It’s a category. And the Cursor talk is the first time someone has shown the cost-side proof of the thesis — not “look at how many skills exist” but “look at how much code we deleted because skills exist.”

Comparing Today’s Skills Runtimes

Cursor’s WorkTrees skill runs on Cursor’s harness — Cursor 3’s commands and subagents primitives. But the underlying primitive (a markdown file with frontmatter, loaded on demand by the model harness) is portable across every major coding-agent surface in 2026. We covered the skills directory race in detail last week. The Cursor talk reframes the comparison: which runtime is best positioned to absorb production code?

| Runtime | Primary directory | Strength | Best for |
|---|---|---|---|
| Claude Code | mattpocock/skills, obra/superpowers | Most mature SKILL.md spec; three-tier disclosure built in; native scripts execution | Production teams shipping skills as features |
| Cursor | Native .cursor/skills/ + slash commands + subagents | Tight integration with editor surface; subagent spawning is first-class | IDE-driven skills that touch UI |
| Codex | ComposioHQ/awesome-codex-skills, karpathy-skills | Largest Codex-native skill collection; sharp single-purpose primitives | OpenAI-stack teams |
| OpenCode / OpenClaw / cc-switch | Cross-runtime via cc-switch | Same skill works across all four major CLIs | Vendor-independent teams |
| Generic / framework-agnostic | obra/superpowers | Methodology + framework, not just a directory | Teams building their own internal skill discipline |

The portability layer matters here. cc-switch’s value proposition — covered in our cc-switch deep-dive — is precisely that the same SKILL.md can run under Claude Code on Monday and Codex on Tuesday with no changes. Cursor’s WorkTrees skill is technically Cursor-native today, but the Gomes talk explicitly notes that the skill body itself (“describe the parallel-branch workflow as a procedure”) is harness-agnostic; only the slash commands and subagent invocations are Cursor-specific.

This is what cross-runtime skills compatibility looks like in practice today. The runtime layer differentiates on UX, latency, and tool-execution semantics. The skill bodies converge on a portable spec.

The “So What” for Practitioners

If you’re building agent products in 2026, the WorkTrees rewrite has three concrete implications.

1. Audit your imperative orchestration code for skill-shaped opportunities. Anything that’s “model-driven decision branching, then call into deterministic primitives” is a candidate. State machines where the transitions are model judgments. Heuristics where the heuristic is itself a sentence (“if the task is naturally parallel”). UI flows where the model can ask the user instead of re-implementing the choice. Cursor’s 60× ratio is unusually clean — most rewrites won’t compress that hard — but a 5-10× compression on the right surface is realistic and shippable today.

2. Pick a runtime, then write portable skills. The runtime layer is contested (Claude Code vs Cursor vs OpenCode vs Codex), but the skill format itself is converging fast on Anthropic’s open standard. Writing your skills in a portable way — markdown body that doesn’t depend on a specific harness’s invocation syntax, scripts as separate executables — means you can move when the runtime competition shakes out. mattpocock/skills and obra/superpowers are useful reference repos here for what portable skills look like.

3. Measure compression as a first-class engineering metric. “How many lines of code do we delete by moving this to a skill” is the kind of metric that sounds soft until you watch a 60× ratio land in production. Code that doesn’t exist has zero bugs, zero CI cost, and zero migration burden. The Cursor team’s claim — that the rewrite is more maintainable, not less, despite handing more decisions to the model — is the bet that needs validation in your codebase, not theirs.
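The compression metric in the third point is simple enough to pin down in code. The sketch below is one possible definition, not a standard one: `count_loc` and `compression_ratio` are names invented here, and counting non-blank lines is a deliberately crude choice that you may want to replace with whatever size measure your team already tracks.

```python
def count_loc(source: str) -> int:
    """Count non-blank lines; a deliberately crude size measure."""
    return sum(1 for line in source.splitlines() if line.strip())


def compression_ratio(loc_before: int, loc_after: int) -> float:
    """Lines of imperative code removed per line of skill that replaces it."""
    if loc_before < 0 or loc_after <= 0:
        raise ValueError("loc_before must be >= 0 and loc_after must be > 0")
    return loc_before / loc_after


# The figures quoted from the talk: 12,000 lines replaced by about 200.
ratio = compression_ratio(12_000, 200)
```

With the talk's figures, `ratio` is 60.0. Tracking the same number per migrated feature gives you a way to compare your own rewrites against the 5-10× range suggested above.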

The Contrarian Read

Worth being explicit: skills-as-runtime is not free, and the bull case is being told much louder than the bear case right now.

The bear case has three legs. First, non-determinism shifts to runtime. A 200-line skill replaces 12,000 lines of TypeScript by trusting the model to make the right call. When the model gets it wrong, you don’t get a stack trace pointing at a specific function — you get a chat transcript. Debugging shifts from reading code to reading model behavior. Teams that don’t have strong eval pipelines are going to discover this the hard way.

Second, the cost curve favors the labs. Every skill execution is tokens. Cursor’s 60× code reduction shifts cost from CPU (TypeScript runtime) to inference (model executing the skill). At today’s prices, that trade is favorable for most workloads. At GPT-5.5-Pro or Opus 4.7 prices, less obviously so — and the labs have demonstrated they’re willing to change pricing on a moment’s notice.

Third, the audit surface gets weirder. Markdown skills are easier to read than TypeScript, but they’re harder to prove things about. A type-checked function has a contract. A skill body has a description and a hope. For features where regulatory or security guarantees matter, the imperative version isn’t going away — and the WorkTrees example carefully avoids security-critical surfaces.
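On the first leg, the smallest useful eval is a repeatability check: ask for the same decision several times and see how often the answers agree. The sketch below assumes a hypothetical `decide` callable that wraps whatever model call your harness makes and returns a short label such as "parallelize" or "serial"; the function name `decision_agreement` and the default run count are also assumptions.

```python
from collections import Counter
from typing import Callable


def decision_agreement(
    decide: Callable[[str], str], task: str, runs: int = 10
) -> tuple[str, float]:
    """Call `decide` repeatedly on one task.

    Returns the most common decision and the share of runs that agreed with it.
    """
    if runs <= 0:
        raise ValueError("runs must be positive")
    counts = Counter(decide(task) for _ in range(runs))
    decision, hits = counts.most_common(1)[0]
    return decision, hits / runs
```

A share well below 1.0 on a task you consider unambiguous is a signal that the skill's wording is underspecified. It is only a starting point, since agreement says nothing about whether the majority answer is the right one.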

None of this invalidates the thesis. It just sharpens the question: which features in your product are skill-shaped, and which need to stay in the binary? The Cursor talk doesn’t claim everything should become a skill. It claims a specific 12,000-line feature should — and demonstrates the rewrite. The right question isn’t “should we adopt skills?” It’s “where is our 12K-LoC WorkTrees, and have we noticed yet?”

Further Reading on AgentConn

For more context on the broader skills ecosystem and the agents this thesis affects:

- Skills Directories Compared: mattpocock vs Codex vs pi-mono — the directory-side analysis

- obra/superpowers — Agentic Skills Framework Guide — the methodology layer

- cc-switch agent profile — the cross-runtime portability layer

- opencode agent profile — the OSS runtime that pairs with cc-switch