Vectorless RAG: PageIndex vs Embedding RAG Decision Guide

When to switch agent retrieval from embeddings to PageIndex's vectorless tree search — and when not to. The honest 2026 read.

On May 7, 2026, VectifyAI/PageIndex was the #4 trending repo on GitHub, picking up another 953 stars in a single day. The repository description is six words long and explains the entire bet:

Document Index for Vectorless, Reasoning-based RAG.

That sentence is doing a lot of work. Vectorless — no embedding model. Reasoning-based — the LLM picks where to look, instead of cosine similarity. Document Index — not a vector store. Each of those is a load-bearing rejection of the standard 2024 RAG playbook, and PageIndex isn’t alone: there’s a Microsoft Tech Community piece arguing the same case, a DigitalOcean tutorial titled “Beyond Vector Databases”, and LlamaIndex’s own April 2026 essay declaring “RAG is dead, long live agentic retrieval.” The framing has shifted under the industry’s feet.

For agent operators who hit the retrieval-quality wall — and almost everyone running production agents has, going by Simon Willison’s “Vibe coding and agentic engineering are getting closer than I’d like” — the question isn’t whether vectorless RAG is real. It’s whether to switch your agent’s retrieval architecture, and when. This guide is a decision framework, not a benchmark blowout: PageIndex on one side, embedding-based RAG (LlamaIndex, LangChain, the standard chunked-and-vectored stack) on the other, and a clear pattern for which workload picks which.

HN discussion: harness, not model, drives agent quality →

What “vectorless” actually means

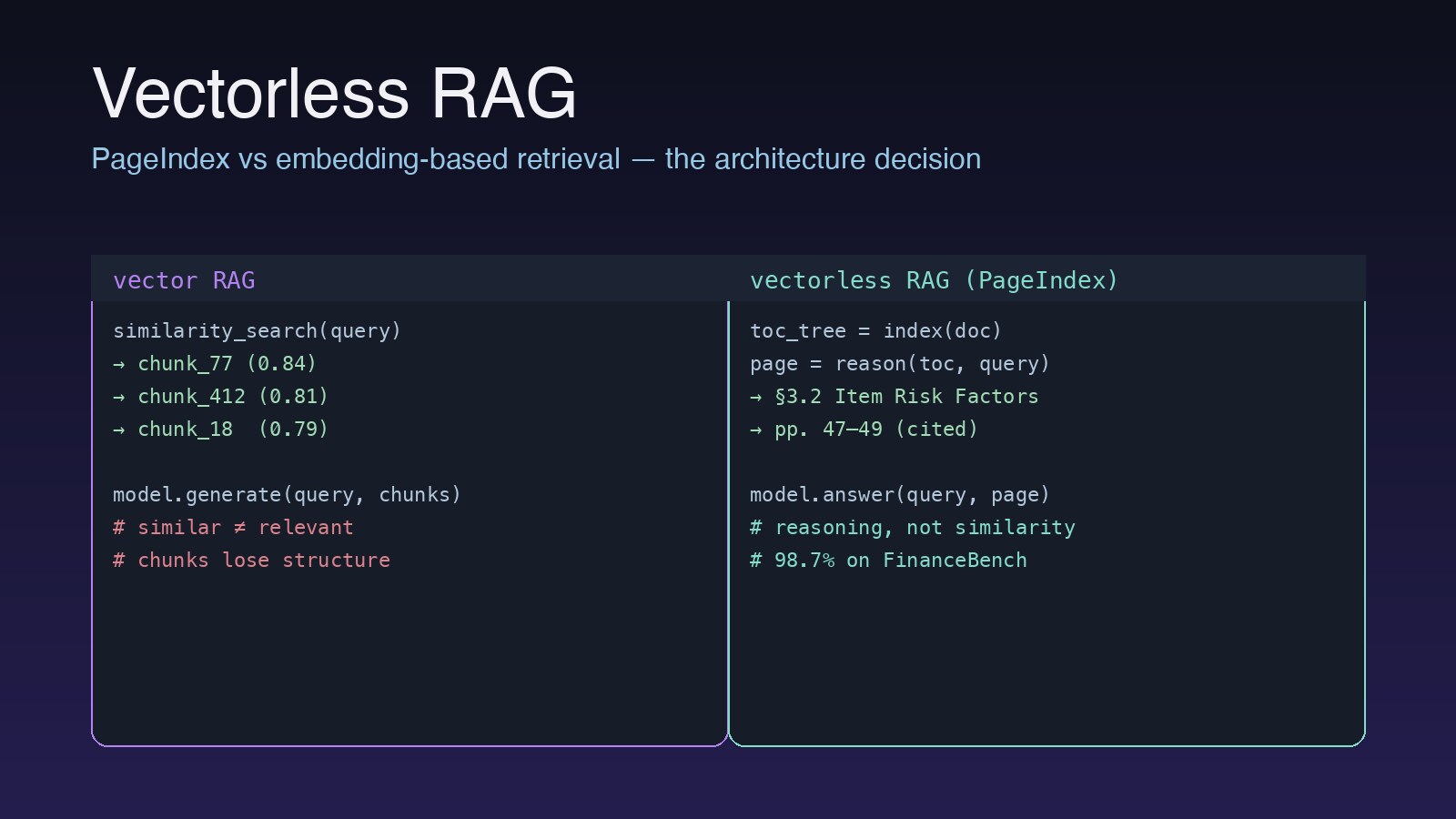

In 2024, “RAG” meant a specific recipe: chunk a document into ~500-token windows, embed each chunk with text-embedding-3-small or similar, store the vectors in Pinecone or pgvector or Chroma, run cosine similarity at query time, and stuff the top-k results into the LLM’s context. That recipe is what PageIndex and the broader “vectorless” movement are pushing back against — and the pushback is structural, not just performance-oriented.

PageIndex’s README and quickstart describe a two-stage pipeline that looks nothing like that:

- Index time: the LLM reads the document end-to-end and emits a table-of-contents tree — a hierarchical structure mirroring how a human expert would skim. Each node carries an optional summary, a page range, and pointers to children. There are no chunks, no embeddings, no vectors.

- Query time: the LLM does tree search. Given the question, it walks the ToC tree, expanding promising branches, ignoring irrelevant ones, and ultimately returning the specific section(s) that contain the answer. The retrieval is reasoning, not similarity.

The headline number from the project’s own introduction post is 98.7% accuracy on FinanceBench for a system called Mafin 2.5 powered by PageIndex — a benchmark where vector-based RAG systems typically land in the 70–85% range. The honest note: that benchmark is structured (long financial filings with explicit hierarchy), and PageIndex’s advantage compresses on less-structured corpora.

The brownfield-reckoning context

You can’t read PageIndex’s trajectory without reading the broader 2026 mood it’s emerging into. Today’s #1 HN story is Simon Willison’s “Vibe coding and agentic engineering are getting closer than I’d like” (722 points, 814 comments). The thesis: as agentic systems get better at generating code, the bottleneck shifts from generation to operating the resulting system. Most of those 814 comments are operators describing the same pain — agents that confidently retrieve the wrong context, then write code against it.

Naval on the demand-side dynamic →

This is the demand-side context that lifts vectorless RAG out of “interesting research” and into “real architectural decision.” In a greenfield project, top-k similarity over chunks is fine — your codebase is small, your docs are short, the failure modes are tolerable. In a brownfield project — the legacy monorepo with 200K-page internal docs and 15 years of Confluence pages — top-k similarity routinely returns the similar but wrong chunk: an older version of the same policy, a deprecated API, a discussion of the feature without the spec. PageIndex’s tree-search retrieval is specifically designed to handle that ambiguity, because reasoning over structure is exactly the operation an expert performs in that situation.

The same dynamic shows up in agent-harness discourse: Archon at 17.9K stars, agent-skills at 3K+ stars per day, cc-switch’s multi-agent panel. The pattern is operators reaching for more structured primitives across the entire stack, not just retrieval. PageIndex is the retrieval-layer instance of that pattern.

What you give up

The honest comparison requires stating the costs of going vectorless, because there are real ones.

1. Latency at scale. Tree search is sequential — each step is an LLM call deciding which branch to expand. For a 500-page document, you might pay 5–15 LLM calls at retrieval time vs one cosine similarity over a pre-computed index. PageIndex caches aggressively and the pageindex-mcp server helps, but the floor is still higher than vector lookup.

2. Multi-document degradation. A March 2026 Medium analysis from Vignesh Rajavelu and a parallel Towards Data Science piece on Proxy-Pointer RAG both surface the same finding: vectorless approaches perform best on single structured documents, while vector retrieval scales more reliably across noisy multi-document corpora. The published comparisons show vector RAG with ~+40% coverage improvements on multi-document tasks. If your agent retrieves across 50K heterogeneous PDFs, embedding-based retrieval still wins.

3. Production maturity. This is the one buyers most often miss. Datastreams’ Medium piece calls PageIndex “a promising paradigm shift…that isn’t quite ready for production”: no test coverage, beta status, no enterprise security features. That doesn’t make the idea wrong; it makes the open-source PageIndex implementation a research-grade dependency. The VectifyAI hosted cloud service at pageindex.ai is the production answer, with on-prem options for enterprise — but that’s now a vendor decision, not a “drop in an open-source library” decision.

4. Tooling integration. Your existing observability, evaluation, and orchestration stacks are built around the vector pipeline. Ragas integrates with LlamaIndex agents out of the box. Switching retrieval to PageIndex means writing eval harnesses that understand tree-search traces, not retrieval scores — that’s a real engineering cost.

HN: agent harness as a category →

The decision framework

Strip the framing and pick by workload. We’ve seen this break down cleanly along three axes:

| Workload axis | Pick PageIndex / vectorless | Pick embedding RAG |

|---|---|---|

| Document count | Few (1–100) long structured docs | Many (1K+) heterogeneous docs |

| Document structure | Strong hierarchy (TOC, sections, regs) | Flat or unstructured (chats, notes, web) |

| Query type | Multi-hop reasoning, in-document references | Single-hop factual lookup |

| Latency budget | Seconds-OK, accuracy-critical | Sub-second required |

| Citation needs | Page/section auditing required | Best-effort attribution |

| Domain | Finance, legal, regulatory, medical | Customer support, search, generic Q&A |

The decision becomes obvious once you frame it: vectorless RAG wins where the document already has the answer in a specific place and the work is finding it. Embedding RAG wins where similarity-over-many-chunks is the actual operation — semantic search, retrieval-augmented summarization, generic Q&A.

For most agent builders, the practical answer is both. Run vector retrieval as the high-recall first pass across your corpus, then run PageIndex on the document(s) it surfaces for the answer-extraction step. Your retrieval pipeline becomes hierarchical: vector → document candidates → tree search → cited section. This is roughly what LlamaIndex now calls “agentic retrieval”, and it’s why “RAG is dead” is the wrong framing — vector RAG is becoming the first stage of a longer pipeline, not the whole pipeline.

Implementation note: the harness fit

PageIndex slots into the agent stack at a specific layer. From the official cookbook, the canonical usage looks like this:

from pageindex import PageIndex

index = PageIndex.from_pdf("10K-2025.pdf") # builds the ToC tree once

result = index.query(

"What was Item 1A risk factor coverage on cybersecurity in 2024 vs 2025?",

model="gpt-5",

)

print(result.cited_pages) # e.g. [47, 48, 49]For agents, the pageindex-mcp server is the path of least resistance — it exposes PageIndex as an MCP tool, which means Claude Code, Cursor SDK harnesses, openclaw, and any of the substrates we covered in Cursor SDK vs Browserbase Skills vs OpenAI Apps SDK can call it the same way they call any other tool. No SDK rewrite, no architecture overhaul.

The Anthropic Skills pattern works particularly well here: wrap the PageIndex query as a skill, expose it to the agent harness, let the agent decide when to reach for it vs the cheap embedding fallback. We covered the broader Skills-as-runtime pattern in our Archon harness review — same idea, applied to retrieval.

HN Show HN: agent-skills curated bundle →

What we’d watch next

Two things will determine whether vectorless RAG sticks beyond the current trending-list moment:

1. The benchmark surface. FinanceBench is a great showcase but a single benchmark. Microsoft’s Vectorless RAG piece is a useful broadening — Azure-side validation that the pattern isn’t just a VectifyAI marketing hook — but the field needs a multi-document, multi-domain benchmark where vectorless approaches win on average, not just on hand-picked structured corpora.

2. The cost economics. Tree search costs more LLM calls per query. With Claude Sonnet pricing, that’s expensive; with DeepSeek V4 Flash at $0.14/M input tokens, it’s nothing. The vectorless RAG pattern and the cheap-frontier-model pattern are mutually reinforcing — the cheaper inference gets, the more the “burn an extra LLM call for better retrieval” tradeoff pays off. The fact that VectifyAI documents DeepSeek V4 as a supported model is not coincidence.

The honest read is that PageIndex is one of the most interesting retrieval primitives shipped this year, and the vectorless framing is going to outlast the specific implementation. If you’re building agents that retrieve from long structured documents — finance, legal, regulatory, medical, internal-policy — start the proof-of-concept this week. If you’re building generic semantic search, the embedding stack is still the answer, and you can ignore the discourse without losing anything.

The decision is workload-bound. Most architectural decisions are.