Archon Review: Open-Source AI Coding Harness Builder

Archon makes AI coding agents deterministic with YAML workflows. 17K stars, +452 today. Is 'harness engineering' a real category — or just retry logic?

Archon Review: The Open-Source Harness Builder That Makes AI Coding Agents Deterministic

On April 14, 2026, two of Hacker News’s top three AI items tackled the same underlying problem from different angles. A 93-point thread reframed multi-agent software development as a distributed systems problem — complete with verification gates, failure modes, and the need for consensus protocols between agents. Earlier in the week, a front-page post documented a striking result: the same LLM, wrapped in a structured harness, jumped from a 6.7% PR acceptance rate to nearly 70%. The only variable was the harness.

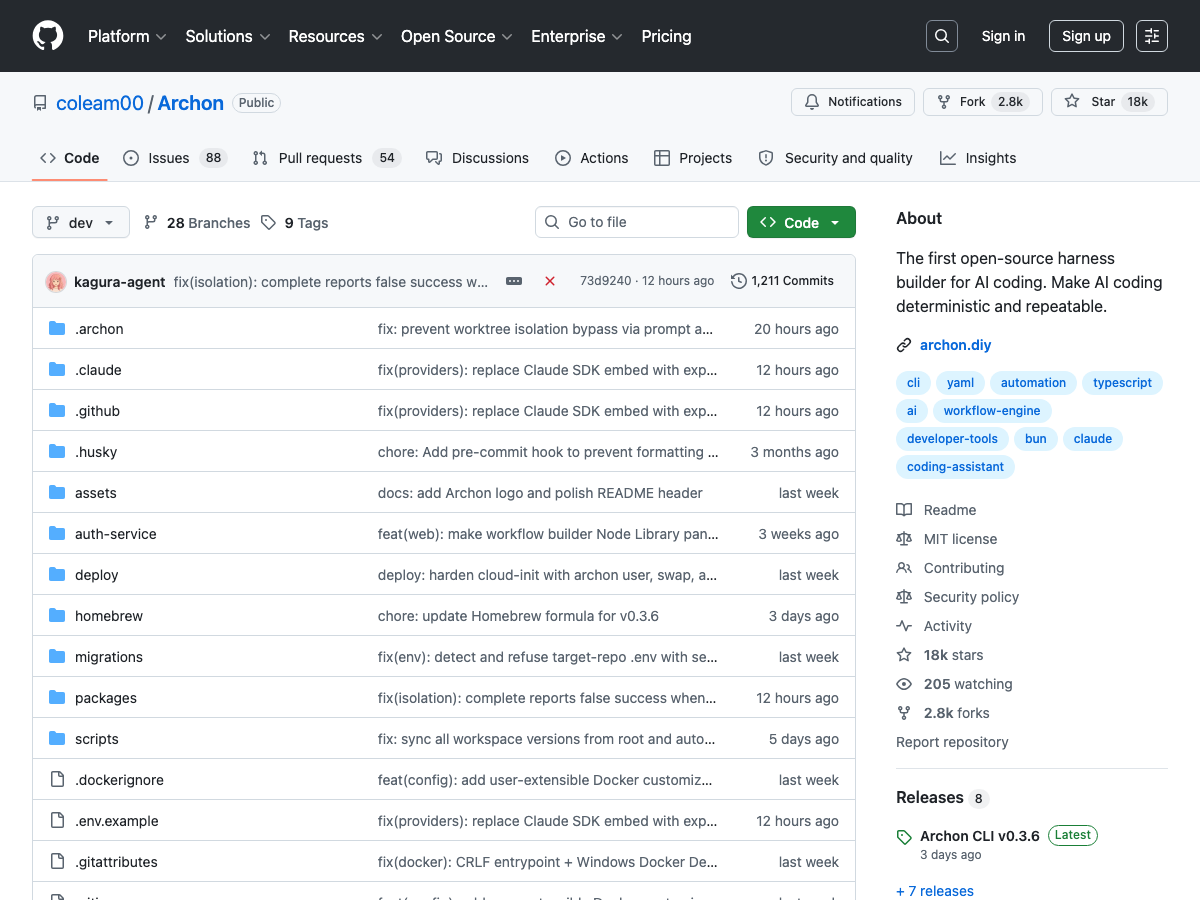

coleam00/Archon sits at the center of both conversations. With 17,906 GitHub stars (+452 today), it’s the most prominent open-source answer to the question: can you make AI coding agents deterministic?

This review covers what Archon actually does after its April 2026 complete rewrite, the architecture behind the “deterministic” claim, the honest read on the PR acceptance data, how it compares to multica and hermes-agent, and who should actually use it.

The Problem Archon Is Solving (and Why HN Noticed Today)

The HN reframing matters because it’s accurate. When you run multiple AI coding agents on a complex project, you’re not running solo tools — you’re running a distributed system. Tasks have dependencies. Failures in one stage cascade to others. You need verification between steps, not just at the end. You need idempotency: the same input should produce consistent behavior across runs.

That’s not how raw LLM-based agents work. Ask Claude Code to “fix this bug” and the execution varies every time. It might skip planning. It might skip tests. It might write a PR description that ignores your team’s template. Each run is shaped by the model’s sampling behavior — which is inherently non-deterministic.

Archon’s design premise: the AI is not the problem. The missing ingredient is structure that enforces consistent behavior before, between, and after AI execution. A harness.

What’s a harness? A system that wraps around a coding agent, automating the entire development lifecycle. It defines deterministic steps where precision matters, integrates AI-driven steps for complex reasoning, and includes verification loops that run until quality gates pass.
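The verification-loop part of that definition fits in a few lines. This is a minimal TypeScript sketch under stated assumptions, not Archon's code. `runUntilGatePasses`, `AiStep`, and `Gate` are hypothetical names. The AI step is a stand-in function, and the gate represents a deterministic check such as a test run.

```typescript
// Hypothetical sketch of a harness verification loop (not Archon's implementation).
// The AI step is non-deterministic; the gate is a deterministic check such as a test run.
type AiStep = (feedback: string | null) => string;
type Gate = (output: string) => { ok: boolean; message: string };

interface LoopOutcome {
  passed: boolean;
  attempts: number;
  lastOutput: string;
}

function runUntilGatePasses(ai: AiStep, gate: Gate, maxAttempts: number): LoopOutcome {
  let feedback: string | null = null;
  let lastOutput = "";
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    lastOutput = ai(feedback); // AI-driven step
    const check = gate(lastOutput); // deterministic quality gate
    if (check.ok) {
      return { passed: true, attempts: attempt, lastOutput };
    }
    feedback = check.message; // feed the failure back into the next attempt
  }
  // Budget exhausted: the harness reports failure instead of looping forever.
  return { passed: false, attempts: maxAttempts, lastOutput };
}

// Usage with stand-ins: the fake "AI" produces a passing patch on its third try.
let aiCalls = 0;
const fakeAi: AiStep = () => (++aiCalls >= 3 ? "patch-ok" : "patch-broken");
const fakeTests: Gate = (out) =>
  out === "patch-ok" ? { ok: true, message: "" } : { ok: false, message: "1 test failed" };

const outcome = runUntilGatePasses(fakeAi, fakeTests, 5);
console.log(outcome.passed, outcome.attempts); // true 3
```

The cap on attempts is the design choice that matters. Without it, a loop over a non-deterministic step has no guaranteed termination.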

The PR acceptance rate claim makes this concrete. Studies cited by Archon’s community show raw LLM code achieves a 6.7% PR acceptance rate in production codebases. With a structured harness: nearly 70%. Same model. Different wrapper.

What Archon Actually Is (After the April 2026 Rewrite)

Understanding Archon requires knowing what it was versus what it is now.

The original Archon (v1) was a Python-based task management system with RAG capabilities — an agent that could help build other Pydantic AI and LangGraph agents by querying a knowledge base about those frameworks. Useful, but narrow in scope.

On April 7, 2026, Cole Medin (coleam00) announced a complete rewrite: the Python codebase was archived, and Archon emerged as a TypeScript workflow engine for AI coding agents. The framing shifted from “agent that builds agents” to “harness that makes agents reliable.”

The new Archon describes itself by analogy: what Dockerfiles did for infrastructure and GitHub Actions did for CI/CD, Archon does for AI coding workflows.

That analogy is well-chosen. Dockerfiles encode deployment environments as version-controlled, reproducible files. GitHub Actions encode CI/CD pipelines as YAML. Archon encodes coding workflows — plan, implement, test, review, PR — as YAML that runs consistently across every project and every developer.

Architecture Deep Dive: The DAG Model

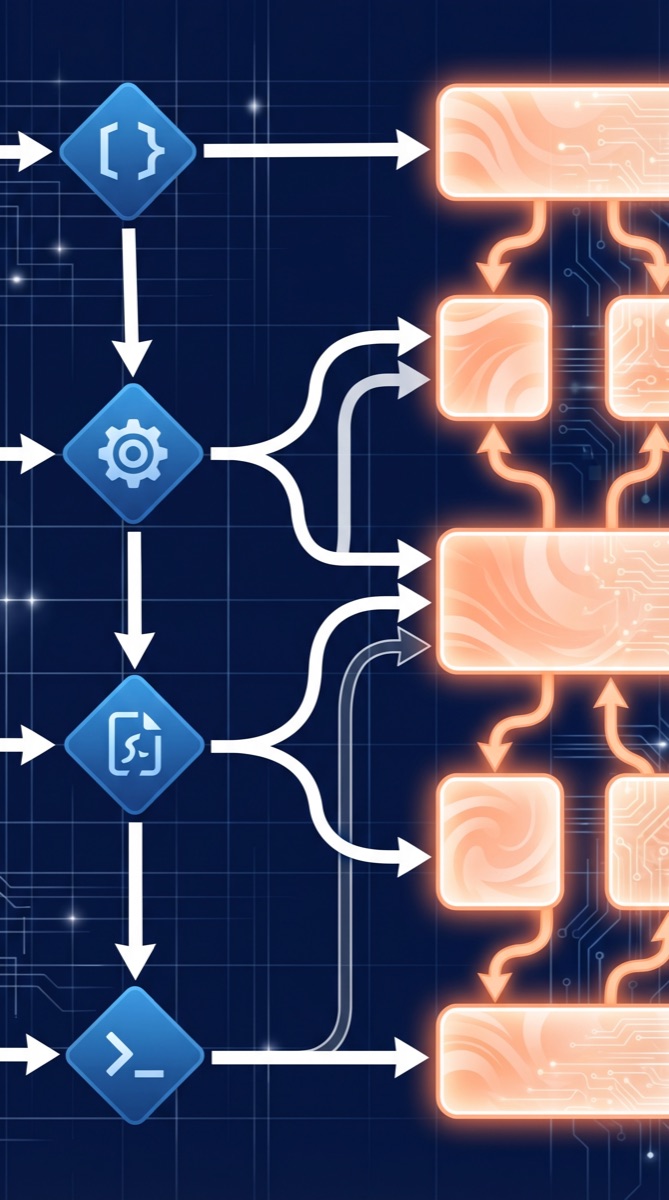

Archon’s core abstraction is the workflow — a YAML-defined directed acyclic graph (DAG) that mixes four types of nodes:

1. AI nodes: Prompt-based tasks that invoke a coding agent or model, such as Claude Code or Codex. type: ai, with a prompt field. This is where intelligence enters the workflow.

2. Bash nodes: Deterministic shell commands — running tests, linting, git operations. type: bash, with a command field. These nodes always behave identically given the same environment.

3. Loop nodes: Iteration logic with termination conditions. Useful for “run tests until passing” or “retry implementation until review passes.”

4. Dependency nodes: strictly speaking not a separate node type, but depends_on fields on the other nodes that enforce execution ordering. Like needs in GitHub Actions, they ensure you can’t run the review stage until implementation succeeds.
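To make the dependency rules concrete, here is a hypothetical TypeScript model of the node kinds above. It includes a function that derives a valid execution order from `depends_on` using Kahn's topological sort. The loop-node field names (`body`, `until`, `max_iterations`) are assumptions, and Archon's real schema and scheduler may differ. The point is that a DAG yields a checkable order and rejects cycles before anything runs. The usage models a five-node plan, implement, test, review, pr pipeline.

```typescript
// Hypothetical model of the node kinds described above; Archon's real schema may differ.
type NodeSpec =
  | { type: "ai"; prompt: string; depends_on?: string[] }
  | { type: "bash"; command: string; depends_on?: string[] }
  // Loop field names are assumptions: a body node, a stop condition, and a hard cap.
  | { type: "loop"; body: string; until: string; max_iterations: number; depends_on?: string[] };

interface Workflow {
  name: string;
  nodes: Record<string, NodeSpec>;
}

// Kahn's algorithm: returns an order that respects every depends_on edge,
// or throws on an unknown dependency or a cycle (which a DAG must not have).
function executionOrder(wf: Workflow): string[] {
  const indegree = new Map<string, number>();
  const dependents = new Map<string, string[]>();
  for (const id of Object.keys(wf.nodes)) {
    indegree.set(id, 0);
    dependents.set(id, []);
  }
  for (const [id, node] of Object.entries(wf.nodes)) {
    for (const dep of node.depends_on ?? []) {
      const downstream = dependents.get(dep);
      if (downstream === undefined) throw new Error(`${id} depends on unknown node ${dep}`);
      downstream.push(id);
      indegree.set(id, (indegree.get(id) ?? 0) + 1);
    }
  }
  // Start with every node that has no unmet dependencies.
  const ready = Array.from(indegree.entries())
    .filter(([, d]) => d === 0)
    .map(([id]) => id);
  const order: string[] = [];
  while (ready.length > 0) {
    const id = ready.shift() as string;
    order.push(id);
    for (const next of dependents.get(id) ?? []) {
      const remaining = (indegree.get(next) ?? 0) - 1;
      indegree.set(next, remaining);
      if (remaining === 0) ready.push(next);
    }
  }
  // Any node never reached is stuck behind a cycle.
  if (order.length !== indegree.size) throw new Error(`workflow ${wf.name} contains a cycle`);
  return order;
}

const bugFix: Workflow = {
  name: "bug-fix",
  nodes: {
    plan: { type: "ai", prompt: "Analyze the bug report and create a fix plan" },
    implement: { type: "ai", prompt: "Implement the fix according to the plan", depends_on: ["plan"] },
    test: { type: "bash", command: "npm test", depends_on: ["implement"] },
    review: { type: "ai", prompt: "Review the implementation against the bug report", depends_on: ["test"] },
    pr: { type: "bash", command: "gh pr create --title 'Fix: {bug_id}' --body 'Automated bug fix'", depends_on: ["review"] },
  },
};

console.log(executionOrder(bugFix).join(" -> ")); // plan -> implement -> test -> review -> pr
```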

A minimal workflow definition looks like this:

```yaml
name: bug-fix
nodes:
  plan:
    type: ai
    prompt: "Analyze the bug report and create a fix plan"
  implement:
    type: ai
    prompt: "Implement the fix according to the plan"
    depends_on: [plan]
  test:
    type: bash
    command: "npm test"
    depends_on: [implement]
  review:
    type: ai
    prompt: "Review the implementation against the bug report"
    depends_on: [test]
  pr:
    type: bash
    # gh pr create needs --body (or --fill) when it runs non-interactively
    command: "gh pr create --title 'Fix: {bug_id}' --body 'Automated fix for {bug_id}'"
    depends_on: [review]
```

Isolation via git worktrees: Each workflow run gets its own git worktree, enabling parallel execution without branch conflicts. This is what lets you run five agents on five bugs simultaneously without them stepping on each other, a key distributed systems property.
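Per-run isolation can be illustrated without touching a real repository. The sketch below assumes a hypothetical naming scheme: `archon/<runId>` branches under a `../worktrees` directory. It only builds the `git worktree add` and `git worktree remove` argument lists. How Archon actually names and cleans up its worktrees is not covered in this review.

```typescript
// Hypothetical sketch: plan one isolated git worktree (and branch) per workflow run.
// It only builds argument lists; a real runner would hand them to a process spawner.
interface WorktreePlan {
  runId: string;
  branch: string;
  path: string;
  addArgs: string[];
  removeArgs: string[];
}

function planWorktree(runId: string, baseRef: string, root: string): WorktreePlan {
  const branch = `archon/${runId}`; // hypothetical branch naming scheme
  const path = `${root}/${runId}`; // one checkout directory per run
  return {
    runId,
    branch,
    path,
    // git worktree add -b <new-branch> <path> <commit-ish>
    addArgs: ["git", "worktree", "add", "-b", branch, path, baseRef],
    // git worktree remove <path>, run once the workflow finishes
    removeArgs: ["git", "worktree", "remove", path],
  };
}

// Three bugs, three agents, three independent checkouts created from main.
const plans = ["bug-101", "bug-102", "bug-103"].map((id) => planWorktree(id, "main", "../worktrees"));
for (const p of plans) console.log(p.addArgs.join(" "));
// first line: git worktree add -b archon/bug-101 ../worktrees/bug-101 main
```

Worktrees share the repository's object database and refs, but each has its own working directory and checked-out branch. That separation is why concurrent agents don't overwrite each other's files.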

Platform adapters: Archon exposes the same workflows through a web dashboard, CLI, Telegram (5-min setup), Slack (15-min), and GitHub webhooks. The workflow is defined once and runs everywhere.
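“Defined once, runs everywhere” suggests an adapter layer whose only job is translation. Here is a minimal sketch that assumes hypothetical `RunRequest` and `PlatformAdapter` types and simplified event shapes. These are not the real Telegram, Slack, or GitHub payloads, and not Archon's adapter API.

```typescript
// Hypothetical sketch of "define once, run everywhere": adapters only translate
// platform events into one common run request; the workflow itself never changes.
interface RunRequest {
  workflow: string;
  params: Record<string, string>;
  source: string;
}

interface PlatformAdapter<E> {
  name: string;
  // Returns null when the event is not a workflow trigger.
  toRunRequest(event: E): RunRequest | null;
}

// Simplified, illustrative event shapes (not real platform payloads).
type CliArgs = string[];
interface IssueLabeled {
  action: string;
  label: string;
  issueNumber: number;
}

const cliAdapter: PlatformAdapter<CliArgs> = {
  name: "cli",
  // e.g. `run bug-fix 42`
  toRunRequest: (argv) =>
    argv.length === 3 && argv[0] === "run"
      ? { workflow: argv[1], params: { bug_id: argv[2] }, source: "cli" }
      : null,
};

const githubAdapter: PlatformAdapter<IssueLabeled> = {
  name: "github",
  // Labeling an issue "bug" triggers the bug-fix workflow.
  toRunRequest: (e) =>
    e.action === "labeled" && e.label === "bug"
      ? { workflow: "bug-fix", params: { bug_id: String(e.issueNumber) }, source: "github" }
      : null,
};

const fromCli = cliAdapter.toRunRequest(["run", "bug-fix", "42"]);
const fromGitHub = githubAdapter.toRunRequest({ action: "labeled", label: "bug", issueNumber: 42 });
console.log(fromCli?.workflow, fromGitHub?.workflow); // bug-fix bug-fix
```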

The key architectural insight: as MindStudio frames it, “The AI only runs where it adds value. Bash nodes handle the deterministic parts. Loop nodes handle retry logic. The harness structure doesn’t trust the AI to remember to run tests — it makes running tests mandatory.”
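Making tests mandatory comes down to one scheduling rule: a node runs only when all of its dependencies succeeded. Below is a hypothetical executor; names like `execute`, `ExecNode`, and the injected runners are assumptions, not Archon internals. It takes nodes already in dependency order and marks everything downstream of a failure as skipped.

```typescript
// Hypothetical executor sketch: nodes arrive already in dependency order, and a node
// runs only if every dependency succeeded. A failing "test" node therefore makes
// "review" and "pr" unreachable rather than optional.
type NodeStatus = "succeeded" | "failed" | "skipped";

interface ExecNode {
  id: string;
  kind: "ai" | "bash";
  dependsOn: string[];
}

// Runners are injected: a real AI runner would call a model, a bash runner a shell.
type Runner = (node: ExecNode) => boolean;

function execute(ordered: ExecNode[], runAi: Runner, runBash: Runner): Map<string, NodeStatus> {
  const statuses = new Map<string, NodeStatus>();
  for (const node of ordered) {
    const blocked = node.dependsOn.some((dep) => statuses.get(dep) !== "succeeded");
    if (blocked) {
      statuses.set(node.id, "skipped"); // an upstream gate failed, so this node cannot run
      continue;
    }
    const ok = node.kind === "ai" ? runAi(node) : runBash(node);
    statuses.set(node.id, ok ? "succeeded" : "failed");
  }
  return statuses;
}

const pipeline: ExecNode[] = [
  { id: "plan", kind: "ai", dependsOn: [] },
  { id: "implement", kind: "ai", dependsOn: ["plan"] },
  { id: "test", kind: "bash", dependsOn: ["implement"] },
  { id: "review", kind: "ai", dependsOn: ["test"] },
  { id: "pr", kind: "bash", dependsOn: ["review"] },
];

// Simulate an AI that reports success at every step and a test suite that fails.
const runStatuses = execute(pipeline, () => true, (n) => n.id !== "test");
console.log(pipeline.map((n) => `${n.id}=${runStatuses.get(n.id)}`).join(" "));
// plan=succeeded implement=succeeded test=failed review=skipped pr=skipped
```

The simulated AI reports success at every step, yet the failing test still blocks the PR. That is the structural guarantee, and it holds regardless of how good the AI output was.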

The 70% Claim: What the Data Actually Shows

The 6.7% → 70% PR acceptance rate improvement is Archon’s headline claim, and it’s worth unpacking honestly.

The full breakdown by task type shows:

- Maintenance tasks (documentation, CI config, build scripts): 74-92% acceptance with a harness

- Complex tasks (features, bug fixes, performance optimizations): 35-65% acceptance

The aggregate “70%” headline is technically accurate but blends two very different distributions. For straightforward, well-scoped work, a harness dramatically improves outcomes. For complex, novel problems — the kind where the AI’s reasoning is most uncertain — a harness helps but doesn’t solve the fundamental challenge.
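Why the mix matters is plain arithmetic: an aggregate acceptance rate is a share-weighted average of per-category rates. The task shares below are hypothetical. The per-category rates (80% and 50%) are simply values picked from inside the quoted ranges, and neither comes from the underlying study.

```typescript
// Worked arithmetic, not data: the task shares are hypothetical, and the rates are
// illustrative values chosen from inside the ranges quoted above.
interface Bucket {
  label: string;
  share: number; // fraction of all tasks in this category
  acceptance: number; // acceptance rate within the category
}

// Aggregate rate = sum(share * acceptance) / sum(share).
function blendedAcceptance(buckets: Bucket[]): number {
  const totalShare = buckets.reduce((sum, b) => sum + b.share, 0);
  return buckets.reduce((sum, b) => sum + b.share * b.acceptance, 0) / totalShare;
}

const maintenanceHeavy: Bucket[] = [
  { label: "maintenance", share: 0.8, acceptance: 0.8 },
  { label: "complex", share: 0.2, acceptance: 0.5 },
];
const featureHeavy: Bucket[] = [
  { label: "maintenance", share: 0.2, acceptance: 0.8 },
  { label: "complex", share: 0.8, acceptance: 0.5 },
];

console.log(blendedAcceptance(maintenanceHeavy).toFixed(2)); // 0.74
console.log(blendedAcceptance(featureHeavy).toFixed(2)); // 0.56
```

With identical per-category rates, the headline swings by 18 points (74% vs 56%) purely because of the task mix.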

This is the honest caveat: a harness is deterministic; the AI nodes inside it aren’t. What Archon provides is structured context and enforced checkpoints, which reduce variance by preventing the agent from skipping steps. But the quality of each AI step still depends on the model’s reasoning.

Frank’s World framing captures this well: “A harness is not a brain; it is an external orchestrator that dictates when and where the AI operates within a pre-defined YAML script.”

That’s the right mental model. Archon’s value is structural, not cognitive. It makes agents consistent, not correct.

The M×N Tool Calling Problem (and Why It Validates Archon’s Approach)

Today’s second top HN AI item — with 92 points — discussed the M×N tool calling problem: as agents gain access to more tools (N), and as the number of agents running simultaneously increases (M), the combinatorial space of possible execution paths explodes. Without structure, debugging a multi-agent failure requires tracing through an enormous state space of tool calls, model decisions, and partial outputs.

This is exactly the problem Archon’s DAG model addresses at the workflow level. When every run follows the same YAML-defined structure (same node sequence, same verification gates, same artifact outputs), debugging narrows dramatically. If the bug-fix workflow fails, you know it failed at the test node, not at review or pr. The structure isn’t just for repeatability; it’s for observability.

Archon captures all execution history in a SQLite or PostgreSQL database: conversations, workflow runs, node-level outputs, and timing. This turns opaque agent behavior into auditable records — a property that becomes essential as agentic systems move from demo to production.
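Node-level records are what turn “it failed at the test node” into a query instead of a guess. The record shape and function names here are hypothetical, since Archon's actual tables are not described in this review. The timings are made-up illustration values.

```typescript
// Hypothetical shape of a node-level run record; Archon's actual schema may differ.
interface NodeRecord {
  runId: string;
  nodeId: string;
  status: "succeeded" | "failed" | "skipped";
  startedAt: number; // epoch milliseconds
  finishedAt: number;
}

// Returns the earliest-starting failed node in a run, or null if nothing failed.
function firstFailure(records: NodeRecord[], runId: string): NodeRecord | null {
  const failed = records
    .filter((r) => r.runId === runId && r.status === "failed")
    .sort((a, b) => a.startedAt - b.startedAt);
  return failed.length > 0 ? failed[0] : null;
}

// Wall-clock milliseconds per executed node, useful for spotting slow AI steps.
function durations(records: NodeRecord[], runId: string): Record<string, number> {
  const out: Record<string, number> = {};
  for (const r of records) {
    if (r.runId === runId && r.status !== "skipped") out[r.nodeId] = r.finishedAt - r.startedAt;
  }
  return out;
}

// Illustrative history for one run (values are invented for the example).
const runHistory: NodeRecord[] = [
  { runId: "r1", nodeId: "plan", status: "succeeded", startedAt: 0, finishedAt: 4000 },
  { runId: "r1", nodeId: "implement", status: "succeeded", startedAt: 4000, finishedAt: 19000 },
  { runId: "r1", nodeId: "test", status: "failed", startedAt: 19000, finishedAt: 25000 },
  { runId: "r1", nodeId: "review", status: "skipped", startedAt: 25000, finishedAt: 25000 },
];

console.log(firstFailure(runHistory, "r1")?.nodeId); // test
console.log(durations(runHistory, "r1").implement); // 15000
```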

How Archon Compares to multica and hermes-agent

The open-source agentic ecosystem in April 2026 has three distinct bets on where multi-agent development breaks down. Archon, multica, and hermes-agent each represent one:

| | Archon | multica | hermes-agent |
|---|---|---|---|
| Core bet | Workflow structure | Team coordination | Agent learning |
| Architecture | YAML DAG harness | Orchestration layer above agents | 3-layer memory + skill persistence |
| Stars | 17K (+452 today) | 10K | 83K |
| Language | TypeScript | Python | Python |
| Problem solved | Inconsistent single-agent runs | No visibility across multiple agents | No institutional memory |

Archon vs multica: These are complementary, not competitive. Archon solves the within-agent problem: a single agent running a structured workflow reliably. Multica solves the between-agent problem: coordinating multiple agents, assigning tasks, preventing duplicate work, compounding skills. Used together, Archon provides deterministic execution and multica provides team visibility.

Archon vs hermes-agent: These solve genuinely different problems. Hermes (83K stars) is about long-term agent improvement: it maintains session memory, persistent memory across runs, and skill memory that compounds over time. Its thesis is that the bottleneck is agent intelligence, not workflow structure. Archon’s thesis is the reverse: the model is capable enough; the missing ingredient is enforcement.

Archon vs obra/superpowers: The superpowers framework provides composable, reusable skills at the agent level. Archon provides reusable workflows at the project level. Skills (superpowers) can be embedded as nodes within an Archon workflow, so the two are complementary layers.

Who Archon Is Actually For

Use Archon when:

- Your team has well-understood, repeatable coding workflows (bug fix lifecycle, migration pattern, PR template enforcement)

- You want to encode best practices as version-controlled YAML that runs identically across developers

- You’re dealing with AI agents that “forget” to run tests or skip your PR process

- You want parallel agent execution with git worktree isolation

Don’t use Archon when:

- Your work is primarily novel, open-ended exploration where there’s no repeatable structure to encode

- Your agents are used interactively (Archon is a batch/automated tool, not a chat interface)

- You just need task routing between agents — multica solves that without the workflow overhead

The configuration 2026 practitioners are converging on: Archon for workflow execution, multica for multi-agent coordination, and Claude Code as the underlying intelligence. The layers don’t conflict because they solve different problems at different abstraction levels.

The Verdict: Is “Harness Engineering” a Real Category?

Yes — with caveats.

The HN framing is right: multi-agent systems are distributed systems, and distributed systems engineering is a real discipline with real tools. You need determinism where possible, verification gates between stages, isolation between concurrent processes, and repeatable deployments. Archon provides all of these for AI coding workflows.

The “deterministic” claim is real but limited. The structure is deterministic. The AI cognition inside the structure isn’t. Archon gives you a guarantee that the agent will follow the workflow — not that it will produce correct results at each step. That’s still more than you get without a harness, and the PR acceptance data validates the improvement.

The GitHub star velocity (+452 today, #2 on GitHub Trending) reflects genuine developer demand: as agentic coding tools become load-bearing infrastructure, reliability and repeatability become non-negotiable.

If you’re already using Claude Code or Codex for repetitive workflows, Archon is the layer that turns your prompting patterns into version-controlled, team-shareable engineering artifacts. That’s a real unlock — and 17K stars suggest the developer community agrees.

Sources: coleam00/Archon on GitHub · HN: Multi-Agentic as Distributed Systems (93pts) · HN: Only the Harness Changed · Stork.AI Archon review · MindStudio: What is Archon? · Frank’s World: Harness Engineering · ByteIota: YAML workflows · DEV.to: Archon #1 on GitHub · GitHub Issue #957: Complete Rewrite · AIToolly: Archon benchmark builder · OpenClaw API: Archon beginners guide