Tokenmaxxing: Codex + Claude Code Operator Stack 2026

YC named it tokenmaxxing — one founder + agent harness doing the work of 400 engineers. Here's the stack: Codex parallel tabs and Claude Code skills.

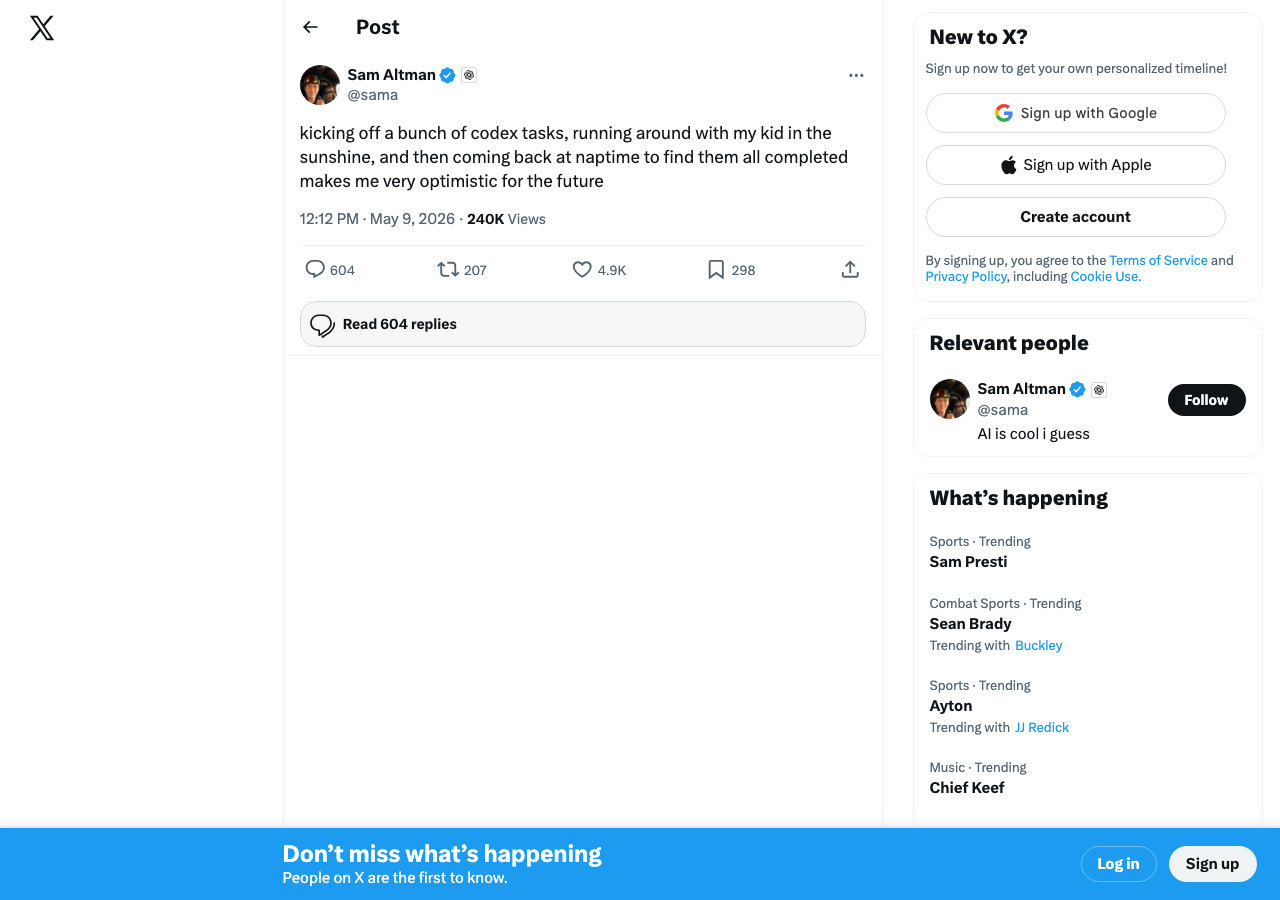

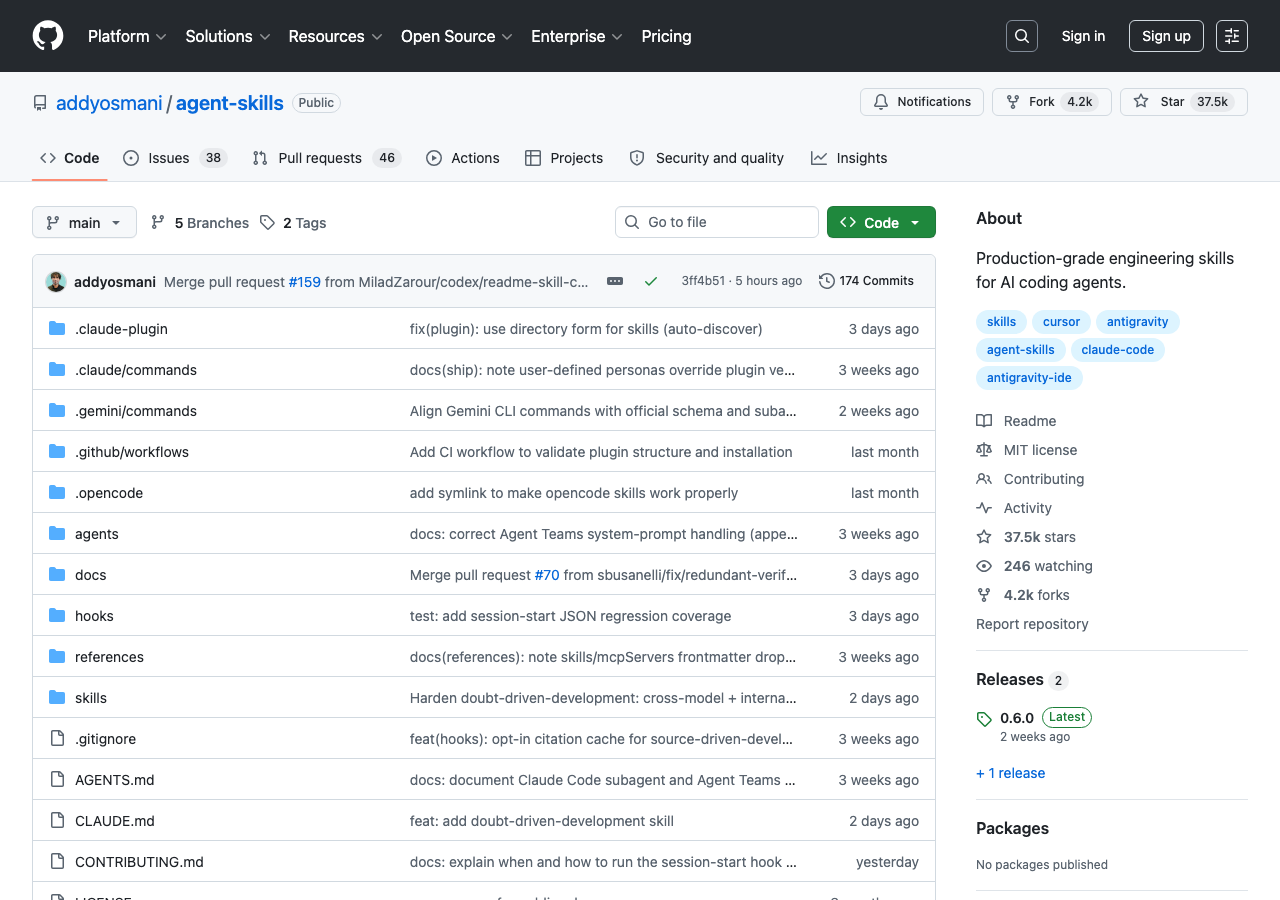

In May 2026, four independent surfaces named the same pattern in the same news cycle. YC Lightcone called it tokenmaxxing: one founder plus an agent harness doing the work of four hundred engineers. OpenAI shipped Codex into the browser with parallel-tab and background execution. addyosmani/agent-skills hit #1 on GitHub trending and accelerated on day three, climbing from 1,794 stars/day to 2,801 at the point where most trending repos have lost half their velocity. And Sam Altman, who runs the lab on the other side of the rivalry, tweeted that he was “kicking off a bunch of codex tasks, running around with my kid in the sunshine, and then coming back at naptime to find them all completed.”

That’s not four stories. It’s one story, showing up on four surfaces at once.

The stack is named, and the operator class has stopped arguing about which CLI wins. They run both, in parallel, against the same task, and the harness picks the winner. The model isn’t the product anymore. The orchestration layer is. This piece covers what that stack actually looks like, why “skills” became the unit of design, and what the new productivity primitive, tokens deployed per founder, replaces.

What “tokenmaxxing” actually means

The phrase comes out of YC’s Lightcone podcast, from the same hosts who, the week prior, named “Thin Harness, Fat Skills” as the operator pattern. Tokenmaxxing extends that thesis: when the harness is good and the skills are good, the limiting reagent on a founder’s output isn’t engineering hours but the rate at which they can deploy tokens against work. A founder who knows how to spin up parallel agent runs, dispatch them at the right scale, and merge the results back is, in YC’s framing, “doing the work of 400 engineers.”

That number is rhetorical. The framing isn’t. When a YC podcast names a productivity primitive, the term locks in for founder discourse for the next two quarters. Tokenmaxxing is the Q3 2026 vocabulary anchor — the same way “ramen profitable” was for 2010 and “default alive” was for 2018.

The mechanic is concrete. You don’t have one Codex window or one Claude Code tab. You have N of each, running in parallel, against the same problem, with the harness — your cc-switch or 9router or hand-rolled skill — routing tasks across them. Some operators run Codex with direct Chrome control editing arbitrary apps in headless tabs while Claude Code handles the canonical repo. Some run agents stitched through rtk to compress token-spend on common dev commands. The shape varies. The primitive — multiple agents, one human, one harness — does not.
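The fan-out pattern described above (N agent runs against one task, with the harness arbitrating) can be sketched in a few lines of Python. This is a minimal sketch under loud assumptions: `run_agent` is a hypothetical stand-in for whatever actually invokes Codex or Claude Code (no real CLI or API is called), and `score` is a placeholder arbitration rule, here just “tests pass, then smallest diff.”

```python
import asyncio
from dataclasses import dataclass


@dataclass
class AgentResult:
    agent: str
    tests_passed: bool
    lines_changed: int


async def run_agent(agent: str, task: str, latency: float, passes: bool, lines: int) -> AgentResult:
    """Hypothetical stand-in for dispatching `task` to one agent run.

    A real harness would shell out to a CLI or call a provider API here;
    this stub only sleeps to simulate work and returns canned results.
    """
    await asyncio.sleep(latency)
    return AgentResult(agent=agent, tests_passed=passes, lines_changed=lines)


def score(result: AgentResult) -> tuple[bool, int]:
    # Placeholder arbitration rule (an assumption, not any tool's real policy):
    # prefer runs whose tests pass, then the smallest diff.
    return (result.tests_passed, -result.lines_changed)


async def fan_out(task: str, runs: list[tuple[str, float, bool, int]]) -> AgentResult:
    # Launch every agent run concurrently against the same task...
    results = await asyncio.gather(
        *(run_agent(name, task, latency, passes, lines) for name, latency, passes, lines in runs)
    )
    # ...then let the harness, not the brand, pick the winner.
    return max(results, key=score)


if __name__ == "__main__":
    runs = [
        ("codex-tab-1", 0.03, True, 120),
        ("codex-tab-2", 0.01, False, 40),
        ("claude-code-1", 0.02, True, 85),
    ]
    winner = asyncio.run(fan_out("fix flaky login test", runs))
    print(winner.agent)  # claude-code-1
```

Swapping `score` for a real evaluator (a test-suite run, a reviewer skill) is where the actual design work lives; the concurrency itself is the easy part.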

Codex moves into the browser, and the rivalry becomes a stack

OpenAI’s Codex update is the load-bearing infrastructure for half this stack. The launch shipped three things together: direct Chrome control on macOS and Windows, parallel-tab work, and background execution. That last one is what Altman’s nap-time tweet is celebrating: you queue work, walk away, and come back to results. His tweet is the consumer-facing version of the same primitive that YC is naming on the founder side.

The shift in creator coverage is the tell. Three weeks ago, AI YouTube was still picking sides — which CLI is winning? This week, David Ondrej walks through editing arbitrary apps via Codex, Chase AI is pushing the “Agentic OS” framing for Claude Code, and the convergence quote — “the model isn’t the product, the orchestration layer is” — shows up across both clusters in the same 24h. The creators stopped picking sides because the operators they cover stopped picking sides. You run both, you let the harness arbitrate, and the task — not the brand — picks the winner.

This is also the story behind Codex itself climbing onto the GitHub trending board this week (#10 blip, 367 stars). The repo trending alongside addyosmani/agent-skills isn’t a coincidence — it’s a market signal that operators are pulling Codex into stacks where Claude Code already lives.

Skills are the unit, and the day-3 climb proves it

addyosmani/agent-skills is the artifact that locks the pattern in. It’s a curated bundle of production-grade SKILL.md files — Addy Osmani’s claim is “these are the skills I actually use, not theoretical examples” — designed to drop into Claude Code, Cursor, or Antigravity. The repo trended yesterday at 1,794 stars/day in the #2 slot. Today it’s at 2,801 stars/day at #1.

That acceleration on day three is the part that matters. Most trending repos lose 50–70% of their velocity by day three. When a repo climbs on day three instead, the underlying thesis is doing the work: the launch volume has burned off, and what remains is operators arriving on their own and starring it because they need it. The skills-as-unit framing has cleared the day-3 retention test. Treat that as the canonical proof point for Q3.
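The day-3 arithmetic is worth making explicit. The sketch below uses the two stars/day figures reported for agent-skills and, as a rough assumption, applies the typical 50–70% decay band to yesterday’s figure (the band presumably refers to launch-day velocity, so this understates the gap if anything).

```python
day2, day3 = 1_794, 2_801  # stars/day on consecutive days, as reported above

change = (day3 - day2) / day2
print(f"day-3 change: {change:+.1%}")  # day-3 change: +56.1%

# What day three would have looked like inside the typical 50-70% decay band,
# using yesterday's figure as the baseline (an assumption).
for decay in (0.50, 0.70):
    print(f"{decay:.0%} decay -> {day2 * (1 - decay):,.0f} stars/day")
# 50% decay -> 897 stars/day
# 70% decay -> 538 stars/day
```

Against a “normal” day three somewhere between roughly 540 and 900 stars/day, 2,801 is not a rounding error.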

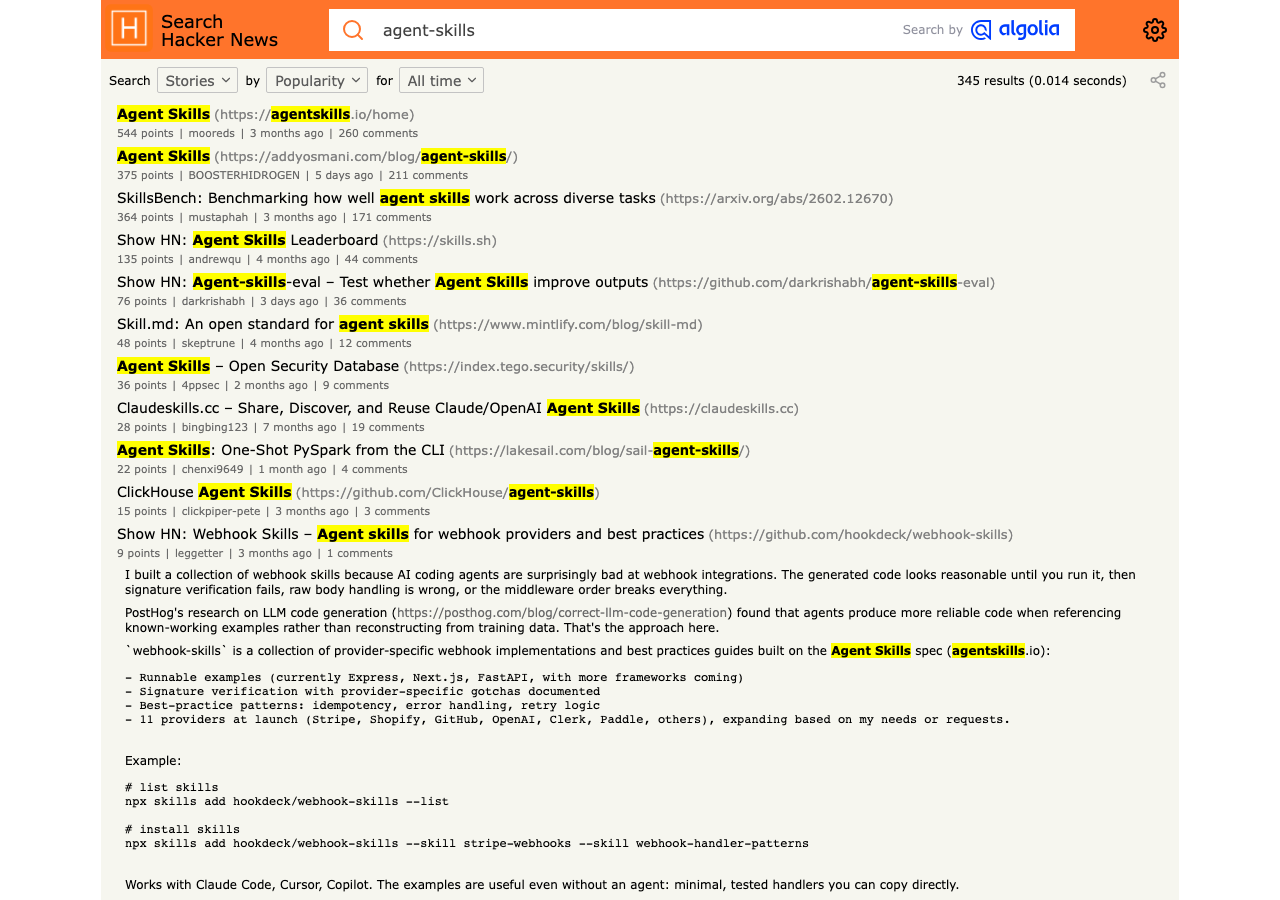

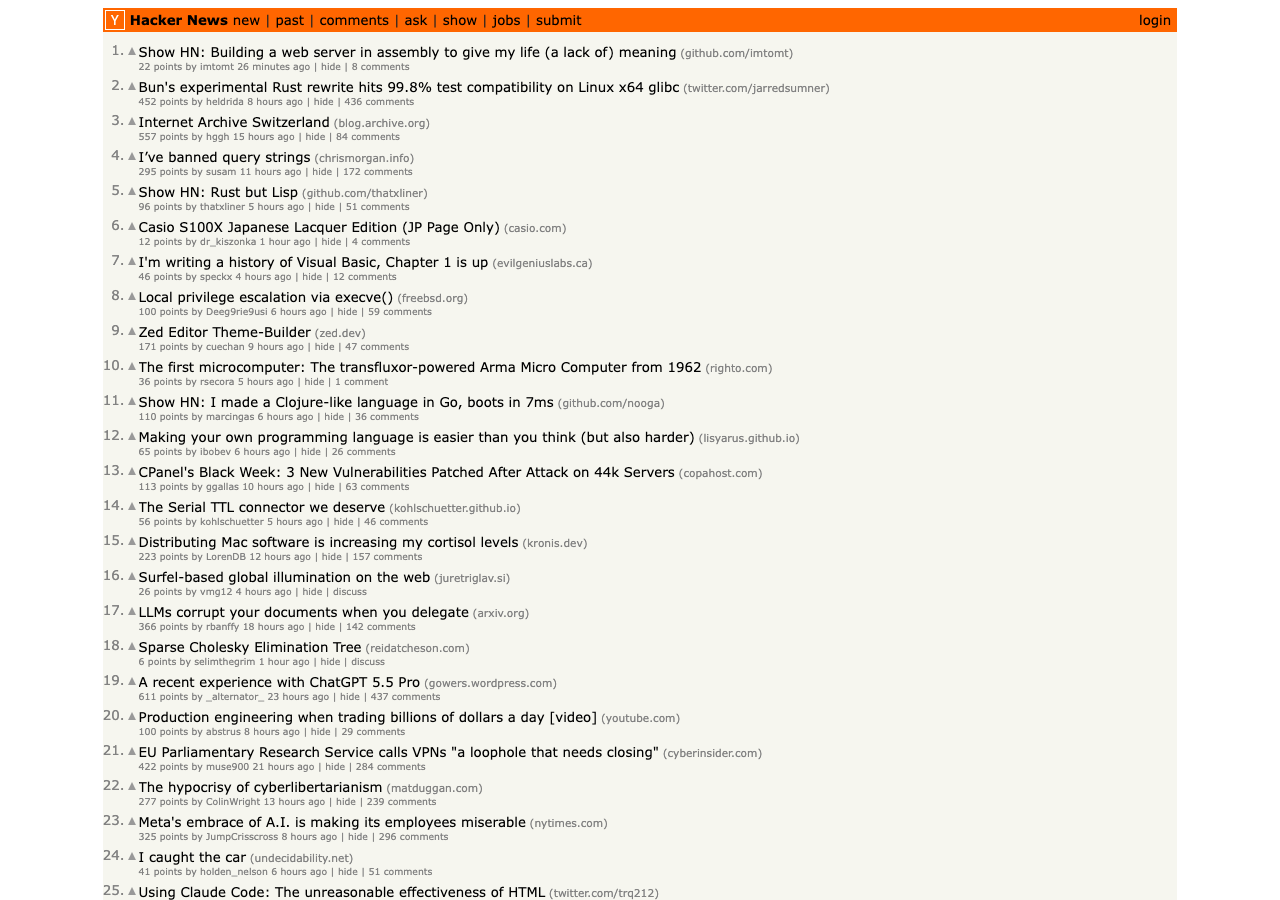

The HN side of the conversation tracks the same pattern, with agent-skills threads circulating across the engineering community in the same week the GitHub trending repo accelerated.

Why “skills” specifically? Because operators have figured out — across the obra/superpowers skills-framework primer, the skills-directory race, and addyosmani’s curation — that prompts don’t compose; skills do. A prompt is a single instruction; a skill is a named, file-located, reusable behavior with explicit inputs and outputs. You can git diff a skill. You can review it in PR. You can compose it with another skill. You can hand it to another founder and they can run it. None of that is true of a clever paragraph in a prompt window.
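To make “named, file-located, with explicit inputs and outputs” concrete, here is a minimal sketch that loads a skill from a SKILL.md-style file. It assumes YAML-style frontmatter with `name` and `description` keys, as in Anthropic’s Agent Skills format; the `inputs` and `outputs` keys are hypothetical, added only to illustrate the explicit-interface point, and the tiny parser handles flat `key: value` lines rather than full YAML.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    name: str
    description: str
    inputs: list[str] = field(default_factory=list)   # hypothetical frontmatter key
    outputs: list[str] = field(default_factory=list)  # hypothetical frontmatter key
    body: str = ""


def _as_list(value: str) -> list[str]:
    # "a, b" -> ["a", "b"]; empty string -> []
    return [item.strip() for item in value.split(",") if item.strip()]


def parse_skill(text: str) -> Skill:
    """Parse a SKILL.md-style document: '---' frontmatter, then a Markdown body.

    Handles flat `key: value` lines only, not full YAML.
    """
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("expected opening '---' frontmatter fence")
    end = next((i for i in range(1, len(lines)) if lines[i].strip() == "---"), None)
    if end is None:
        raise ValueError("unterminated frontmatter")
    meta: dict[str, str] = {}
    for line in lines[1:end]:
        key, _, value = line.partition(":")
        meta[key.strip()] = value.strip()
    return Skill(
        name=meta["name"],
        description=meta["description"],
        inputs=_as_list(meta.get("inputs", "")),
        outputs=_as_list(meta.get("outputs", "")),
        body="\n".join(lines[end + 1:]).strip(),
    )


# A hypothetical skill file, invented for illustration.
EXAMPLE = """\
---
name: write-migration
description: Generate a reversible database migration for a schema change.
inputs: schema_diff, target_db
outputs: migration_file, rollback_file
---
# Write migration
1. Read the schema diff.
2. Emit forward and rollback steps.
"""

skill = parse_skill(EXAMPLE)
print(skill.name, skill.inputs)  # write-migration ['schema_diff', 'target_db']
```

Because the whole unit is a text file with a declared interface, it diffs, reviews, and ships like any other code.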

Nate B Jones nailed the corollary: prompt skill is commoditized; packaged, repeatable workflows are where the value pool sits now. The skills-as-unit framing is the productization gap closing.

💡 The HN deep cut. Yesterday’s HN front page carried “Control Flow > Prompts” (557 pts) — the engineering-side version of the same insight. When the founder discourse and the engineering discourse name the same primitive on consecutive days from independent surfaces, that’s the term that sticks.

The harness layer fills in around it

A skills directory is necessary but not sufficient. You also need a harness that can route work across multiple agents, switch providers when one rate-limits, and cut token spend. This week’s GitHub trending board makes that unusually visible: the harness layer is all over it.
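Provider fallback on rate limits is the simplest piece of a harness to sketch. This is not 9router’s or cc-switch’s implementation; the provider callables and the `RateLimited` exception are hypothetical stand-ins for whatever a real client raises on an HTTP 429.

```python
from typing import Callable


class RateLimited(Exception):
    """Hypothetical error a provider client raises when it is rate-limited."""


Provider = Callable[[str], str]


def complete_with_fallback(prompt: str, providers: list[tuple[str, Provider]]) -> tuple[str, str]:
    """Try providers in priority order; on a rate limit, fall through to the next.

    Returns (provider_name, completion). Any error other than RateLimited is
    treated as a real failure and propagates immediately.
    """
    skipped: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except RateLimited:
            skipped.append(name)
    raise RuntimeError(f"all providers rate-limited: {', '.join(skipped)}")


# Hypothetical providers for illustration.
def primary(prompt: str) -> str:
    raise RateLimited("429 from primary")


def secondary(prompt: str) -> str:
    return f"done: {prompt}"


if __name__ == "__main__":
    print(complete_with_fallback("refactor auth", [("primary", primary), ("secondary", secondary)]))
    # ('secondary', 'done: refactor auth')
```

A production router would add backoff, per-provider quotas, and cost-aware ordering; the core of fallback is still just a loop over a ranked list.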

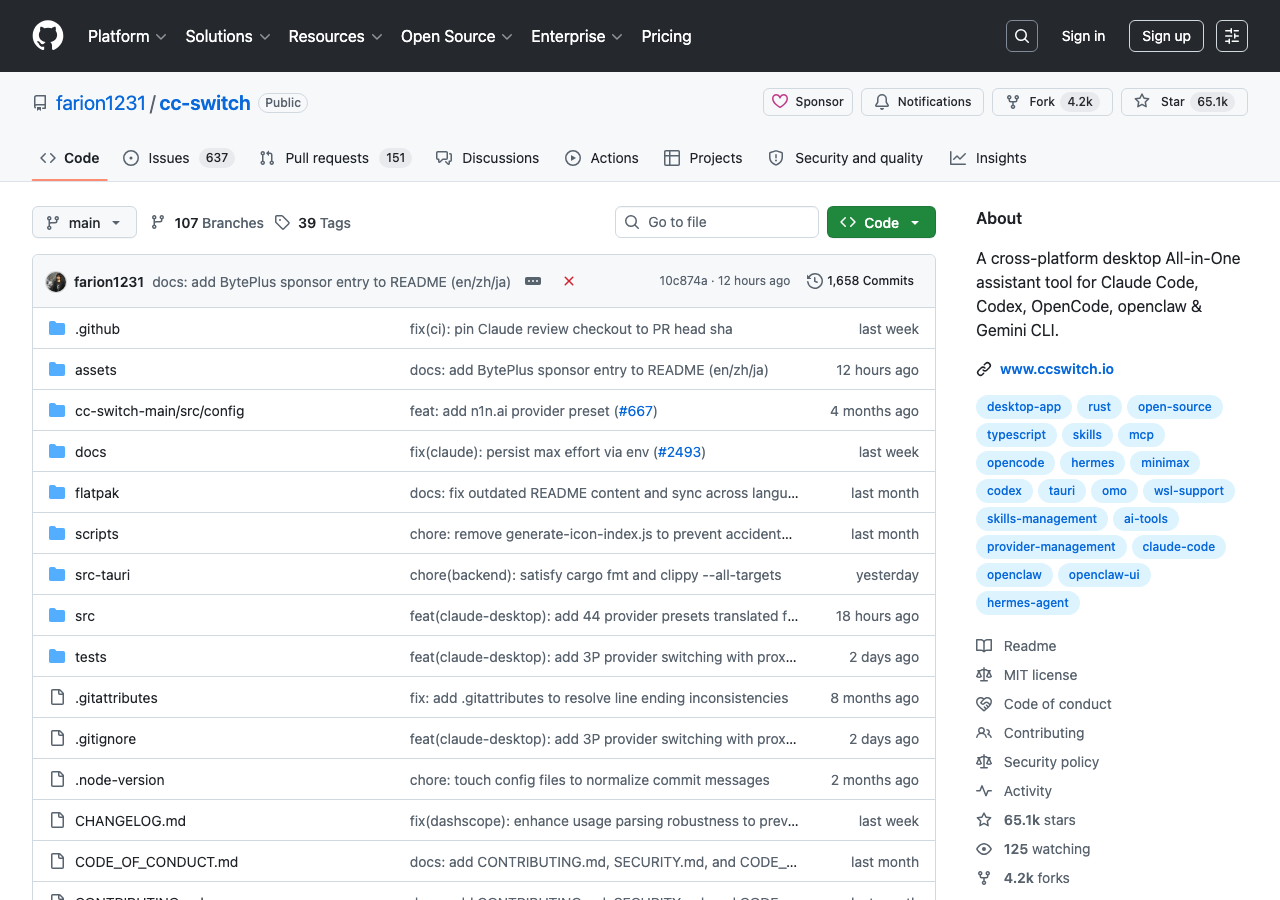

- farion1231/cc-switch at #3 is the all-in-one assistant tool for Claude Code, Codex, OpenCode, and Gemini CLI — the multi-agent CLI switcher itself, holding 1,238 stars/day on day three.

- decolua/9router at #4 connects Claude Code, Codex, Cursor, Cline, Copilot, and Antigravity to forty-plus free providers, with auto-fallback and a claimed 40% token reduction.

- rtk-ai/rtk at #9 is a Rust-binary CLI proxy that filters command outputs to cut token consumption by a claimed 60–90% on common dev workflows.

- openai/codex at #10 — the agent itself, trending in the same week as everything else that wants to plug into it.
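The token-economics middleware idea is also easy to illustrate. The sketch below is not rtk’s logic; it is a hypothetical filter that keeps only failure lines and the summary from a pytest-style test log, and it uses character count as a crude proxy for token count (an assumption; real tokenizers differ).

```python
def filter_test_output(raw: str) -> str:
    """Keep failure lines and the summary banner; drop per-test PASSED noise.

    The markers ('FAILED', 'ERROR', '=====') are assumptions about a
    pytest-like log format, not a general rule.
    """
    keep = [
        line
        for line in raw.splitlines()
        if "FAILED" in line or "ERROR" in line or line.startswith("=====")
    ]
    return "\n".join(keep)


def reduction(raw: str, filtered: str) -> float:
    # Fraction of characters removed; characters stand in for tokens here.
    if not raw:
        return 0.0
    return 1 - len(filtered) / len(raw)


if __name__ == "__main__":
    passed = [f"tests/test_api.py::test_case_{i} PASSED" for i in range(40)]
    log = "\n".join(
        passed + ["tests/test_api.py::test_login FAILED", "===== 1 failed, 40 passed in 2.31s ====="]
    )
    short = filter_test_output(log)
    print(short)
    print(f"~{reduction(log, short):.0%} fewer characters")
```

The agent still sees everything it needs to act (which test failed, and the totals) while the repetitive success lines never reach the context window.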

These four repos trending in the same week are not a coincidence. They are a category materializing in front of you: the harness is now its own market, complete with multi-CLI switchers, multi-provider routers, and token-economics middleware. The “thin harness, fat skills” thesis isn’t a metaphor — it’s a literal shape that GitHub’s trending board is currently showing.

Anthropic’s growth is the shadow that the stack casts

If a single founder who is tokenmaxxing can do the work of 400 engineers, the labs that enable that workflow grow disproportionately. Latent Space’s morning headline this week, “Anthropic growing 10x/year while everyone else laying off >10%,” is the macro shadow this operator pattern casts. Five surfaces locked it in:

- Substack: $15B ARR, $1–1.2T secondary.

- Reddit: “Meta Is Dying” at 23.8K pts, Truth Social’s $400M loss, Oracle’s severance refusal.

- Tech YouTube: All-In’s “Anthropic monopoly?” panel at 220K views in 19h.

- AI YouTube: TheAIGRID’s “OpenAI Is Losing The AI War.”

- Polymarket: Anthropic at 94% on best Coding AI model end-of-May, up 9% week-over-week.

⚠️ The framing is internally consistent across surfaces. When one founder + harness = 400 engineers, the labs that scale that workflow grow 10x while everyone else lays off. That’s the macro story Substack, Reddit, Polymarket, and YC are all telling — same shape, four different vocabularies.

The Codex-in-browser launch fits inside this frame, not against it. OpenAI is also shipping the harness primitives. They’re not losing — they’re playing the same game with a different surface. But Polymarket’s pricing on coding-specific models tells you who the operator class currently treats as the default substrate. The 94% line on coding AI is what tokenmaxxing in production looks like as a market price.

What founders should actually do this week

If you’re the kind of founder this discourse is being built for, here is the operator-grade playbook this week:

- Install addyosmani/agent-skills and use it as the seed. Read the SKILL.md files. Copy the ones that fit your domain. Write the ones that don’t. The unit you’re learning isn’t a prompt — it’s a named, reviewable, composable file.

- Pick a harness that lets you run multiple agents in parallel. Either roll your own with cc-switch or use 9router for the multi-provider angle. The point is one human, multiple agents, parallel work. If you’re still in single-tab mode, you’re not tokenmaxxing — you’re prompting.

- Run Codex’s parallel-tab + background execution against your messiest task. Queue it, walk away, come back. If the result is good, you’ve found a workflow that benefits from the new primitive. If it’s bad, you’ve found a workflow that needs more skill engineering. Both are useful.

- Read the harness-comparison piece to calibrate which CLI sits where in your stack. You probably want both running. The harness-level question is which-for-what, not which-overall.

- Read the obra/superpowers skills-framework primer if you want to understand the genre theoretically, then the skills-directory race for the competitive landscape across curated bundles.

✨ The honest constraint. None of this works if your codebase isn’t structured for it. Tokenmaxxing exposes architectural mess — when you fan out to 8 parallel agents, the ones working in well-bounded modules finish; the ones working in spaghetti come back asking for clarification. Your harness amplifies your repo’s structure, for better and worse.
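The triage step after a fan-out, separating runs that finished from runs that came back asking for clarification, is where repo structure shows up. A minimal sketch, assuming each hypothetical agent run reports a `status` and the module it touched; the status values, tasks, and module names are invented for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Literal

Status = Literal["done", "needs_clarification"]


@dataclass(frozen=True)
class Run:
    task: str
    module: str
    status: Status


def triage(runs: list[Run]) -> tuple[list[Run], Counter]:
    """Split finished runs from stalled ones and count stalls per module.

    Modules that keep producing clarification requests are candidates for
    refactoring (or for more skill engineering) before the next fan-out.
    """
    finished = [r for r in runs if r.status == "done"]
    stalls = Counter(r.module for r in runs if r.status == "needs_clarification")
    return finished, stalls


if __name__ == "__main__":
    runs = [
        Run("add rate limiter", "billing", "done"),
        Run("rename user fields", "legacy_core", "needs_clarification"),
        Run("fix date parsing", "legacy_core", "needs_clarification"),
        Run("add export endpoint", "reports", "done"),
    ]
    finished, stalls = triage(runs)
    print(len(finished), stalls.most_common(1))  # 2 [('legacy_core', 2)]
```

Counting stalls per module turns “the harness amplifies your repo’s structure” into something you can actually look at after each batch.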

What the operator pattern displaces

The pattern displaces three older shapes:

- The single-CLI religious war. “I use Cursor / I use Claude Code / I use Codex” as identity is over. The operator runs all three and routes tasks across them. The vocabulary is now stack-shaped, not brand-shaped.

- Prompt engineering as a discipline. Promptcraft was the 2024 frontier; skill engineering — file-located, composable, reviewable — is the 2026 successor. The HN piece on “control flow > prompts” is the engineering-side validation. The skills-directory race is the supply-side market response.

- “How many engineers” as the unit of company size. When one founder + harness = 400 engineers, the metric inverts. The number that matters is tokens deployed per founder per week, not headcount. This is what Sam Altman’s nap-time tweet is implicitly tracking. He’s measuring output, not hours.

The capability-recognition lag

There’s a version of this story where you read this in May 2026 and think “interesting, but my workflow is fine.” That’s the same response operators had to “thin harness, fat skills” three weeks ago. The pattern has now had three weeks to compound, and the GitHub board, the YC vocabulary, the Anthropic growth chart, and the Polymarket coding-AI line are all showing the same shape.

The capability-recognition lag is real. Diamandis’ “GPT 5.5 silently matches Mythos” is the consumer-facing version of the same lag — a frontier model doing more than people noticed. Tokenmaxxing is the operator-side version. By Q3, the founders who set up the harness in May will be the ones explaining it on podcasts. The ones who waited will be the ones the podcasts are about.

The stack is named. The skills are public. The harnesses are trending. The infrastructure is one launch behind. What’s left is the part nobody can outsource — actually wiring it together, against your own work, this week.

Primary sources: YC Lightcone • OpenAI Codex launch • addyosmani/agent-skills • @sama • David Ondrej • Chase AI • Nate B Jones • openai/codex • cc-switch • 9router • rtk • Diamandis • All-In Pod • Polymarket.