GSD 2 vs Claude Code vs Codex CLI: 2026 Comparison

GSD 2, Claude Code, and Codex CLI compared head-to-head. Architecture, autonomy, pricing, and git workflow — which coding agent CLI fits your workflow?

The coding agent CLI war just got a third contender — and a possible fourth.

GSD 2 (Get Stuff Done) launched this week as a standalone CLI tool, breaking free from its origins as an orchestration layer inside Claude Code. It hit 410 points on Hacker News within hours. Meanwhile, Mistral’s launch of Forge drew 669 points, a sign that even European AI labs want a piece of the developer tooling market.

If you write code in a terminal in 2026, you now have real choices. Claude Code, Codex CLI, and GSD 2 each take fundamentally different approaches to the same problem: turning a natural language prompt into working, committed code. This guide breaks down what actually matters when choosing between them.

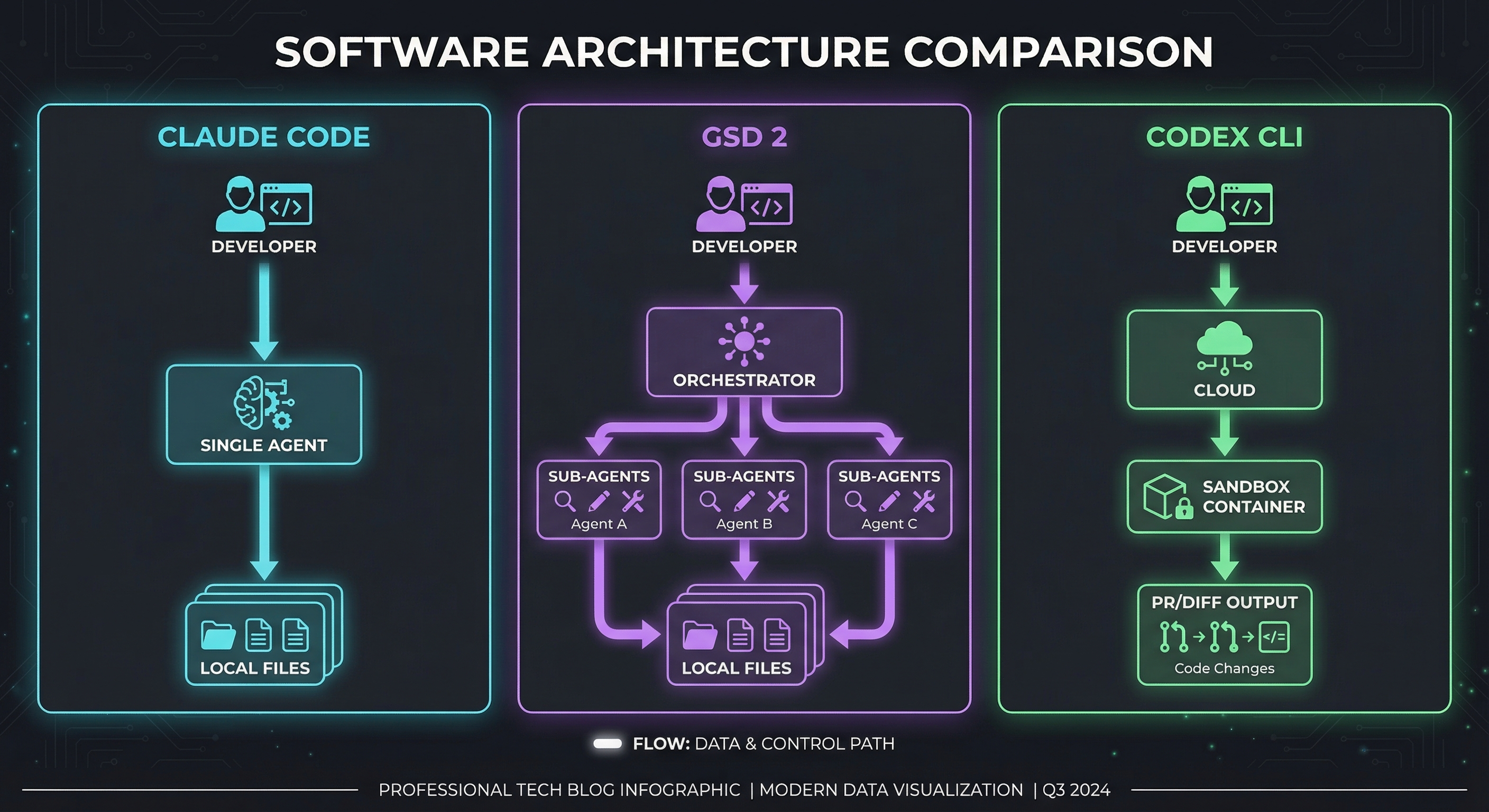

The Three Architectures, Explained

Before comparing features, you need to understand that these tools are built on genuinely different philosophies. These are not three wrappers around the same API.

Claude Code: The Direct Agent Model

Claude Code by Anthropic operates as a single, powerful agent running directly in your terminal. You describe what you want. Claude reads your codebase, reasons about the changes, edits files, runs commands, and iterates until the task is done.

The mental model is simple: one agent, one context window, direct execution. Claude Code leverages Anthropic’s extended thinking capability to reason through complex multi-file changes before writing a single line. It explores your project structure, picks up your conventions, and aims for code that looks like a senior developer wrote it: someone who actually read the existing codebase first.
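The direct-agent model implies a simple loop. This minimal sketch uses one history list to stand in for the single context window, a model that picks the next action, and tools that run immediately. `DirectAgent`, `scripted_model`, and the `read`/`edit` tools are hypothetical names for illustration. This is not Claude Code’s internal design or API.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Action:
    tool: str      # name of a tool to run, or "done" to stop
    arg: str = ""

@dataclass
class DirectAgent:
    model: Callable[[list[str]], Action]   # hypothetical: history in, next action out
    tools: dict[str, Callable[[str], str]]
    history: list[str] = field(default_factory=list)  # the one shared context

    def run(self, prompt: str, max_steps: int = 20) -> list[str]:
        self.history.append(f"user: {prompt}")
        for _ in range(max_steps):
            action = self.model(self.history)
            if action.tool == "done":
                break
            # Run the tool immediately and append its result to the same context.
            result = self.tools[action.tool](action.arg)
            self.history.append(f"{action.tool}({action.arg}) -> {result}")
        return self.history

# Hypothetical scripted "model": read a file, edit it, then stop.
def scripted_model(history: list[str]) -> Action:
    script = [Action("read", "app.py"), Action("edit", "app.py"), Action("done")]
    return script[min(len(history) - 1, len(script) - 1)]

files = {"app.py": "print('hi')"}

def read(path: str) -> str:
    return files[path]

def edit(path: str) -> str:
    files[path] += "\n# edited"
    return "ok"

agent = DirectAgent(scripted_model, {"read": read, "edit": edit})
log = agent.run("add a comment to app.py")
```

Every prompt, action, and tool result accumulates in one `history`. That is why very large tasks can exhaust a single context window.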

Strengths of this approach:

- Minimal abstraction between you and the model. What you say is what gets executed.

- Extended thinking means complex architectural decisions are reasoned through, not guessed at.

- Deep git integration. Claude Code understands your commit history and branch structure, and it can produce clean, atomic commits.

- Works with your existing editor. It is a terminal tool, not an IDE replacement.

Where it struggles:

- Large tasks can exhaust the context window. When a feature touches 30 files across multiple subsystems, a single context window becomes a bottleneck.

- No built-in task decomposition. You are the orchestrator — you decide when to break a big task into smaller ones.

- Token costs on complex tasks can add up quickly, especially with extended thinking enabled.

GSD 2: The Meta-Prompting Orchestrator

GSD 2 takes the opposite approach. Instead of one powerful agent doing everything, it is an orchestration system that decomposes your goal into context-window-sized tasks, then dispatches sub-agents to execute each one.

The origin story matters here: GSD started as a set of custom prompts that lived inside Claude Code. Version 2 rebuilt it as a standalone CLI on the PI SDK. Architecturally, it is a harness that sits on top of an AI model (currently Claude) and manages the complexity that the model alone cannot handle.

The iron rule of GSD: every task must fit within a single context window. If a task is too large, the system breaks it down further. This is the core insight that makes it work for big projects where raw Claude Code starts to degrade.
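The iron rule can be sketched as a recursive split. The sketch assumes each task carries a token estimate and that the window is 200,000 tokens. `Task`, `split`, and `decompose` are hypothetical names. The naive halving in `split` stands in for whatever model-driven planning GSD performs.

```python
from dataclasses import dataclass

@dataclass
class Task:
    name: str
    est_tokens: int  # hypothetical estimate of the context this task needs

def split(task: Task) -> list[Task]:
    # Stand-in for the LLM planner: naively halve the work into two subtasks.
    half = task.est_tokens // 2
    return [Task(f"{task.name}.1", half), Task(f"{task.name}.2", task.est_tokens - half)]

def decompose(task: Task, window: int) -> list[Task]:
    """Recursively split until every leaf task fits in one context window."""
    if window <= 0:
        raise ValueError("window must be positive")
    if task.est_tokens <= window:
        return [task]
    leaves: list[Task] = []
    for sub in split(task):
        leaves.extend(decompose(sub, window))
    return leaves

plan = decompose(Task("auth-feature", 450_000), window=200_000)
```

A real planner would split along semantic boundaries such as files or subsystems rather than halving a number. The invariant is the same either way: no leaf task exceeds the window.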

How the workflow actually works:

- /gsd:new-project: The system interviews you about your idea until it understands goals, constraints, tech preferences, and edge cases. It spawns parallel research agents to investigate the domain, then generates a structured spec: PROJECT.md, REQUIREMENTS.md, ROADMAP.md, and STATE.md.

- /gsd:discuss-phase: Before building, GSD identifies gray areas in each phase and asks you to resolve them. Visual features? It asks about layout density and empty states. APIs? Response format and error handling.

- /gsd:research-phase: Parallel agents investigate implementation approaches.

- /gsd:plan-phase: Produces a detailed execution plan grounded in your spec and research.

- /gsd:build-phase: Executes the plan task by task, with each task scoped to fit one context window.
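Writing state to a file such as STATE.md plausibly lets each phase start in a fresh context and still know where the project stands. The sketch below assumes a made-up `done: <phase>` line format and uses hypothetical helper names. GSD’s real STATE.md contents will differ.

```python
import tempfile
from pathlib import Path
from typing import Optional

# Phase order from the workflow above; the "done: <phase>" file format is hypothetical.
PHASES = ["new-project", "discuss-phase", "research-phase", "plan-phase", "build-phase"]

def load_done(state_file: Path) -> set[str]:
    """Read the set of completed phases recorded in the state file."""
    if not state_file.exists():
        return set()
    prefix = "done: "
    return {line[len(prefix):] for line in state_file.read_text().splitlines()
            if line.startswith(prefix)}

def mark_done(state_file: Path, phase: str) -> None:
    """Append a completed phase so a later session can see it."""
    with state_file.open("a") as f:
        f.write(f"done: {phase}\n")

def next_phase(state_file: Path) -> Optional[str]:
    """Return the first phase not yet completed, or None when all are done."""
    done = load_done(state_file)
    return next((p for p in PHASES if p not in done), None)

with tempfile.TemporaryDirectory() as d:
    state = Path(d) / "STATE.md"
    mark_done(state, "new-project")
    mark_done(state, "discuss-phase")
    # A fresh session (for example, after a crash) resumes from the file alone.
    resumed_at = next_phase(state)
    for p in PHASES:
        mark_done(state, p)
    finished = next_phase(state)
```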

Strengths of this approach:

- Targets “context rot”, the quality degradation that sets in as any LLM fills its context window. Scoping each task to one window is meant to keep output quality consistent.

- Spec-driven development means the AI understands the big picture even when working on small pieces.

- Auto mode allows fully autonomous project builds. Give it one prompt, walk away.

- Clean git history — each completed task gets its own commit.

Where it struggles:

- Burns significantly more tokens than direct Claude Code usage. Multiple HN commenters reported 10x token consumption.

- The spec-driven approach adds overhead that may not be worth it for small tasks or quick fixes.

- Currently still depends on Anthropic models under the hood — GSD 2 runs on the PI SDK, which means Claude is the engine.

Codex CLI: The Cloud Sandbox Model

OpenAI’s Codex CLI takes a third approach built around isolated sandboxes. When you delegate a task to Codex’s cloud environment, it spins up a container with your repo, lets the agent work in isolation, and returns the result as a diff or pull request.

This sandboxing model has a unique advantage: the agent literally cannot break your local environment. It can install dependencies, run tests, even execute destructive commands — all within a disposable container that gets thrown away after the task completes.
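The disposable-environment idea can be approximated locally. This sketch copies the repo into a temporary directory, lets an agent loose on the copy, and hands back only a diff. A temporary directory is a much weaker boundary than a container, because the agent’s process can still reach the rest of your filesystem. `run_in_sandbox` and the sample `agent` are hypothetical names, not part of Codex CLI.

```python
import difflib
import shutil
import tempfile
from pathlib import Path
from typing import Callable

def _lines(path: Path) -> list[str]:
    return path.read_text().splitlines(keepends=True) if path.exists() else []

def run_in_sandbox(repo: Path, agent: Callable[[Path], None]) -> str:
    """Run `agent` on a throwaway copy of `repo` and return a unified diff.

    A temporary directory stands in for the cloud container: the original
    repo is never written to, and the copy is deleted when the block exits.
    Text files only; binary files would need separate handling.
    """
    with tempfile.TemporaryDirectory() as tmp:
        work = Path(tmp) / "repo"
        shutil.copytree(repo, work)
        agent(work)  # the agent may modify anything inside `work`
        rels = {p.relative_to(repo) for p in repo.rglob("*") if p.is_file()}
        rels |= {p.relative_to(work) for p in work.rglob("*") if p.is_file()}
        diff: list[str] = []
        for rel in sorted(rels):
            diff.extend(difflib.unified_diff(
                _lines(repo / rel), _lines(work / rel), f"a/{rel}", f"b/{rel}"))
        return "".join(diff)

with tempfile.TemporaryDirectory() as d:
    repo = Path(d)
    (repo / "main.py").write_text("print('v1')\n")

    def agent(work: Path) -> None:
        # Hypothetical agent: edit one file and add another.
        (work / "main.py").write_text("print('v2')\n")
        (work / "NOTES.md").write_text("done\n")

    patch = run_in_sandbox(repo, agent)
    local_copy = (repo / "main.py").read_text()
```

The caller only ever receives `patch`. Applying it stays a separate, reviewable step, which mirrors the diff-or-PR handoff described above.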

Strengths of this approach:

- Safety by isolation. The agent cannot corrupt your local files, install unwanted packages, or accidentally rm -rf something important.

- Parallel execution is natural: spin up multiple sandboxes for multiple tasks simultaneously.

- Tight integration with OpenAI’s model ecosystem, including the new GPT-5.4 Mini at 30% quota cost for sub-agent delegation.

- Cloud-based execution means your local machine is not doing the heavy lifting.

Where it struggles:

- Requires network connectivity. No internet, no Codex.

- The sandbox does not have your local environment’s quirks — custom toolchains, internal package registries, VPN-dependent services.

- Latency. Spinning up a container, cloning your repo, and running the task adds overhead that local tools avoid.

- Less “feel” for your codebase compared to tools that run directly in your project directory.

Head-to-Head Comparison

| Feature | Claude Code | GSD 2 | Codex CLI |
|---|---|---|---|
| Architecture | Direct agent | Meta-prompting orchestrator | Cloud sandbox |
| Runs locally | Yes | Yes | No (cloud containers) |
| Task decomposition | Manual | Automatic (spec-driven) | Semi-automatic |
| Context management | Single window | One task = one window | Sandbox-scoped |
| Git integration | Deep (branch, commit, diff) | Auto-commit per task | PR/diff output |
| Autonomy level | Medium (interactive by default) | High (auto mode available) | High (async execution) |
| Multi-file editing | Excellent | Excellent (via sub-agents) | Good |
| Underlying model | Claude Opus/Sonnet 4.6 | Claude (via PI SDK) | GPT-5.4 / Mini / Nano |
| Offline capable | No (API-dependent) | No (API-dependent) | No |
| Best for | Complex reasoning tasks | Large greenfield projects | Safe, isolated execution |

Pricing: The Real Math

Pricing is where theoretical comparisons meet your actual credit card.

Claude Code operates on usage-based API pricing through Anthropic, or is included with Claude Pro ($20/month) and Max ($100-200/month) subscriptions. For heavy usage, Max is the rational choice — but be warned that GSD 2’s auto mode can burn through Max plan limits quickly if left unattended. Multiple creators have flagged the risk of account issues when using automated tools against consumer subscription plans.

GSD 2 uses the same underlying Anthropic API, so the raw model costs are identical to Claude Code. However, GSD’s meta-prompting approach consumes significantly more tokens per feature. The parallel research agents, spec generation, and task decomposition all use tokens. HN users report roughly 10x token usage compared to steering Claude Code directly. The tradeoff: higher token cost, but potentially fewer failed attempts and less time spent re-prompting.

Codex CLI runs on OpenAI’s pricing. The new GPT-5.4 models create an interesting cost optimization: use full GPT-5.4 for planning (expensive, smart) and Mini at 30% quota for execution (cheap, fast). This tiered approach can bring per-feature costs below Claude-based tools for well-structured tasks.
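The tiered approach is easy to quantify in quota units. This sketch takes the 30% figure from the text and assumes every call is the same size. The mix of one planning call to nine execution calls is invented for illustration.

```python
# Relative quota weights. The 0.30 figure for Mini comes from the text above;
# the dictionary keys are illustrative labels, not official API model IDs.
QUOTA_WEIGHT = {"gpt-5.4": 1.0, "gpt-5.4-mini": 0.30}

def quota_used(calls: dict[str, int]) -> float:
    """Quota consumed, in units of one full GPT-5.4 call (assumes equal-sized calls)."""
    return sum(QUOTA_WEIGHT[model] * n for model, n in calls.items())

all_full = quota_used({"gpt-5.4": 10})                  # plan and execute on the big model
tiered = quota_used({"gpt-5.4": 1, "gpt-5.4-mini": 9})  # 1 planning call, 9 execution calls
savings = 1 - tiered / all_full                         # about 63% less quota
```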

Bottom line: For a typical mid-size feature (touching 5-15 files), expect roughly:

- Claude Code: $0.50–$3.00 depending on complexity and thinking depth

- GSD 2: $3.00–$15.00 (more tokens, but potentially done in one pass)

- Codex CLI: $1.00–$5.00 (with Mini delegation optimizing the expensive parts)
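These ranges come from simple token arithmetic. The sketch below assumes $3 per million input tokens, $15 per million output tokens, and made-up token counts for one feature, so substitute your provider’s current prices. The second call models the roughly 10x token usage HN users reported for GSD 2.

```python
def feature_cost(input_tokens: int, output_tokens: int,
                 usd_per_m_input: float, usd_per_m_output: float) -> float:
    """API cost in USD for one feature, given per-million-token prices."""
    return (input_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1_000_000

# Assumed prices and token counts, for illustration only.
PRICE_IN, PRICE_OUT = 3.00, 15.00  # USD per million input / output tokens
direct = feature_cost(200_000, 20_000, PRICE_IN, PRICE_OUT)
orchestrated = feature_cost(2_000_000, 200_000, PRICE_IN, PRICE_OUT)  # ~10x the tokens

print(f"direct: ${direct:.2f}, orchestrated: ${orchestrated:.2f}")
# prints "direct: $0.90, orchestrated: $9.00"
```

Cost is linear in tokens, so a 10x token multiplier at the same prices produces a 10x bill.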

The Autonomy Spectrum

These tools sit at different points on the autonomy spectrum, and your preference here probably determines your choice more than any feature comparison.

Claude Code is, by default, interactive. It asks before making changes, shows you diffs, and waits for approval. You can run it with --dangerously-skip-permissions for more autonomy, but the design philosophy is collaborative: human and agent working together.
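The difference between the default mode and the skip-permissions mode comes down to where an approval check sits. This is a toy model built on assumptions. `make_gate` and the scripted `reviewer` are hypothetical, and Claude Code’s real permission system is more granular than a single on/off switch.

```python
from typing import Callable

def make_gate(skip_permissions: bool, ask: Callable[[str], bool]) -> Callable[[str], bool]:
    """Return a predicate deciding whether a proposed action may run.

    `skip_permissions` plays the role of --dangerously-skip-permissions in this
    toy model; it is not Claude Code's actual implementation.
    """
    def gate(action: str) -> bool:
        if skip_permissions:
            return True       # autonomous: every action runs unreviewed
        return ask(action)    # interactive: a human approves or rejects each action
    return gate

# Hypothetical scripted "human" who approves edits but rejects shell commands.
def reviewer(action: str) -> bool:
    return action.startswith("edit")

interactive = make_gate(False, reviewer)
autonomous = make_gate(True, reviewer)
decisions = {a: (interactive(a), autonomous(a)) for a in ["edit src/app.py", "run rm -rf build/"]}
```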

GSD 2 is designed for fire-and-forget autonomy. The entire workflow — from spec generation through implementation — can run without human intervention in auto mode. The system front-loads human input (the discussion and approval phases) so that execution can be fully autonomous. This is philosophically different: invest more time upfront in specification, get hands-off execution.

Codex CLI enables async autonomy by design. Give it a task, let it run in the cloud, come back later to review the PR. This is the closest to “AI teammate” rather than “AI pair programmer” — it works while you do other things.

Git Workflow Integration

For professional developers, how a tool handles git is not a nice-to-have — it is the workflow.

Claude Code has the deepest native git understanding. It reads your commit history to understand conventions, creates branches, produces atomic commits with meaningful messages, handles merge conflicts, and can even write PR descriptions. Because it runs in your actual repo, every git operation is real and immediate.

GSD 2 produces clean git histories by design. Each completed task gets its own commit with a descriptive message. The spec-driven approach means commit messages map to roadmap items, giving you a readable history of what was built and why. However, because GSD orchestrates sub-agents and automates the git operations, you get less flexibility for custom branching strategies.
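A one-commit-per-task history boils down to a couple of git commands per completed task. The helper below is hypothetical, and so is its `<roadmap id>: <title>` message format. It defaults to a dry run that only returns the commands, so it works outside a git repository.

```python
import shlex
import subprocess

def commit_task(roadmap_id: str, title: str, dry_run: bool = True) -> list[str]:
    """Make one commit for one completed task, with the roadmap item in the message.

    The "<roadmap id>: <title>" message format is a hypothetical convention.
    With dry_run=True, nothing touches git; the commands are only returned.
    """
    message = f"{roadmap_id}: {title}"
    commands = [["git", "add", "--all"], ["git", "commit", "-m", message]]
    if not dry_run:
        for argv in commands:
            subprocess.run(argv, check=True)  # run inside the repository
    return [shlex.join(argv) for argv in commands]

log = commit_task("2.1", "Add login form")
```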

Codex CLI outputs diffs and pull requests rather than committing directly to your repo. This is a safety feature: you review the PR before any code reaches your main branch. For teams with strict code review policies, this fits naturally into existing workflows. The downside is the extra step — you cannot just git push and move on.

The Fourth Player: Mistral Forge

Mistral’s Forge launched this week to 669 points on Hacker News — more engagement than GSD 2. But Forge is not quite a coding agent CLI in the same category. It is an enterprise platform for training custom AI models on proprietary codebases and data.

Why does it matter here? Because Forge represents a different thesis about developer tooling: instead of using a general-purpose model with clever orchestration (GSD) or powerful prompting (Claude Code), train a model that already understands your code.

Forge supports pre-training on internal codebases, post-training for specific tasks, and reinforcement learning aligned to internal policies. The agent-first design means their autonomous coding agent, Mistral Vibe, can use Forge to fine-tune models, find optimal hyperparameters, and generate synthetic data — all through natural language.

For now, Forge is an enterprise play (partnerships with ASML, Ericsson, European Space Agency). But the underlying bet — that domain-specific models will outperform general models with fancy prompting — could reshape the coding agent landscape if it proves out. Keep an eye on Devstral 2, their dedicated coding model, as it matures.

The Open-Source Alternative

Worth noting: LangChain just published a reference architecture for building your own Claude Code equivalent entirely on open-source components. The stack: Nvidia’s Nemotron 3 Super as the model, OpenShell as the secure runtime, and Deep Agents as the harness. The architecture decomposes into the same model → runtime → harness → agent pattern that all three commercial tools use.

This matters because the architecture is no longer proprietary: it is a documented, reproducible pattern with interchangeable components at every layer. If you want full control (and are willing to handle the setup), you can build a competitive coding agent CLI without paying per-token API fees to anyone. You still pay for the hardware that runs the model.
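The layering can be expressed as interfaces, and that is what makes each component swappable. In this sketch the `Model` and `Runtime` protocols, the `Harness` class, and the toy components are all hypothetical. They are not LangChain’s Deep Agents API or any vendor SDK.

```python
from dataclasses import dataclass
from typing import Protocol

class Model(Protocol):
    def complete(self, prompt: str) -> str: ...

class Runtime(Protocol):
    def execute(self, command: str) -> str: ...

@dataclass
class Harness:
    """Glues a model to a runtime: the model proposes a command, the runtime runs it."""
    model: Model
    runtime: Runtime

    def step(self, goal: str) -> str:
        command = self.model.complete(goal)
        return self.runtime.execute(command)

# Toy components: any object with a matching method can be swapped in at either layer.
class EchoModel:
    def complete(self, prompt: str) -> str:
        return f"echo {prompt}"

class DryRunRuntime:
    def execute(self, command: str) -> str:
        return f"[dry-run] {command}"

# The "agent" layer: a harness wired to concrete components and pointed at a goal.
agent = Harness(EchoModel(), DryRunRuntime())
result = agent.step("hello")
```

You could replace `EchoModel` with a client for a hosted or self-hosted model, or `DryRunRuntime` with a sandboxed shell. `Harness` would not change.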

For a deeper look at self-hosted options, see our guide to the best self-hosted AI agents in 2026.

Which One Should You Actually Use?

After testing all three, here is the honest recommendation:

Choose Claude Code if you are an experienced developer who wants a powerful pair programmer in your terminal. You prefer to stay in control, you are comfortable decomposing large tasks yourself, and you value deep reasoning over automation. Claude Code is the best tool for complex, architecturally significant changes where getting the reasoning right matters more than getting it done fast.

Choose GSD 2 if you are building a greenfield project or large feature and want to describe what you want, then walk away. GSD is the right choice when the project is big enough that context rot becomes a real problem, and you are willing to pay more in tokens for more reliable output. Solo developers and small teams building new products will find the spec-driven approach particularly valuable.

Choose Codex CLI if safety and isolation matter most — you are working on production codebases, you need async execution that does not block your workflow, or your team has strict PR-based review processes. The cloud sandbox model is also the right choice if you want to run multiple tasks in parallel without worrying about conflicts.

What Comes Next

The coding agent CLI space is consolidating around a shared architecture (model + runtime + harness + agent) while differentiating on philosophy. GSD 2 bets on specification and decomposition. Claude Code bets on raw reasoning power. Codex CLI bets on isolation and safety. Mistral Forge bets on domain-specific models.

The real question is not which tool wins — it is whether the standalone CLI model survives at all. IDE-integrated agents like Cursor and GitHub Copilot keep getting more powerful, and most developers spend their time in editors, not terminals. The CLI tools need to prove that the terminal-native workflow offers something the IDE cannot: deeper autonomy, better orchestration, and tighter integration with the Unix philosophy of composable tools.

For now, the CLI coding agents are the sharpest tools in the shed. Use them while they are ahead.

For a broader overview of AI coding tools, see our complete guide to AI coding agents and top 10 AI coding agents in 2026.