UI-TARS-desktop Review: ByteDance’s Visual Agent

ByteDance's UI-TARS-desktop hit GitHub trending #6 inside the Chinese-AI surge week. How the visual-agent stack stacks up against Claude and Operator.

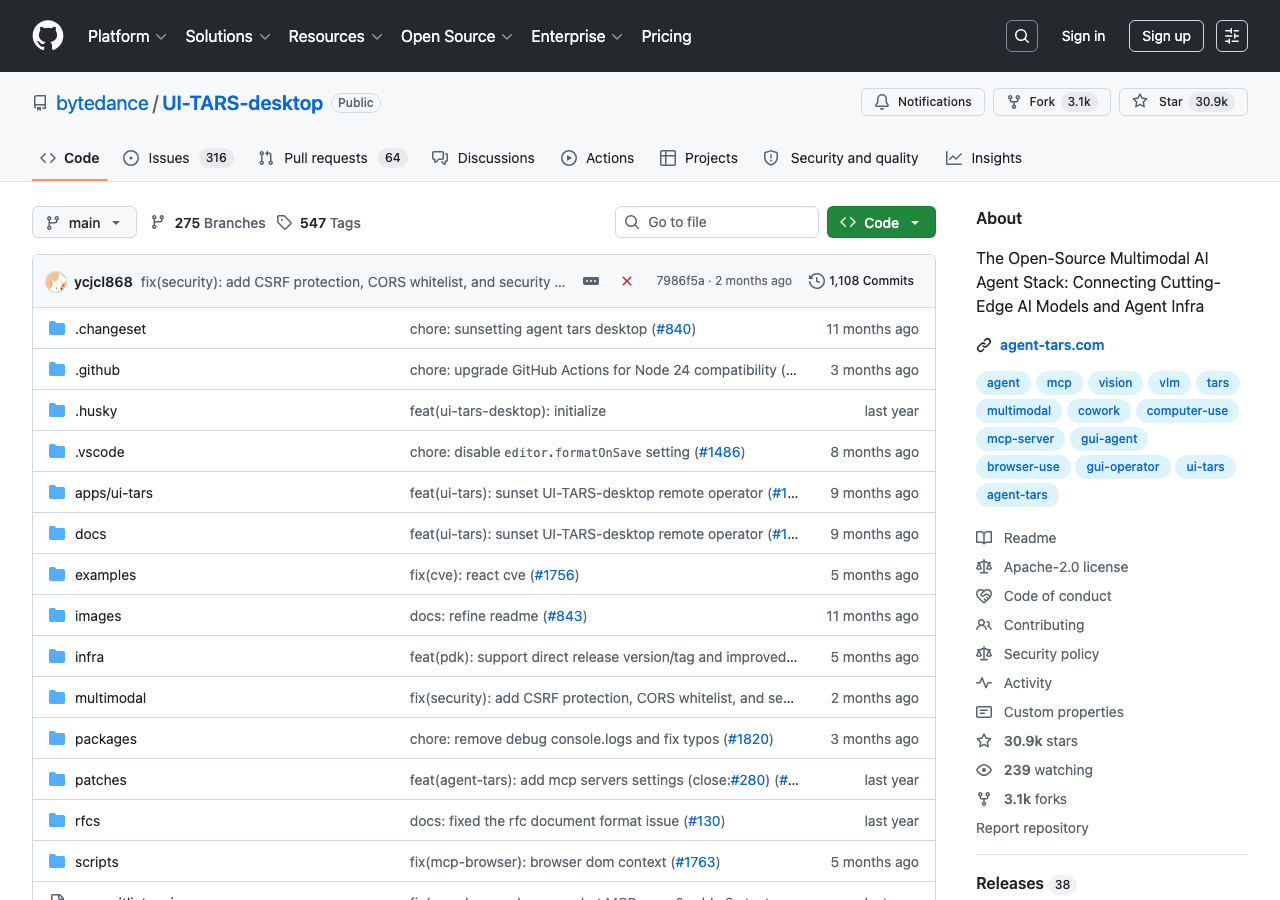

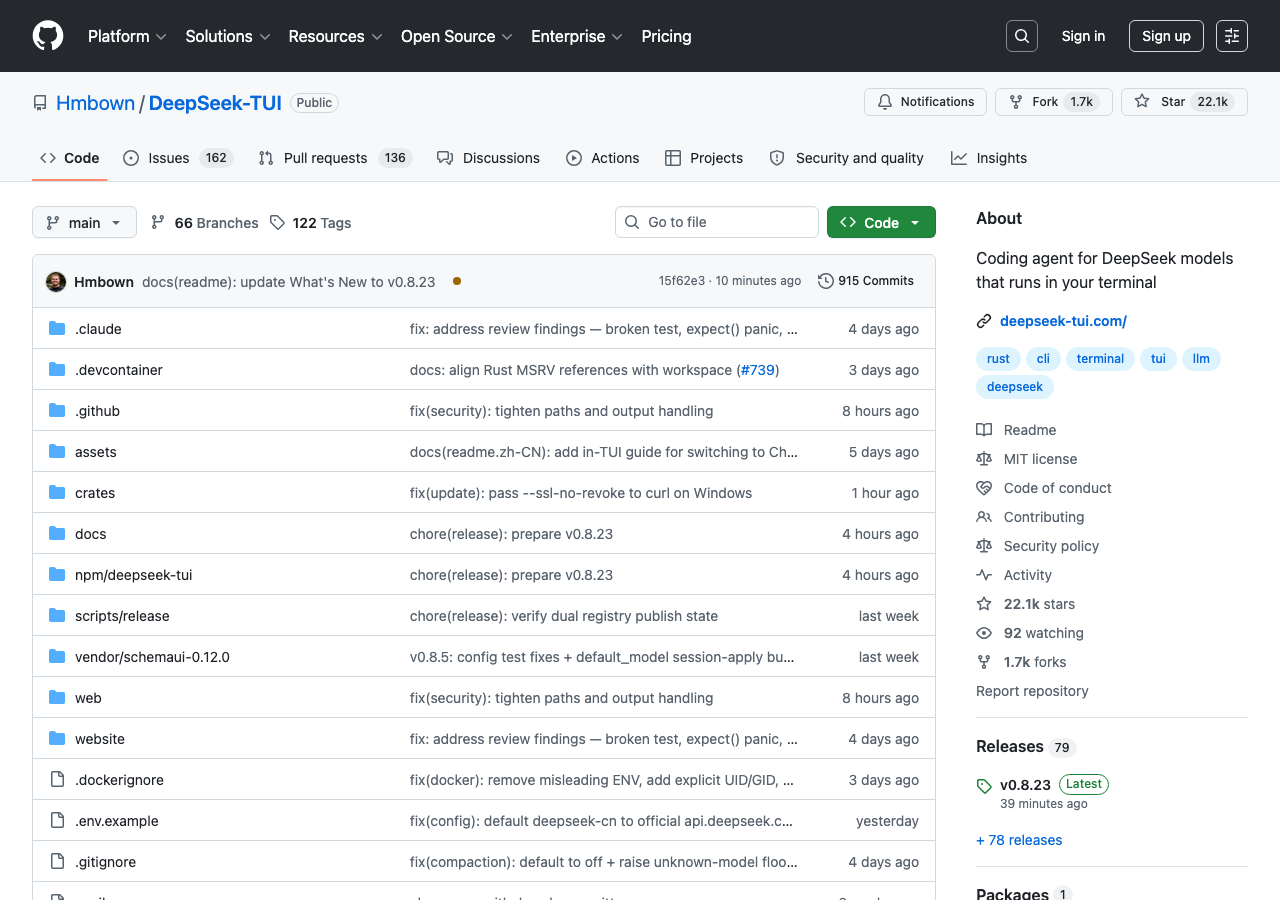

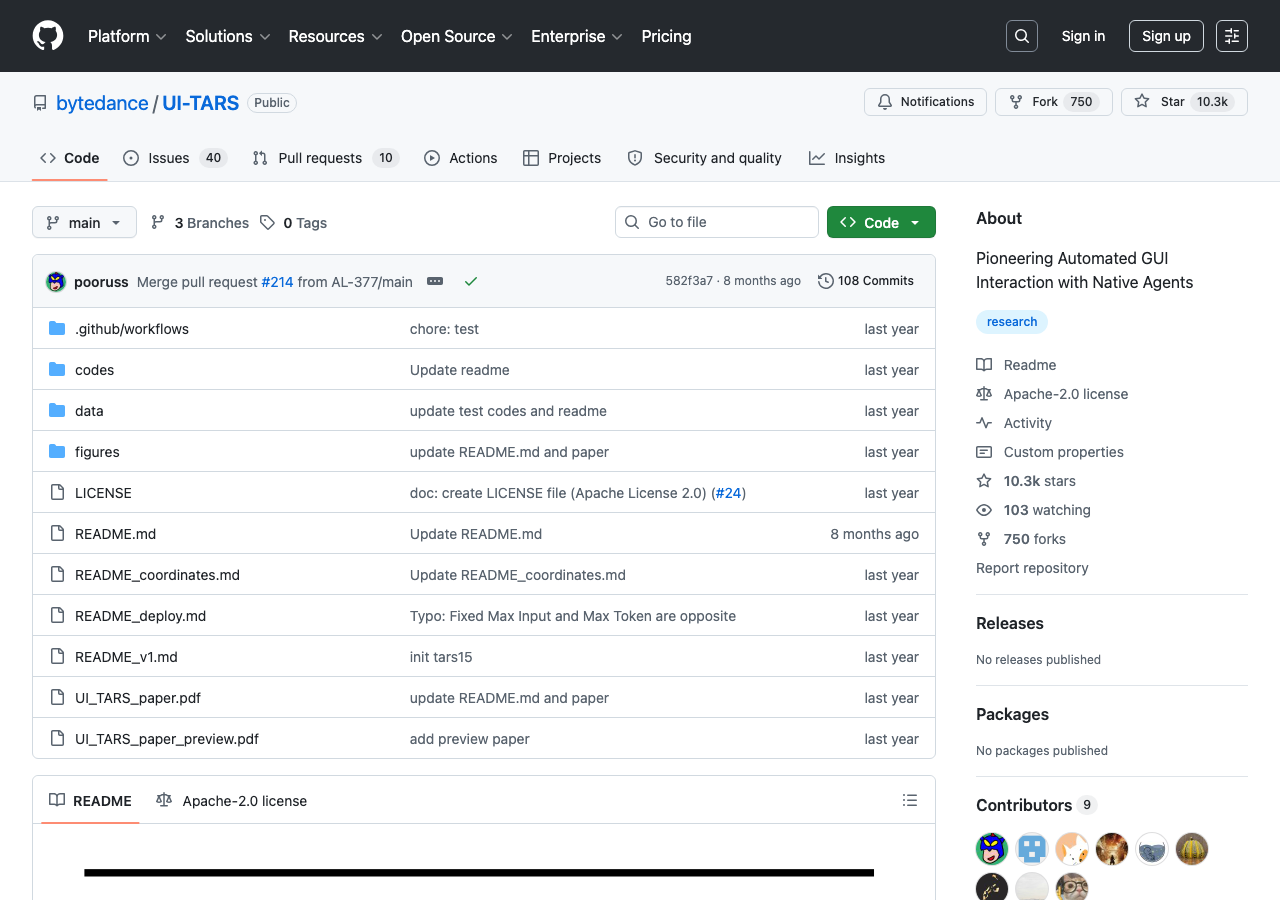

This week, bytedance/UI-TARS-desktop sat at GitHub trending #6, and it did not get there alone. It rode in alongside Hmbown/DeepSeek-TUI at #1 (day 2 at 3,827 stars/day, positioned by its README as a “Claude Code killer”) and datawhalechina/hello-agents at #8. Pair that with Nathan Lambert’s “Notes from inside China’s AI labs” on Substack and r/technology’s “The world is trying to log off U.S. tech” on Reddit (6,065 points), and the supply-side picture for May 2026 is clear: the Chinese model+tooling ecosystem is shipping faster than the Western one this week, and visual computer-use is one of its strongest beachheads.

UI-TARS-desktop is the visual-agent piece of that surge. It is open-source, multimodal, and built specifically to do what Anthropic’s Computer Use and OpenAI’s Operator do — see your screen, move your mouse, type on your keyboard — but with a different model lineage and a different licensing posture. This review walks through what UI-TARS-desktop is, how it performs against the two Western incumbents, and where it fits inside AgentConn’s existing computer-use coverage.

What UI-TARS-desktop Actually Is

The repository description is direct: “The Open-Source Multimodal AI Agent Stack: Connecting Cutting-Edge AI Models and Agent Infra.” The project ships two pieces — Agent TARS (a general multimodal stack across terminal, computer, browser, and product surfaces) and UI-TARS-desktop (the local-machine GUI agent). The desktop product is the one that sat on GitHub trending #6 this week and the one most operators will encounter first.

Under the hood, UI-TARS-desktop is driven by ByteDance’s UI-TARS family of models — most recently the Seed-1.5-VL/1.6 series and the UI-TARS-1.5 multimodal language model. The architecture is a pure visual-agent design: the model takes screenshots of the user’s screen, reasons over them, and emits mouse/keyboard actions back. There is no DOM access, no application API integration, no privileged hooks. The agent operates only through what it can see.
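
The screenshot-in, action-out loop described above can be sketched in a few lines. This is a minimal illustration of the pattern, not UI-TARS-desktop's actual code: the `Action` type, the `run_agent` function, and the `capture`, `decide`, and `execute` callables are all hypothetical names, and the vision model is left as a pluggable function. The default step budget of 15 is an arbitrary choice.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Action:
    """A single GUI action. All field names here are hypothetical."""
    kind: str                  # "click", "type", or "done"
    x: Optional[int] = None
    y: Optional[int] = None
    text: Optional[str] = None


def run_agent(
    task: str,
    capture: Callable[[], bytes],
    decide: Callable[[str, bytes, List[Action]], Action],
    execute: Callable[[Action], None],
    max_steps: int = 15,
) -> List[Action]:
    """Perceive-reason-act loop: pixels in, mouse/keyboard events out."""
    history: List[Action] = []
    for _ in range(max_steps):
        screenshot = capture()                      # perceive: raw screen pixels only
        action = decide(task, screenshot, history)  # reason: the model picks the next action
        history.append(action)
        if action.kind == "done":                   # the model signals completion
            break
        execute(action)                             # act: synthesize the input event
    return history
```

Because the loop only ever sees a screenshot and only ever emits input events, the same structure works against any application, which is the point of the no-DOM, no-API design.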

This matches the architectural family of Anthropic’s Claude Computer Use and OpenAI’s Operator — but with one distinction worth underlining: UI-TARS-desktop ships as an open-source desktop application, not as an API-only product or a hosted operator. You install it on your own machine. The model can be local or remote. The agent runs against your local screen.

The recent v0.2.0 release added two notable features: Remote Computer Operator and Remote Browser Operator — both free. That is the open-source-first packaging that operators in the Chinese-tooling cluster have been shipping all week.

The Cross-Source Evidence For Why It Matters Now

The reason to write this review this week — and not last month, when the underlying UI-TARS repo crossed 27K stars — is the cross-source convergence. Five independent vectors point in the same direction:

GitHub trending. Three Chinese repos in the top 10: DeepSeek-TUI #1, UI-TARS-desktop #6, hello-agents #8. The Chinese model+tooling cluster is dominating top-of-funnel developer attention.

Substack. Lambert’s “Notes from inside China’s AI labs” is the qualitative inside view of the shift that GitHub trending captures quantitatively. Lambert’s read: the Chinese ecosystem is producing real software at speed, and its OSS publication cadence is higher than the Western one this week.

Reddit. The r/technology piece “The world is trying to log off U.S. tech” hit 6,065 points, the demand-side mirror of the supply-side surge.

X. A post from @jbhuang0604 — “Keep getting rate-limited by Claude, so I tried DeepSeek V4 — holy crap the cost” — drew 6,313 likes. That is the operator-level price-pressure mechanism behind the supply shift, captured in one tweet.

Tooling. farion1231/cc-switch, itself GitHub trending #3 this week, explicitly lists Claude Code, Codex, OpenCode, openclaw, and Gemini CLI as switchable backends. The infrastructure for swapping in non-American agents is mature.

Five vectors, one realignment. That is the cross-source picture this review sits inside.

Performance: How It Stacks Up

UI-TARS is one of the few open-source visual-agent stacks with credible published benchmarks against the Western incumbents.

The numbers cited in VentureBeat’s writeup and confirmed in the ByteDance UI-TARS paper materials:

- VisualWebBench: UI-TARS 72B at 82.8%, GPT-4o at 78.5%, Claude 3.5 Sonnet at 78.2%. UI-TARS leads on web-page reasoning.

- OSWorld (50 steps): UI-TARS at 24.6, Claude Computer Use at 22.0. UI-TARS leads on general desktop tasks at higher step budgets.

- OSWorld (15 steps): UI-TARS at 22.7, Claude at 14.9. UI-TARS’ lead grows under tighter step budgets.

- OSWorld for newer UI-TARS-2: 47.5%. AndroidWorld: 73.3%. WindowsAgentArena: 50.6%.

- OpenAI Operator on OSWorld: 38.1% (a record at launch, but trailing UI-TARS-2’s 47.5%).

- Claude Computer Use on SWE-bench Verified: 49%. This is a different benchmark family, but the comparison WorkOS pulled together is useful for shape: Claude is stronger on web/coding tasks, Operator on standalone online tasks, and UI-TARS on cross-domain work (web, mobile, and Windows).
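
To make the step-budget comparison concrete, the margins can be computed directly from the two OSWorld rows quoted above. This is a small sketch; the dictionary layout and the `lead` helper are illustrative only.

```python
# OSWorld scores as quoted in the list above, keyed by step budget.
osworld = {
    50: {"UI-TARS": 24.6, "Claude Computer Use": 22.0},
    15: {"UI-TARS": 22.7, "Claude Computer Use": 14.9},
}


def lead(scores: dict) -> float:
    """Absolute lead of UI-TARS over Claude, in points, rounded to one decimal."""
    return round(scores["UI-TARS"] - scores["Claude Computer Use"], 1)


for budget in sorted(osworld):
    print(f"{budget} steps: UI-TARS leads by {lead(osworld[budget])} points")
```

The lead is 2.6 points at 50 steps and 7.8 points at 15 steps, so the gap roughly triples when the step budget tightens.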

The mobile gap matters. Anthropic’s Claude Computer Use, by ByteDance’s own measurement, “performs strongly in web-based tasks but significantly struggles with mobile scenarios — the GUI operation ability of Claude has not been well transferred to the mobile domain.” UI-TARS is the only one of the three that ships strong cross-domain coverage out of the box. For agent operators whose use cases include a phone or a tablet, that is the structural difference.

Where UI-TARS-desktop Fits in the Stack

The framing this review wants AgentConn readers to take away is not “swap Claude for UI-TARS.” It is “understand which agent is the right tool for which environment.” Drawing from our existing computer-use comparison and the new benchmark data:

| Use case | Best agent | Why |
|---|---|---|
| Web research and form-filling, U.S. only | OpenAI Operator | Strongest standalone-online benchmark; managed environment |
| Desktop app automation on your own machine | Claude Computer Use or UI-TARS-desktop | Both run locally; Claude has the stronger ecosystem, UI-TARS has the stronger benchmarks |
| Mobile + desktop + web cross-domain | UI-TARS-desktop | Only one of the three with strong mobile transfer |
| Open-source, self-hosted, no U.S. dependency | UI-TARS-desktop | Open weights, open repo, runs against any model backend |
| Highest-volume, cost-sensitive automation | UI-TARS-desktop | Free remote operators in v0.2.0; no per-action API price |
| Fastest commercial setup, no infra | OpenAI Operator | $200/month; no install required |
| Best browser-domain reasoning | UI-TARS or Claude (both >78% VisualWebBench) | UI-TARS leads at 82.8%; Claude (78.2%) is roughly level with GPT-4o (78.5%) |

The cost-sensitivity row is what is driving the operator defection signal we cited above. When a single high-volume browser agent is running thousands of steps a day, the API-priced incumbents become expensive faster than most operators expect. UI-TARS-desktop running locally — or against an inexpensive remote provider — is the open-source answer to that cost curve.
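
The cost curve is easy to reason about with rough numbers. The sketch below uses entirely hypothetical figures: the per-step prices and step counts are placeholders chosen for illustration, not published pricing for any product. It only shows how a per-step price scales linearly with volume.

```python
def monthly_cost(steps_per_day: int, price_per_step: float, days: int = 30) -> float:
    """Linear cost model: each agent step (screenshot plus model call) is billed once."""
    return round(steps_per_day * price_per_step * days, 2)


# All prices below are hypothetical placeholders, not real vendor pricing.
HYPOTHETICAL_API_PRICE = 0.01     # USD per step on a metered API
HYPOTHETICAL_LOCAL_PRICE = 0.001  # USD per step, amortized local compute

for steps in (500, 5_000, 50_000):
    api = monthly_cost(steps, HYPOTHETICAL_API_PRICE)
    local = monthly_cost(steps, HYPOTHETICAL_LOCAL_PRICE)
    print(f"{steps:>6} steps/day: metered ${api:,.2f}/mo vs local ${local:,.2f}/mo")
```

Swap in your own measured step counts and actual provider rates before drawing conclusions; the model ignores fixed costs such as hardware and operator time.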

What’s Still Missing

UI-TARS-desktop is not a finished product. The honest review:

- Documentation density is lower than Anthropic’s. Anthropic ships polished how-to material for every Computer Use feature. UI-TARS’ docs are repository-centric — README plus inline SDK docs. Operators coming from Claude will feel the gap.

- Tooling ecosystem is younger. Claude has cc-switch, integration with the Claude Code agentic stack, and third-party MCP servers. UI-TARS-desktop is closer to “framework + CLI” than “ecosystem.”

- Safety reasoning is less developed. Anthropic ships safety filters and refusal patterns by default; OpenAI Operator has a managed-environment safety layer. UI-TARS is closer to a research-grade agent and expects the operator to apply guardrails. For consumer-facing or untrusted-environment deployments, that gap is real.

- English-language community is smaller than the Chinese-language community. Most of the operator commentary, recipes, and examples are in Chinese first, English second. datawhalechina/hello-agents is the canonical example: GitHub trending #8 this week, but the resource is Chinese-first.

These are gaps to know about, not reasons to skip the project. The license, the benchmarks, and the cross-domain coverage are the headline reasons to add UI-TARS-desktop to your evaluation list.

Setup Notes For AgentConn Readers

If you want to try UI-TARS-desktop today:

- Install the desktop app. Releases are at github.com/bytedance/UI-TARS-desktop/releases. macOS, Windows, and Linux builds are published.

- Pick a model backend. UI-TARS-1.5 weights are available on the open ByteDance model hubs; alternatively, point the desktop app at a hosted UI-TARS endpoint or a vision-capable model of your choice via the SDK.

- Try the v0.2.0 Remote Operators. Both Computer Operator and Browser Operator are free and a low-friction first run — you do not need to ship a local model just to evaluate the agent loop.

- For evaluation, run the same task on Claude Computer Use, OpenAI Operator, and UI-TARS-desktop. This is the only honest way to compare. Benchmarks tell you about the average case; your specific task will tell you about your case.

- Pair it with a multi-agent switcher like cc-switch if you want to keep Claude or Codex available alongside it. The paired-agent pattern works for visual agents the same way it works for coding agents.
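
The side-by-side evaluation in the list above can be organized with a small harness. This is a sketch under stated assumptions: `AgentRunner`, `RunResult`, and `compare` are hypothetical names, and each runner is a placeholder you would wire to the real agent yourself. None of this is an API shipped by any of the three products.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# A runner takes a task description and returns (success, steps_used).
# Connecting a runner to a real agent is left to the reader.
AgentRunner = Callable[[str], Tuple[bool, int]]


@dataclass
class RunResult:
    agent: str
    success: bool
    steps: int
    seconds: float


def compare(task: str, runners: Dict[str, AgentRunner]) -> List[RunResult]:
    """Run the same task once per agent and collect comparable metrics."""
    results = []
    for name, run in runners.items():
        start = time.perf_counter()
        success, steps = run(task)
        results.append(RunResult(name, success, steps, time.perf_counter() - start))
    # Successful runs first, then the runs that needed fewer steps.
    return sorted(results, key=lambda r: (not r.success, r.steps))
```

A single run per agent is noisy; repeating each task several times and comparing success rates gives a fairer picture than one pass.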

What This Means For The Visual-Agent Category

The shape of the visual-agent market through Q3 2026 is now clearer than it was a month ago. Three credible production-grade systems exist: Claude Computer Use (best ecosystem), OpenAI Operator (best managed surface), UI-TARS-desktop (best benchmarks + open-source). Each occupies a distinct lane. The category is not winner-take-all.

The harder question is what happens to the next round of entrants. Six months ago, visual computer use was a category with two American incumbents. Today it is a category with two American incumbents and one open-source Chinese challenger that leads both on the OSWorld figures cited above. By the time the next round of model launches lands, the lane structure will not be optional: every operator stack will have to take a position on which agent runs which screen.

UI-TARS-desktop is the open-source position. The cross-source signals this week are saying that position is mature enough to deploy.

Related AgentConn coverage: Best AI Computer-Use Agents 2026 covers the broader market. DeepSeek-TUI: The Hermes Anti-Anthropic Stack covers the coding-agent counterpart in the same Chinese-tooling surge.