GenericAgent and EvoMap: How AI Grows Its Own Skill Trees

GenericAgent and EvoMap hit 800+ GitHub stars/day building AI that grows its own skill trees. How each works, and the security risk nobody mentions.

GenericAgent and EvoMap: How AI Grows Its Own Skill Trees

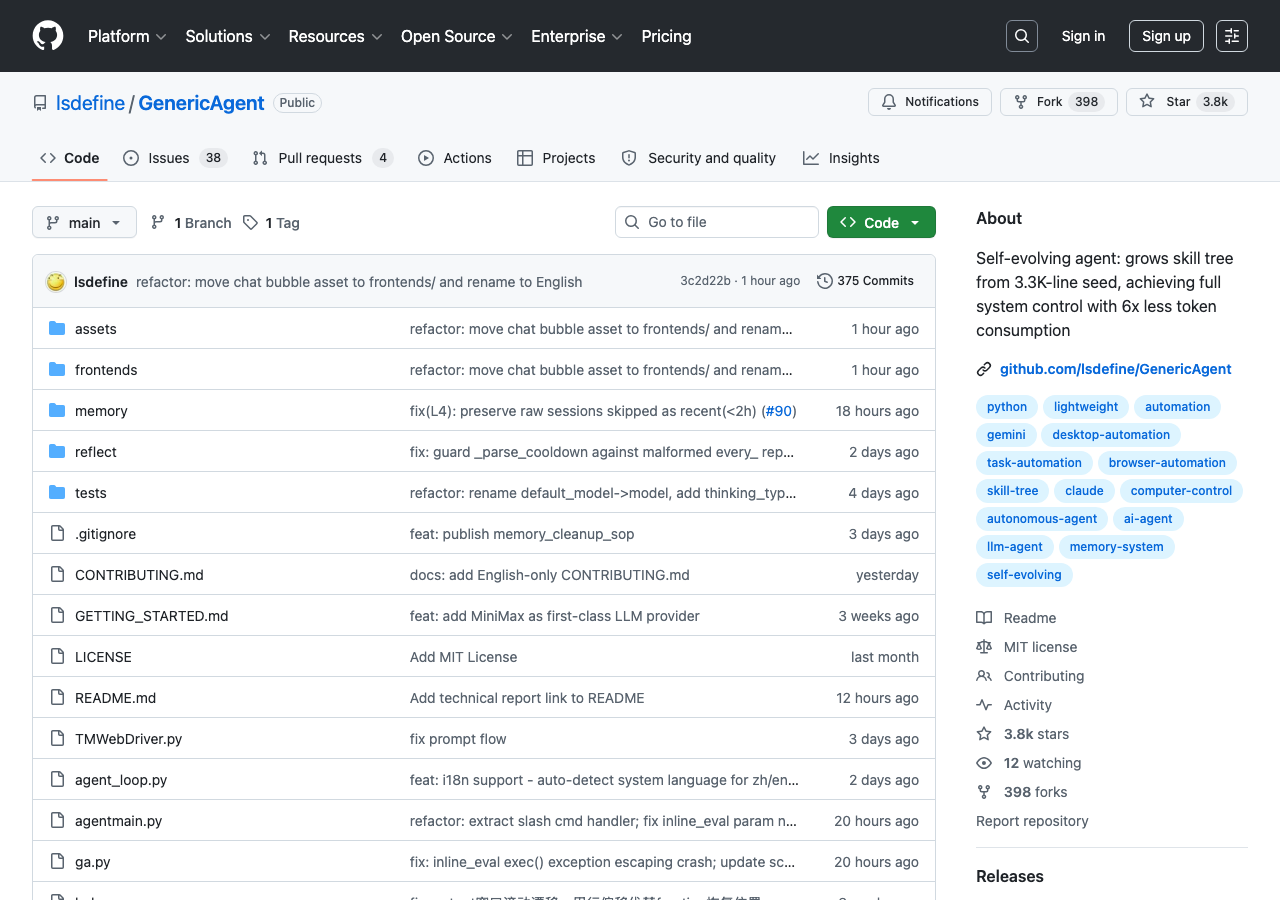

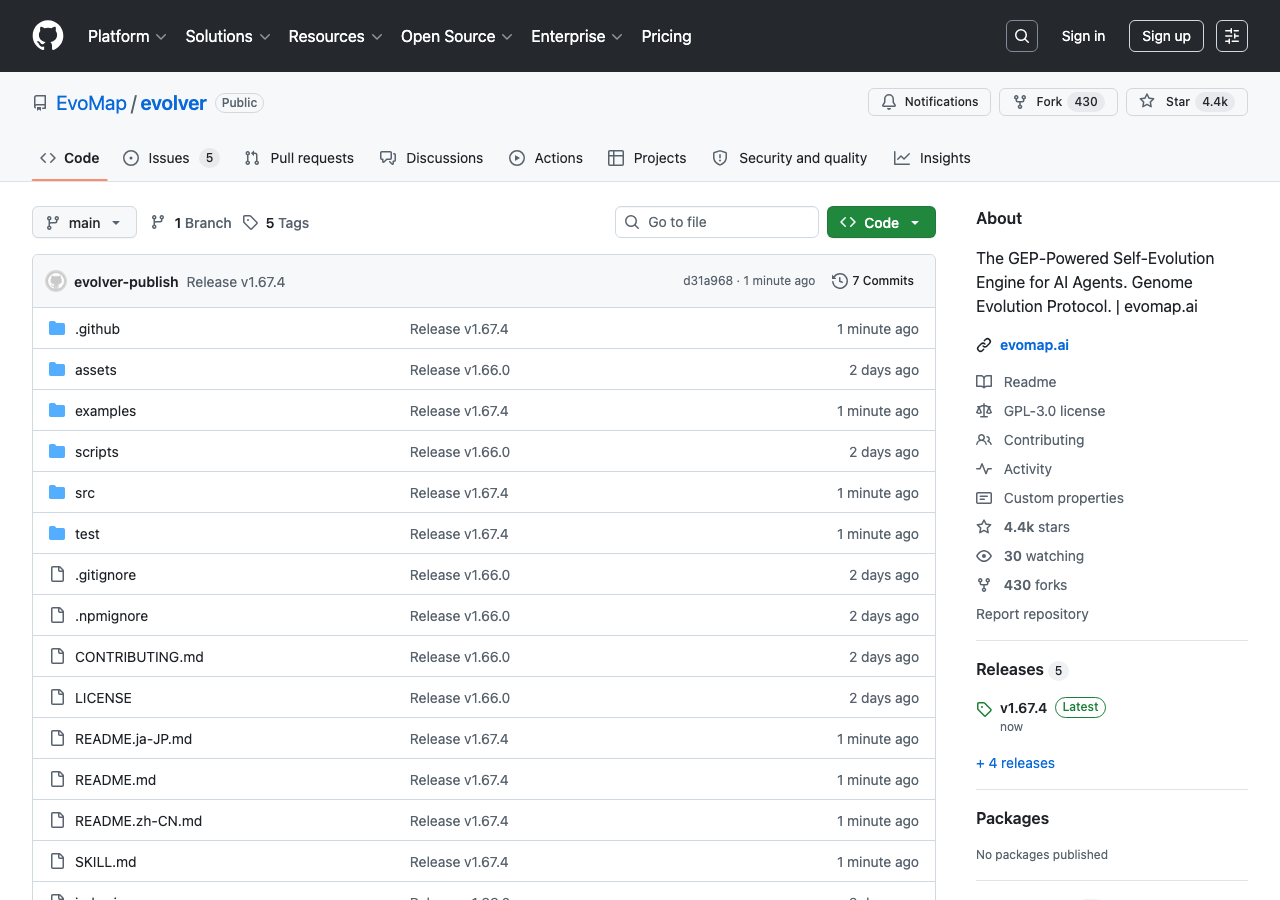

GitHub trending today: two repos at 800+ stars per day, both built around the same idea. GenericAgent hit 848 stars Thursday. EvoMap/evolver hit 750. Both describe agents that accumulate capabilities from their own execution history. Neither one retrains a model. Both are working in production deployments.

The category is called self-evolving agents. It’s been an academic concept since 2023. What’s different in April 2026 is that the implementations are arriving, they’re open-source, and the organic velocity suggests the developer community is treating them seriously — not as research curiosities, but as infrastructure.

This article covers what GenericAgent and EvoMap actually do technically, how they differ from each other and from older approaches, the security exposure they create, and what the current evidence says about whether the “self-evolving” claim holds up.

What Self-Evolving Agents Actually Are (and Aren’t)

The phrase “self-evolving” is doing a lot of work. Before going further, the distinction that matters most:

None of these systems modify model weights. GenericAgent, EvoMap, OpenSpace, and the other repos trending this week are not fine-tuning their underlying LLMs. They are not doing RL on the fly. They are accumulating structured artifacts — skills, gene fragments, capsules, playbooks — as external memory that grows over time.

Two recent academic surveys have converged on the same framework for understanding this. The feedback loop is: agent executes a task → environment responds → optimizer extracts patterns → skill store is updated → next execution draws on those patterns. The agent gets more capable with each cycle not because the model improves, but because the tools available to the model improve.

This distinction matters for managing expectations. What these systems do extremely well: compress common task patterns into reusable primitives, avoid re-solving problems already solved, and reduce token consumption dramatically. What they don’t do: generalize to genuinely novel domains the underlying model couldn’t handle, or improve their reasoning. The “self-evolving” label is accurate for the skill layer. It’s misleading if applied to the model.

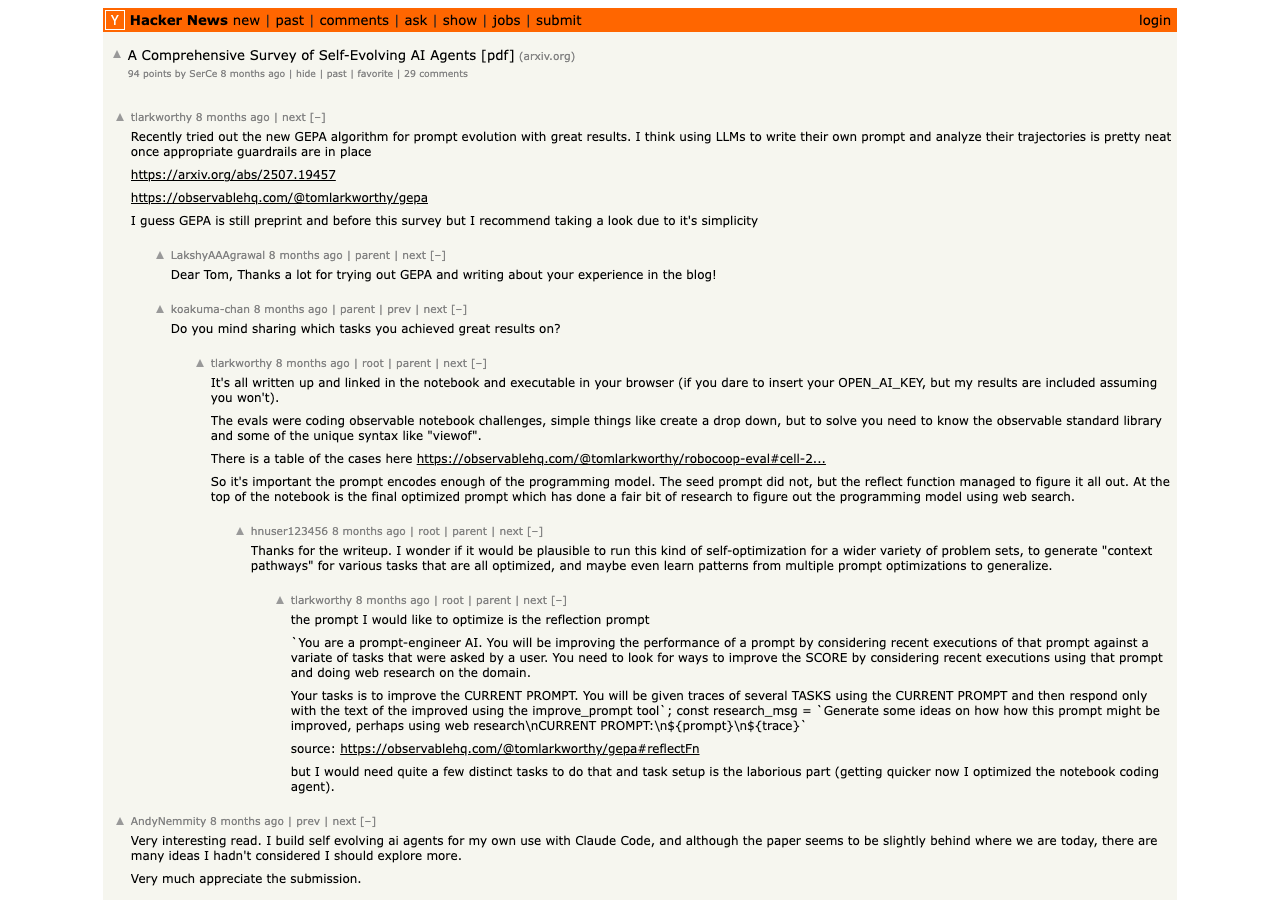

A Hacker News thread on the comprehensive survey paper (94 points, 29 comments) laid this out plainly: the skeptic position is that LLMs can’t truly learn without fine-tuning or RL — “self-improvement is really prompt/tool optimization, not weight updates.” The practitioner position, from people who’ve actually built these systems, is that process recursion (skill accumulation, which works) is genuinely valuable even if it’s not weight modification (genuine learning, which requires training).

Both are correct. The skill-tree approach is a real capability improvement. Just not the one the marketing usually implies.

GenericAgent: The Skill Tree Approach

GenericAgent makes its design philosophy explicit in the README: “grows a skill tree from a 3,300-line seed, achieving full system control with 6x less token consumption.”

The architecture is minimal by design. Nine atomic tools (browser, terminal, filesystem, keyboard/mouse, screen vision, mobile ADB) sit beneath a ~100-line agent loop. No preloaded skill library. No custom tooling scaffolding. The skills emerge from execution.

Every time the agent successfully completes a task, it crystallizes the approach into a reusable skill. The next time a similar task appears, the agent retrieves the relevant skill from its tree rather than reasoning from scratch. Over time the tree grows — from that 3,300-line seed to a comprehensive library of the agent’s accumulated operational knowledge.

The “6x less token consumption” claim is the most concrete assertion in the README and the one worth examining. It’s not a performance improvement — it’s an architectural consequence. Most competing agentic frameworks (the LangChain and AutoGPT lineage) maintain running context windows that balloon as tasks get complex. Context windows at 200K-1M tokens are normal in production deployments. GenericAgent operates in under 30K tokens by design. Each skill retrieval replaces what would otherwise be a multi-turn planning session burning context.

This is the real competitive claim: skill accumulation as a context compression strategy. It trades generalizability (the model can’t use skills it hasn’t crystallized yet) for efficiency (the model doesn’t burn tokens re-solving problems it’s seen before).

The April 2026 update added L4 session archive memory and scheduler/cron integration — meaning skills can now persist across sessions and agents can execute scheduled tasks autonomously. The agent bootstraps itself entirely; no manual terminal commands during initial setup.

Supported backends: Claude, Gemini, Kimi, MiniMax. Frontend integrations: WeChat, Telegram, Feishu, DingTalk, QQ — which explains the Chinese developer community’s early adoption and some of the star velocity.

For context on where this fits architecturally: Archon’s harness approach solves determinism through structured YAML workflows that constrain the AI’s execution path. GenericAgent solves efficiency through accumulated skills that replace expensive planning. Different problems, complementary solutions — a team could use both.

EvoMap/evolver: The Genome Evolution Protocol

EvoMap/evolver takes the same core concept — skills accumulating from execution — and frames it explicitly in biological terms. The README: “Evolution is not optional. Adapt or die.”

The GEP (Genome Evolution Protocol) treats agent behaviors as genes. Successful approaches are encoded as “gene fragments” stored in genes.json and capsules.json. The evolution pipeline scans execution logs, selects assets worth preserving, generates updated GEP prompts, and writes an audit trail to events.jsonl.

The biological analogy runs deeper than metaphor. Gene fragments can be shared between agents, inherited across model backends, and composed into new behaviors — similar to how biological organisms inherit and recombine genetic material. The EvoMap Hub (optional) provides a Skill Store where teams can fetch and share reusable skills: node index.js fetch --skill <id>.

What’s different from GenericAgent’s approach:

- Explicit audit trail. Every evolution event is logged. You can inspect what changed, when, and why.

- Rollback via Git. The system requires Git and uses it for rollback + blast-radius calculation when an evolution step goes wrong.

- Offline by default. No external API dependency for the core evolution loop.

- Safety gate. Commands must use node/npm/npx prefix; 180-second timeout enforced.

The four evolution strategies — balanced, innovate, harden, repair-only — let operators tune the system’s risk appetite. A production deployment running critical workflows would use repair-only. An exploration environment testing new capability territory would use innovate.

This is the important design tension. EvoMap/evolver is more conservative than GenericAgent by default — it assumes the evolved gene pool needs governance, not just accumulation. Whether that’s a feature or a limitation depends on the deployment context.

The SOUL.md pattern for agent identity persistence maps naturally onto EvoMap’s gene architecture: the identity spec functions as a stable gene that doesn’t evolve, while operational skills evolve freely around it.

OpenSpace and the Benchmarks That Matter

OpenSpace (5,400 stars, 656 forks) provides the most concrete benchmark data in the self-evolving agent space. The GDPVal benchmark — 50 professional tasks across compliance, engineering, and document generation — is the most controlled evaluation available.

Results: 4.2x higher income versus baseline agents using the same LLM backbone. 46% fewer tokens on real-world professional tasks. $11,484 of $15,764 possible earned in 6 hours on the benchmark ($11K / 72.8% capture rate).

The $11K-in-6-hours number will get quoted in headlines. The 46% token reduction is the more reliable signal — it’s reproducible across runs and doesn’t depend on benchmark weighting.

OpenSpace’s three evolution modes mirror what the best-performing implementations do in practice:

- FIX: repair broken instructions (the most common production use case)

- DERIVED: create specializations from successful generalist skills

- CAPTURED: extract patterns from successful executions

Nous Research’s Hermes/GEPA approach is the most technically sophisticated variant. GEPA (Genetic-Pareto Prompt Evolution) analyzes failure causes rather than just detecting failures, then proposes targeted text mutations. No GPU required. $2-10 per optimization run. If this cost curve holds at scale, self-evolving skill optimization becomes commodity infrastructure.

VentureBeat covered Memento-Skills (April 8) as the enterprise-friendly version of the same concept: evolving external memory that enables progressive capability improvement without model modification. The quote from co-author Jun Wang is worth noting: it “adds its continual learning capability to the existing offering in the current market, such as OpenClaw and Claude Code” — framing these as infrastructure additions, not replacements.

The Security Surface

This is where the coverage gets selective.

Two repos at 800 stars/day about agents that can write and execute their own skills is also a description of agents that can write and execute arbitrary code with system-level access. The community is excited about the capability story. The security implications are not receiving equal coverage.

An arXiv paper (2602.12430) is the clearest statement of the exposure: 26.1% of community-contributed skills contain vulnerabilities. The paper covers skill acquisition pathways, progressive disclosure mechanisms, and what a four-tier gate-based permission model would look like. The vulnerability finding isn’t a theoretical concern — community skill registries are already being used for credential exfiltration.

ISACA’s April 7 analysis (Richard Beck, Director of Cyber, QA) catalogs four specific risk categories:

- Visibility gaps. Agents using legitimate credentials blend into normal traffic and defeat traditional security tooling.

- Prompt-layer compromise. Instructions embedded in emails, documents, or web content hijack agent reasoning.

- Supply chain. Unvetted skill registries already distributing malicious packages.

- Direct vulnerabilities. RCE flaws and weak auth, amplified by agent autonomy.

The stat that should be in every write-up about self-evolving agents: 1 in 8 companies reported AI breaches linked to agentic systems in ISACA’s 2026 threat report. A researcher demo showed an AI agent autonomously compromising a hardened OS in under 4 hours.

Apiiro’s code execution risk research adds the blast-radius calculation that makes this visceral: in simulation, a single compromised agent poisoned 87% of downstream decision-making within 4 hours. Self-modifying agents compound this — evolved skills may introduce attack vectors not present in the seed code.

This is the supply chain attack surface for AI agents in its most direct form. When the agent can write its own tools, the skill store is the attack surface. GenericAgent and EvoMap both ship with some safety gating (EvoMap’s command whitelist and 180-second timeout; GenericAgent’s atomic tool boundaries). Neither ships with a skill registry vetting process.

The question to ask before deploying either: What’s the worst my agent can do, and is that OK?

Where This Fits: Practical Assessment

The “RIP Pull Requests (2005-2026)” framing from Latent.Space is directionally correct but premature. Stripe is producing 1,300 agent-written PRs per week — with human review still in the loop. The workflow is changing. The audit layer hasn’t collapsed.

The honest assessment of where self-evolving agents fit in a production stack today:

Use cases where skill accumulation pays now:

- Autonomous debugging loops where the agent encounters the same class of failure repeatedly

- Code generation pipelines for standardized patterns (config changes, dependency upgrades, minor refactoring)

- Research scaffolding for structured tasks with repetitive retrieval patterns

Use cases where you need more than current implementations offer:

- Novel domain problems the model hasn’t seen (no crystallized skills to draw on)

- High-security environments where unvetted skill execution is unacceptable

- Tasks requiring genuine reasoning improvement (skill trees don’t improve reasoning, only pattern retrieval)

The most useful framing comes from the HN recursive self-improvement thread: practitioners distinguish “process recursion” (skill accumulation, which works) from “weight modification” (genuine learning, which doesn’t work without training). GenericAgent and EvoMap do the former. That’s genuinely valuable — just not the science fiction version of self-improvement that the category name implies.

The prior generation of self-evolving work — MiniMax M2.7 and Darwin-Gödel — focused on weight-level adaptation. These new repos have moved the practical frontier to skill-level accumulation. Less dramatic, more deployable.

What to Watch

Three signals worth tracking as this category matures:

The security tooling gap. Capability is shipping faster than governance. A skill registry vetting process analogous to npm audit or pip safety doesn’t exist yet. When it does — and it will — it will reshape how teams deploy these systems.

The context compression thesis. GenericAgent’s core claim (6x token reduction via skill trees) is the most testable and commercially significant assertion in the category. Independent benchmarks at production scale will either validate or falsify it in the next quarter.

The convergence signal. When GitHub trending, HN, X/Twitter discourse, and the Latent.Space newsletter all point the same direction in the same day, the signal isn’t “agents are interesting.” It’s “agents are already the infrastructure.” The self-evolving repos trending today are leading indicators. Production implementations are 6-12 months out.

Sources: GenericAgent on GitHub · EvoMap/evolver on GitHub · HKUDS/OpenSpace on GitHub · NousResearch GEPA · arXiv: Comprehensive Survey of Self-Evolving AI Agents · arXiv: Skill Vulnerability Research (2602.12430) · HN: Self-Evolving AI Survey (94pts) · HN: Recursive Self-Improvement thread · VentureBeat: Memento-Skills · ISACA: Agentic AI Security · Apiiro: Code Execution Risks · Latent.Space: RIP Pull Requests

Stay in the loop

Stay updated with the latest AI agents and industry news.